Predicting Users' Membership Purchase Intent with a Logistic Regression Model

I. Background

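Paid membership is a common way for internet products to monetize. It builds user loyalty and stickiness and helps e-commerce apps grow revenue. Memberships are sold through a combination of offline sales events, online live streaming and telemarketing by agents. To keep the user experience good and to support fine-grained operations, the central task of membership-conversion operations is to pick out the valuable users from a very large user base. This article therefore uses logistic regression on users' behavioral features on the platform (logins, agreements, products, transactions, etc.) to predict each user's probability of buying a membership. Users with a high predicted probability are prioritized for outreach to improve conversion.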

Figure 1: Product membership page

II. Solution Design

2.1 Model Selection

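Predicting membership purchases is a classification problem: the target is whether a user will buy a membership. We chose logistic regression because it is highly interpretable from a business perspective. The trained model yields a linear prediction formula (a weighted sum of the features passed through the sigmoid function), which is valuable for later business analysis.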

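Logistic regression is a generalized linear model and one of the most commonly used classification models. In this case, users fall into two groups: those who have bought a membership and those who have not. We expect the two groups to differ on certain metrics. The dependent variable (y) is therefore whether a user buys a membership ("yes" or "no"). The independent variables (x) are a set of metrics describing the user's activity on the platform, such as the number of login days in the last 7 days or the average monthly transaction amount. Logistic regression estimates a weight for each independent variable, which shows roughly which factors drive a user's decision to buy a membership. The same weights can then be used to predict how likely a given user is to buy.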
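The "linear formula" is the model's log-odds: $\log\frac{p}{1-p} = w^\top x + b$, so $p = 1/(1+e^{-(w^\top x + b)})$. The minimal sketch below uses synthetic data as a stand-in for the real user features. It checks that applying the sigmoid to scikit-learn's `coef_` and `intercept_` reproduces `predict_proba`. It also shows that $e^{w_j}$ is the factor by which the odds of buying change per one-unit increase in feature $j$, holding the others fixed.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Synthetic stand-in for the user features (not the article's data)
X, y = make_classification(n_samples=500, n_features=4, n_informative=3,
                           n_redundant=0, random_state=0)
clf = LogisticRegression().fit(X, y)

w, b = clf.coef_.ravel(), clf.intercept_[0]

def purchase_probability(x):
    """P(y=1 | x) = sigmoid(w.x + b): the linear formula passed through a sigmoid."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))

manual = purchase_probability(X)
sklearn_proba = clf.predict_proba(X)[:, 1]

# exp(w_j): multiplicative change in the odds of buying per unit increase of feature j
odds_ratios = np.exp(w)
print(np.allclose(manual, sklearn_proba))
print(odds_ratios)
```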

2.2 Implementation Steps

The main analysis and deployment workflow is as follows:

- Feature engineering

- Data preprocessing

- Model training and evaluation

- Model prediction

- Post-launch performance tracking

III. Implementation

3.1 Feature Selection

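Based on the benefits included in the membership and our understanding of the users' business, we selected the features shown in the figure as the initial model inputs. Note the calculation periods. For users who have already bought a membership (positive samples), features are computed over a period before their purchase. For users who have not (negative samples), features are computed over a period ending at the current date. As a result, the features of members reflect their behavior before the purchase decision, not behavior influenced by already having a membership.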
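The windowing rule can be illustrated with a minimal pandas sketch. It assumes hypothetical tables: a user table with `is_vip` and `vip_purchase_date`, and a login log with one row per login. The article's own SQL is not shown. The window ends at the purchase date for members and at an assumed snapshot date for non-members. A feature in the style of `_7d_login_days` then counts distinct login days in the 7 days before that end point.

```python
import pandas as pd

# Hypothetical inputs: one row per user, and one row per login event
users = pd.DataFrame({
    'user_id': [1, 2],
    'is_vip': [1, 0],
    'vip_purchase_date': pd.to_datetime(['2023-09-10', None]),
})
logins = pd.DataFrame({
    'user_id': [1, 1, 1, 2, 2, 2, 2],
    'login_date': pd.to_datetime(['2023-09-05', '2023-09-08', '2023-09-12',
                                  '2023-09-20', '2023-09-25', '2023-09-28', '2023-09-30']),
})
snapshot_date = pd.Timestamp('2023-10-01')  # assumed "now" for non-members

# Window end: purchase date for members (positives), snapshot date for non-members (negatives)
users['window_end'] = users['vip_purchase_date'].where(users['is_vip'] == 1, snapshot_date)

merged = logins.merge(users[['user_id', 'window_end']], on='user_id')
in_window = (
    (merged['login_date'] < merged['window_end'])
    & (merged['login_date'] >= merged['window_end'] - pd.Timedelta(days=7))
)

# Distinct login days in the 7 days before the window end (logins after a purchase are ignored)
login_days = merged[in_window].groupby('user_id')['login_date'].nunique()
users['_7d_login_days'] = users['user_id'].map(login_days).fillna(0).astype(int)
print(users[['user_id', 'window_end', '_7d_login_days']])
```

The member's login on 2023-09-12 falls after the purchase and is excluded, which is exactly the leakage the windowing rule avoids.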

3.2 Data Preprocessing

Data collection

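We mainly use SQL to join and aggregate the large raw datasets. The result has one row per user, with each of the metrics above as a column.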

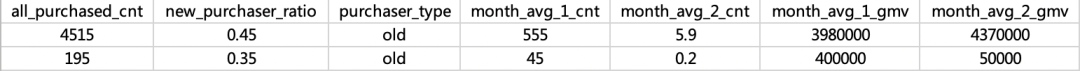
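The aggregation step corresponds to a `GROUP BY` per user. The minimal pandas sketch below shows the same idea on a hypothetical transaction log; the table and the feature names `month_avg_trd_yuan` and `active_months` are illustrative only. Monthly totals are computed first and then reduced to one row per user with one column per metric.

```python
import pandas as pd

# Hypothetical transaction log (the article's real tables and SQL are not shown)
trades = pd.DataFrame({
    'user_id': [1, 1, 2, 2, 2],
    'month': ['2023-07', '2023-08', '2023-07', '2023-08', '2023-09'],
    'amount_yuan': [100.0, 300.0, 50.0, 50.0, 200.0],
})

# Monthly totals first, then one row per user with each metric as a column
monthly = trades.groupby(['user_id', 'month'], as_index=False)['amount_yuan'].sum()
features = monthly.groupby('user_id').agg(
    month_avg_trd_yuan=('amount_yuan', 'mean'),
    active_months=('month', 'nunique'),
).reset_index()
print(features)
```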

The test data is as follows:

Handling imbalanced samples

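Normally, users who have not bought a membership (negative samples) far outnumber users who have (positive samples). Because the classes are severely imbalanced, we first rebalance them by randomly undersampling the negatives down to the number of positives.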

```python
import numpy as np
import pandas as pd

# df_vip: member samples (positives)
# df_non_vip: non-member samples (negatives)
# Randomly undersample the non-members down to the number of members
df_non_vip = df_non_vip.sample(n=df_vip.shape[0], replace=False, random_state=555)

df = pd.concat([df_vip, df_non_vip])
```
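Undersampling discards most of the negative samples. An alternative, not used in the article, keeps all samples and reweights the classes instead. This is what `class_weight='balanced'` does in the `LogisticRegression` calls that follow: each class gets the weight `n_samples / (n_classes * n_samples_in_class)`. After a 1:1 undersample, these weights are all 1, so the setting has no further effect on this data. The sketch shows the computation with scikit-learn's `compute_class_weight` on toy labels.

```python
import numpy as np
from sklearn.utils.class_weight import compute_class_weight

# Toy labels: 8 non-members (0) and 2 members (1)
y = np.array([0] * 8 + [1] * 2)
classes = np.unique(y)

weights = compute_class_weight(class_weight='balanced', classes=classes, y=y)
# Same formula written out: n_samples / (n_classes * count per class)
manual = len(y) / (len(classes) * np.bincount(y))
print({int(c): float(w) for c, w in zip(classes, weights)})  # {0: 0.625, 1: 2.5}

# After 1:1 undersampling, 'balanced' weights are all 1
y_balanced = np.array([0] * 2 + [1] * 2)
balanced_weights = compute_class_weight(class_weight='balanced',
                                        classes=np.unique(y_balanced), y=y_balanced)
print(balanced_weights)
```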

3.3 Model Training and Evaluation

Feature screening

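We started from the 47 features mapped out in Xmind. After several rounds of testing and the removal of highly collinear variables, we kept the following variables as the final model inputs. The target variable is whether the user is a member (binary, 1/0).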
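The article does not say how collinearity was measured. One simple option, shown here as an assumption and not as the authors' procedure, is to drop one feature from every pair whose absolute Pearson correlation exceeds a threshold. The threshold of 0.9 is illustrative, and the column names below are hypothetical. Variance inflation factors are a common, more thorough alternative.

```python
import numpy as np
import pandas as pd

def drop_collinear(features: pd.DataFrame, threshold: float = 0.9) -> list:
    """Return the columns to keep after removing one feature of each highly correlated pair."""
    corr = features.corr().abs()
    # Only look at the upper triangle so each pair is considered once
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
    to_drop = [col for col in upper.columns if (upper[col] > threshold).any()]
    return [col for col in features.columns if col not in to_drop]

rng = np.random.default_rng(0)
feat_a = rng.integers(0, 8, size=200)
demo = pd.DataFrame({
    'feat_a': feat_a,
    # Hypothetical near-copy of feat_a, so the pair is highly collinear
    'feat_a_copy': feat_a * 3 + rng.normal(0, 0.1, size=200),
    'feat_b': rng.integers(0, 5, size=200),
})
print(drop_collinear(demo))
```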

```python
# Feature variables
feature_columns = [
    '_7d_login_days',
    '_7d_login_cnt',
    'buy_service_cnt',
    'self_visit_days',
    'protocol_cnt',
    'month_avg_wc_trd_yuan',
]
# Target variable
target_columns = ['is_vip']
columns = feature_columns + target_columns
feature_df = df[columns]
```

Splitting the training and test sets

```python
from sklearn.model_selection import train_test_split

X_columns = feature_columns
Y_columns = target_columns
# Full feature matrix and label vector, before the split
X_train = df[X_columns].values
Y_train = df[Y_columns].values.ravel()
# Hold out 30% of the samples as the test set
train_x, test_x, train_y, test_y = train_test_split(X_train, Y_train, test_size=0.3, random_state=666)
```

Training the logistic regression model

Without any tuning, the model achieves an accuracy of 0.73, a precision of 0.75 and a recall of 0.72. We use this as the baseline model.

```python
from sklearn.linear_model import LogisticRegression

log_reg = LogisticRegression(class_weight='balanced', C=1, penalty='l2', max_iter=2000, random_state=666)
log_reg.fit(train_x, train_y)
pred_y_log = log_reg.predict(test_x)              # class labels at the default 0.5 threshold
pred_y_proba_log = log_reg.predict_proba(test_x)  # column 1 = probability of buying a membership
```
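The baseline is fit on raw features whose scales differ by orders of magnitude, for example login days versus monthly transaction amounts in yuan. With an L2 penalty, unscaled features are penalized unevenly, and coefficient sizes can only be compared across features on a common scale. A common remedy, not part of the article's code, is to standardize the features inside a scikit-learn `Pipeline`, so that the scaler is fit on the training split only. In the sketch below, synthetic data stands in for the real features.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=1000, n_features=6, n_informative=4, random_state=666)
# Simulate very different feature scales, e.g. counts vs. amounts in yuan
X[:, 5] *= 10_000

train_x, test_x, train_y, test_y = train_test_split(X, y, test_size=0.3, random_state=666)

# The scaler is fit on train_x only; the same transform is applied at prediction time
pipe = make_pipeline(
    StandardScaler(),
    LogisticRegression(class_weight='balanced', max_iter=2000, random_state=666),
)
pipe.fit(train_x, train_y)
proba = pipe.predict_proba(test_x)[:, 1]

# Coefficients now refer to standardized features, so their magnitudes are comparable
coefs = pipe.named_steps['logisticregression'].coef_.ravel()
print(pipe.score(test_x, test_y), coefs)
```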

Hyperparameter tuning with grid search

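We tune the model's hyperparameters with a grid search to get the best predictive performance. Here the optimization target of the grid search is recall. After the search, recall reaches up to 0.98 with C=0.0001 and penalty='l2'. (C is the inverse of the regularization strength, so C=0.0001 means very strong regularization.) However, such a high recall inevitably sacrifices some precision, so the model and the decision threshold still need to be adjusted to balance recall and precision.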

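```python
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV     # grid search
from sklearn.model_selection import StratifiedKFold  # cross-validation

# Note: the default solver ('lbfgs') supports only the 'l2' penalty. The 'l1' candidates
# below therefore fail to fit, and GridSearchCV scores them as NaN (with a warning), so in
# effect only 'l2' is compared. Pass solver='liblinear' or solver='saga' to search 'l1' as well.
model = LogisticRegression(class_weight='balanced', max_iter=2000, random_state=666)
C = [0.0001, 0.001, 0.01, 0.1, 1, 10, 100, 1000]
penalty = ['l1', 'l2']
param_grid = dict(C=C, penalty=penalty)
# Split the training set into 10 stratified, mutually exclusive folds
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=666)
grid_search = GridSearchCV(model, param_grid=param_grid, scoring='recall', cv=kfold, n_jobs=-1)
grid_result = grid_search.fit(train_x, train_y)
print("Best: %f using %s" % (grid_result.best_score_, grid_search.best_params_))
# Mean cross-validated recall for every parameter combination
means = grid_result.cv_results_['mean_test_score']
params = grid_result.cv_results_['params']
for mean, param in zip(means, params):
    print("%f with: %r" % (mean, param))
```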
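Maximizing recall alone pushes precision down. One way to choose an operating point is to scan `precision_recall_curve` and take the threshold with the highest recall among those that meet a minimum precision. This is sketched here as an alternative to the KS-based threshold the article uses. The 0.8 precision floor is an assumed business requirement, and synthetic scores stand in for the model output. In practice, the threshold should be chosen on validation data rather than on the final test set.

```python
import numpy as np
from sklearn.metrics import precision_recall_curve

def threshold_for_min_precision(y_true, proba, min_precision=0.8):
    """Highest-recall threshold whose precision is at least min_precision (None if none)."""
    precision, recall, thresholds = precision_recall_curve(y_true, proba)
    # precision/recall have one more entry than thresholds; drop the final (recall=0) point
    ok = precision[:-1] >= min_precision
    if not ok.any():
        return None
    best = np.argmax(np.where(ok, recall[:-1], -1.0))
    return thresholds[best], precision[best], recall[best]

rng = np.random.default_rng(666)
y_true = rng.integers(0, 2, size=2000)
# Hypothetical scores: members tend to score higher than non-members
proba = np.clip(rng.normal(0.35 + 0.3 * y_true, 0.15), 0, 1)
thr, p, r = threshold_for_min_precision(y_true, proba)
print(f'threshold={thr:.3f} precision={p:.3f} recall={r:.3f}')
```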

Determining the best threshold

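After tuning, the model's maximum KS (the largest value of TPR - FPR over all thresholds) is 0.68, which shows that the model separates positive and negative samples well. The best threshold is 0.555, compared with the model's default of 0.5. Using the grid-search hyperparameters and this threshold, we retrain the model and generate the final predictions.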

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_curve

# Retrain with the best hyperparameters from the grid search
log_reg = LogisticRegression(class_weight='balanced', C=0.0001, penalty='l2', max_iter=2000, random_state=666)
log_reg.fit(train_x, train_y)
pred_y_proba_log = log_reg.predict_proba(test_x)

# KS = max over thresholds of (TPR - FPR); the threshold reaching it is used as the cut-off
fpr, tpr, thresholds = roc_curve(test_y, pred_y_proba_log[:, 1], pos_label=1)
ks_idx = np.argmax(tpr - fpr)
KS_max = tpr[ks_idx] - fpr[ks_idx]
best_thr = thresholds[ks_idx]
print('Max KS:', KS_max)            # 0.68 in our experiment
print('Best threshold:', best_thr)  # 0.555 in our experiment

# Classify with the tuned threshold instead of predict()'s fixed 0.5
pred_y_log = [1 if prob >= best_thr else 0 for prob in pred_y_proba_log[:, 1]]
```
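The KS value is the largest gap between the true positive rate and the false positive rate over all thresholds. This equals the two-sample Kolmogorov-Smirnov statistic between the score distributions of positive and negative samples, hence the name. The sketch checks this on synthetic scores with `scipy.stats.ks_2samp`; it assumes SciPy is installed, which the article's code does not use.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.metrics import roc_curve

rng = np.random.default_rng(0)
y = np.array([1] * 300 + [0] * 700)
# Hypothetical scores: positives shifted upward
scores = np.concatenate([rng.normal(0.6, 0.15, 300), rng.normal(0.4, 0.15, 700)])

# KS from the ROC curve, as in the article
fpr, tpr, thresholds = roc_curve(y, scores, pos_label=1)
idx = np.argmax(tpr - fpr)
ks_roc, ks_threshold = tpr[idx] - fpr[idx], thresholds[idx]

# Same quantity as the maximum distance between the two empirical CDFs
ks_scipy = ks_2samp(scores[y == 1], scores[y == 0]).statistic
print(ks_roc, ks_scipy, ks_threshold)
```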

Model evaluation

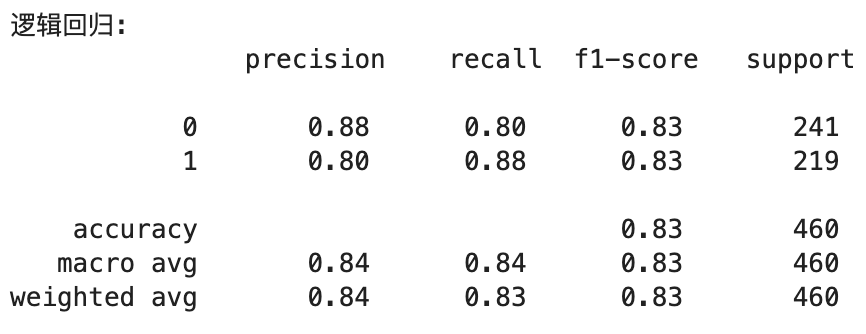

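Accuracy, precision, recall and F1

On the test set, the model achieves an accuracy of 0.83, a precision of 0.80, a recall of 0.88 and an F1 score of 0.83, all at or above 0.8. With TP, TN, FP and FN denoting the numbers of true positives, true negatives, false positives and false negatives, the metrics are defined as:

$$\text{accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

$$\text{precision} = \frac{TP}{TP + FP}$$

$$\text{recall} = \frac{TP}{TP + FN}$$

$$F_1 = \frac{2 \cdot \text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}$$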

```python
from sklearn.metrics import classification_report

# Per-class precision, recall and F1 for the thresholded predictions
print('Logistic regression:\n', classification_report(test_y, pred_y_log))
```
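The formulas can be checked by hand against scikit-learn. The sketch below computes the four metrics directly from the confusion-matrix counts on a small, made-up label vector and compares them with `accuracy_score`, `precision_score`, `recall_score` and `f1_score`.

```python
import numpy as np
from sklearn.metrics import (confusion_matrix, accuracy_score, precision_score,
                             recall_score, f1_score)

# Made-up labels and predictions for illustration
y_true = np.array([1, 1, 1, 1, 0, 0, 0, 0, 1, 0])
y_pred = np.array([1, 1, 1, 0, 0, 0, 1, 1, 1, 0])

# For binary labels [0, 1], ravel() yields TN, FP, FN, TP in that order
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

accuracy = (tp + tn) / (tp + tn + fp + fn)
precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)
print(tp, tn, fp, fn)  # 4 3 2 1
print(accuracy, precision, recall, f1)
```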

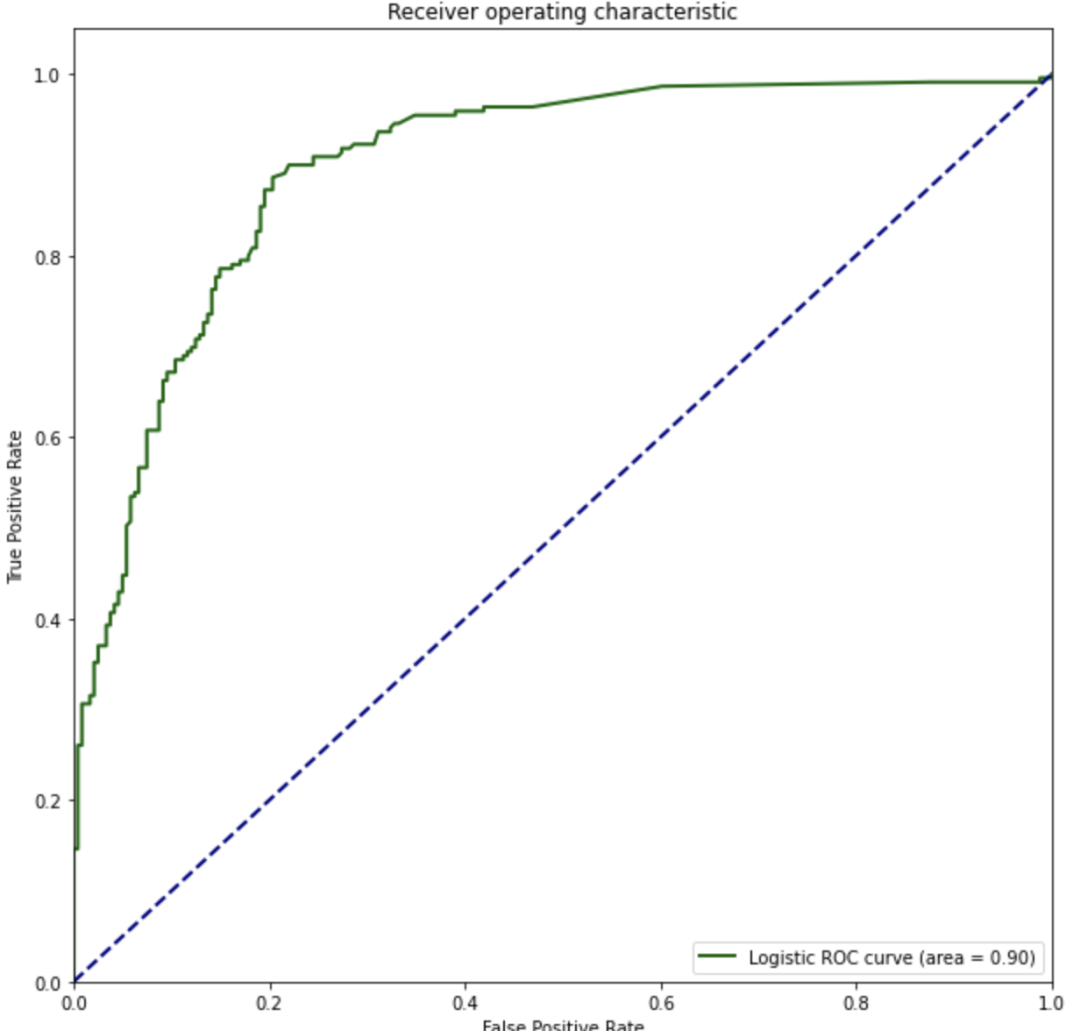

ROC and AUC

On the test set, the model's AUC reaches 0.9.

```python
import matplotlib.pyplot as plt
from sklearn.metrics import roc_curve, auc

pred_y_proba_lst = [pred_y_proba_log]
color = ['darkgreen']
model_name = ['Logistic']

def draw_roc_graph(pred_y_proba_lst, color, model_name):
    lw = 2
    plt.figure(figsize=(10, 10))
    for i, pred_y_proba in enumerate(pred_y_proba_lst):
        fpr, tpr, thresholds = roc_curve(test_y, pred_y_proba[:, 1], pos_label=1)
        roc_auc = auc(fpr, tpr)
        # False positive rate on the x-axis, true positive rate on the y-axis
        plt.plot(fpr, tpr, color=color[i], lw=lw,
                 label='%s ROC curve (area = %0.2f)' % (model_name[i], roc_auc))
    # Diagonal: a random classifier
    plt.plot([0, 1], [0, 1], color='navy', lw=lw, linestyle='--')
    plt.xlim([0.0, 1.0])
    plt.ylim([0.0, 1.05])
    plt.xlabel('False Positive Rate')
    plt.ylabel('True Positive Rate')
    plt.title('Receiver operating characteristic')
    plt.legend(loc="lower right")
    plt.show()

draw_roc_graph(pred_y_proba_lst, color, model_name)
```
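An AUC of 0.9 has a direct reading: if one member and one non-member are picked at random, the model scores the member higher about 90% of the time. The sketch below checks this pairwise interpretation against `roc_auc_score` on synthetic scores, counting ties as half.

```python
import numpy as np
from sklearn.metrics import roc_auc_score

def auc_by_pairs(y_true, scores):
    """Share of (positive, negative) pairs where the positive scores higher; ties count 1/2."""
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]  # all pairwise score differences
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size

rng = np.random.default_rng(1)
y_true = rng.integers(0, 2, size=400)
scores = np.round(rng.normal(0.5 + 0.2 * y_true, 0.2), 2)  # rounding creates some ties
print(auc_by_pairs(y_true, scores), roc_auc_score(y_true, scores))
```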

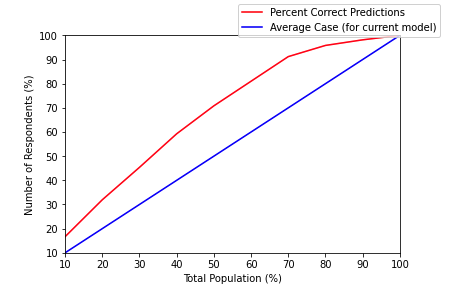

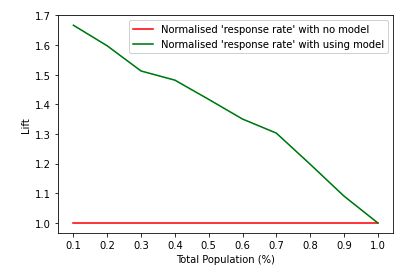

Lift and Gain

On the test set, the model's lift ranges from 1.22 to 1.67.

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.metrics import accuracy_score

# Compute cumulative gains and lift over deciles of the predicted positives
def calc_cumulative_gains(df: pd.DataFrame, actual_col: str, predicted_col: str, probability_col: str):
    # Sort by predicted probability, highest first (returns a sorted copy)
    df = df.sort_values(by=probability_col, ascending=False)
    # Keep only the users the model predicts as buyers
    subset = df[df[predicted_col] == 1]
    rows = []
    # Split the predicted positives into 10 roughly equal groups (deciles)
    for idx in np.array_split(np.arange(len(subset)), 10):
        group = subset.iloc[idx]
        # Number of correct predictions (= actual buyers) in this decile
        score = accuracy_score(group[actual_col], group[predicted_col], normalize=False)
        rows.append({'NumCases': len(group), 'NumCorrectPredictions': score})
    lift = pd.DataFrame(rows).reset_index()
    lift['percent'] = (lift['index'] + 1) / 10
    total_correct = lift['NumCorrectPredictions'].sum()
    # Cumulative gains calculation
    lift['RunningCorrect'] = lift['NumCorrectPredictions'].cumsum()
    lift['PercentCorrect'] = 100 / total_correct * lift['RunningCorrect']
    lift['CumulativeCorrectBestCase'] = lift['NumCases'].cumsum()
    lift['AvgCase'] = total_correct / len(lift)
    lift['CumulativeAvgCase'] = lift['AvgCase'].cumsum()
    lift['PercentAvgCase'] = 100 / total_correct * lift['CumulativeAvgCase']
    # Lift chart: model's cumulative response relative to the no-model baseline (= 1)
    lift['NormalisedPercentAvg'] = 1
    lift['NormalisedPercentWithModel'] = lift['PercentCorrect'] / lift['PercentAvgCase']
    return lift

# Plot the cumulative gains chart
def plot_cumulative_gains(lift: pd.DataFrame):
    fig, ax = plt.subplots()
    ax.plot(lift['PercentCorrect'], 'r-', label='Percent Correct Predictions')
    ax.plot(lift['PercentAvgCase'], 'b-', label='Average Case (for current model)')
    ax.set_xlabel('Total Population (%)')
    ax.set_ylabel('Number of Respondents (%)')
    ax.set_xlim([0, 9])
    ax.set_ylim([10, 100])
    # x positions 0..9 correspond to the deciles 10%..100%
    ax.set_xticks(range(10))
    ax.set_xticklabels([(i + 1) * 10 for i in range(10)])
    ax.legend()
    plt.show()

# Plot the lift chart
def plot_lift_chart(lift: pd.DataFrame):
    plt.figure()
    plt.plot(lift['percent'], lift['NormalisedPercentAvg'], 'r-', label="Normalised 'response rate' with no model")
    plt.plot(lift['percent'], lift['NormalisedPercentWithModel'], 'g-', label="Normalised 'response rate' using model")
    plt.xticks([0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0])
    plt.legend()
    plt.xlabel('Total Population (%)')
    plt.ylabel('Lift')
    plt.show()

# Assumed construction (not shown in the original): test labels, thresholded predictions, probabilities
result_df = pd.DataFrame({'actual_y': test_y,
                          'pred_y_log': pred_y_log,
                          'pred_proba_log': pred_y_proba_log[:, 1]})
lift = calc_cumulative_gains(result_df, 'actual_y', 'pred_y_log', 'pred_proba_log')
plot_lift_chart(lift)
plot_cumulative_gains(lift)
```
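The functions above compute gains and lift only among the users the model predicts as buyers. The more common textbook variant ranks the whole scored population by probability. It then divides the positive rate in the top k% by the overall positive rate. A compact sketch of that variant, which is not the article's method, on hypothetical, perfectly ranked data:

```python
import numpy as np
import pandas as pd

def decile_lift(y_true, proba, n_bins=10):
    """Cumulative gain and lift per decile over the whole population, ranked by probability."""
    order = np.argsort(-np.asarray(proba), kind='stable')
    y_sorted = np.asarray(y_true)[order]
    base_rate = y_sorted.mean()
    rows = []
    for k, idx in enumerate(np.array_split(np.arange(len(y_sorted)), n_bins), start=1):
        top = y_sorted[: idx[-1] + 1]  # everyone up to the end of decile k
        rows.append({'top_pct': k * 100 // n_bins,
                     'cum_gain': top.sum() / y_sorted.sum(),
                     'cum_lift': top.mean() / base_rate})
    return pd.DataFrame(rows)

# Hypothetical data: 20% positives, all ranked at the top by the scores
y = np.array([1] * 20 + [0] * 80)
proba = np.linspace(1, 0, len(y))
lift_table = decile_lift(y, proba)
print(lift_table)
```

With a 20% base rate, a perfect ranking gives a maximum lift of 5 in the top deciles, and lift always returns to 1 at 100% of the population.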

3.4 Model Prediction

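We deploy the model with PySpark, scheduled through a big-data scheduling tool, so that it can handle large volumes of data and be refreshed regularly. The predictions are pushed to downstream consumers such as the user CRM and the tagging system.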

```python
from pyhive import presto
import numpy as np
import pandas as pd
import os
import datetime
from datetime import timedelta
import time
from collections import defaultdict, Counter
import re
import json
import sys
import pyhdfs
from math import log
import sklearn
from sklearn import preprocessing
from sklearn.preprocessing import OneHotEncoder
from sklearn.preprocessing import StandardScaler
from sklearn.feature_extraction import DictVectorizer
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.cluster import KMeans
from sklearn import tree
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold  # cross-validation
from sklearn.model_selection import GridSearchCV  # grid search
from sklearn.model_selection import train_test_split  # split data into training and test sets
from sklearn.model_selection import learning_curve
from sklearn.metrics import (accuracy_score, brier_score_loss, precision_score, recall_score, f1_score)
from sklearn.metrics import confusion_matrix
from sklearn.metrics import classification_report
from sklearn.metrics import roc_curve, auc
from pyspark.sql import SparkSession
from pyspark.sql.functions import udf, col, lit, monotonically_increasing_id
from pyspark.sql.types import StringType, ArrayType
import pickle

# Section 1 of the script is not shown in the original; it is expected to provide
# `spark` (a SparkSession), the trained model `clf` and its file name `MODEL_NAME`.

#################################### 2. Load feature data ####################################
# Features of suppliers who are currently not members
df_total = spark.sql('select * from ads.ads_non_vip_supplier_feature_for_predict').toPandas()

#################################### 3. Process feature data ####################################
# Cast feature columns to the proper types
df_total['vip_first_created_date'] = pd.to_datetime(df_total['vip_first_created_date'])
df_total['gmt_created_date'] = pd.to_datetime(df_total['gmt_created_date'])
df_total['first_settled_date'] = pd.to_datetime(df_total['first_settled_date'])
df_total['new_opt_ratio'] = df_total['new_opt_ratio'].astype('float')
df_total['_30d_active_opt_ratio'] = df_total['_30d_active_opt_ratio'].astype('float')
df_total['new_purchaser_ratio'] = df_total['new_purchaser_ratio'].astype('float')
df_total['supplier_org_id'] = df_total['supplier_org_id'].astype('str')

# Features from the user's product catalog
sql1 = """
(omitted)
"""
df1 = spark.sql(sql1).toPandas()
second_back_category_id_set = set(df1.second_back_category_id_list.tolist()[0].split(','))

def cal_intersection_rate(x):
    # Share of the user's categories that also appear in the reference category set
    if pd.isna(x):
        return 0
    a = set(x.split(','))
    return len(second_back_category_id_set.intersection(a)) / len(a)

# Column name 'x' is kept as given in the original
df_total['category_intersection_rate'] = df_total['x'].apply(cal_intersection_rate)

# Log-transform GMV (0 stays 0)
df_total['log_month_avg_wc_GMV_yuan'] = df_total['month_avg_wc_GMV_yuan'].apply(lambda x: log(x) if x != 0 else 0)
print('Data size:', df_total.shape)

#################################### 4. Model prediction ####################################
# Features required by the model
feature_columns = [
    '_7d_login_days',
    '_7d_login_cnt',
    'buy_service_cnt',
    'self_visit_days',
    'protocol_cnt',
    'month_avg_wc_GMV_yuan',
]
X_columns = feature_columns
X_pred = df_total[X_columns].values

# Predicted probabilities, classified with the tuned threshold 0.555 instead of predict()'s 0.5
Y_pred_proba = clf.predict_proba(X_pred)
Y_pred = [1 if prob >= 0.555 else 0 for prob in Y_pred_proba[:, 1]]

print('Model features:', X_columns)
# Coefficients of the linear formula, sorted from largest to smallest
print('Model coefficients:', sorted(zip(X_columns, clf.coef_.ravel()), key=lambda x: x[1], reverse=True))

df_pred_result = pd.concat([pd.DataFrame(Y_pred, columns=['pred_y']),
                            pd.DataFrame(Y_pred_proba[:, 1], columns=['pred_proba'])],
                           axis=1)
df_pred_result['model_name'] = MODEL_NAME.split('.')[0]
# Attach supplier IDs by row position
df_id = df_total.reset_index()[['supplier_org_id']]
df_pred_result = df_id.reset_index().merge(df_pred_result.reset_index(), on='index', how='left')

#################################### 5. Expose anonymized user insights ####################################
# Active login: logged in within the last 7 days and on at least 7 of the last 30 days
df_total['is_active_login'] = df_total.apply(
    lambda row: 1 if row['is_7d_login'] == 1 and row['_30d_login_days'] >= 7 else 0, axis=1)

# High GMV: at or above the median of non-zero GMV
gmv_median = df_total[df_total['all_GMV_yuan'] != 0]['all_GMV_yuan'].median()
df_total['is_high_gmv'] = df_total.apply(lambda row: 1 if row['all_GMV_yuan'] >= gmv_median else 0, axis=1)

# Many listed products: at or above the median of non-zero on-shelf item counts
item_cnt_median = df_total[df_total['on_shelf_item_cnt'] != 0]['on_shelf_item_cnt'].median()
# Column name 'z' is kept as given in the original (presumably the on-shelf item count)
df_total['is_high_item_cnt'] = df_total.apply(lambda row: 1 if row['z'] >= item_cnt_median else 0, axis=1)

# Frequently visits own shop: at or above the median of non-zero visit days
self_visit_days_median = df_total[df_total['self_visit_days'] != 0]['self_visit_days'].median()
df_total['is_freq_visit_self_shop'] = df_total.apply(
    lambda row: 1 if row['self_visit_days'] >= self_visit_days_median else 0, axis=1)

# Recommendation reason
df_total['recommend_reson'] = df_total.apply(
    lambda row: ('Active logins, ' if row['is_active_login'] == 1 else '')
    + ('High sales, ' if row['is_high_gmv'] == 1 else '')
    + ('Many listed products, ' if row['is_high_item_cnt'] == 1 else '')
    + ('Focuses on shop operations' if row['is_freq_visit_self_shop'] == 1 else ''),
    axis=1)

# Keep only suppliers predicted to buy a membership
df_final = df_total.merge(df_pred_result, on='supplier_org_id', how='left')
df_final = df_final[df_final['pred_y'] == 1]
```
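The scoring script uses `clf` and `MODEL_NAME` without defining them, because the part that loads the model is not shown. The minimal sketch below shows one way to hand the trained model from training to the scoring job. It assumes pickle serialization and a hypothetical file name `vip_lr_model.pkl`; the article does not say how or where the model is stored. Pickle files should only be loaded from trusted sources and with the same scikit-learn version that wrote them.

```python
import os
import pickle
import tempfile

import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Training side: fit a stand-in model and serialize it
X, y = make_classification(n_samples=200, n_features=6, random_state=0)
trained = LogisticRegression(max_iter=2000).fit(X, y)

MODEL_NAME = 'vip_lr_model.pkl'  # hypothetical file name
model_path = os.path.join(tempfile.gettempdir(), MODEL_NAME)
with open(model_path, 'wb') as f:
    pickle.dump(trained, f)

# Scoring side: load the model and apply the article's 0.555 threshold
with open(model_path, 'rb') as f:
    clf = pickle.load(f)
proba = clf.predict_proba(X)[:, 1]
pred = (proba >= 0.555).astype(int)
print(MODEL_NAME.split('.')[0], pred[:10])
```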

3.5 Post-launch Performance Tracking

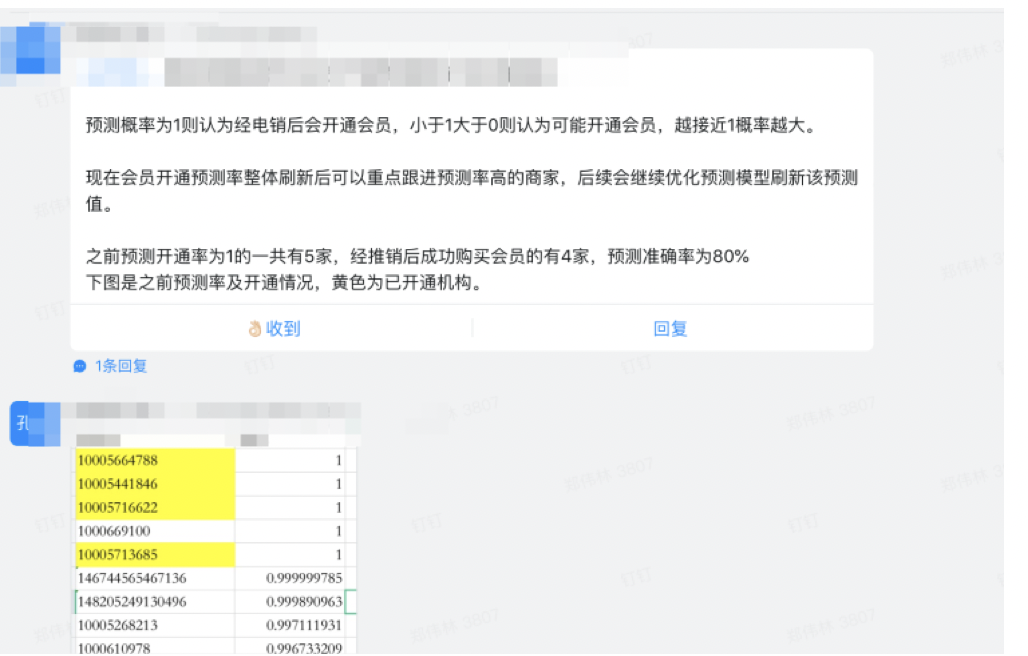

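After launch, we evaluated the model's real-world performance and accuracy. Together with the business team's internal outreach data, the results show that the model performs well.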

3.6 Conclusion

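This project used logistic regression with two results. First, its output directly supports the business, and business colleagues report that the predictions are fairly accurate. Second, the model identified the features that contribute most to membership purchases. Encouraging users to improve on these features could help grow the pool of likely buyers. We will keep iterating on the algorithm. We also plan to combine user segmentation and user profiles with the CRM system for targeted onboarding marketing and batch outreach.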

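This article is part of the Tencent Cloud self-media sync program and was shared from a WeChat official account.

Originally published: 2023-10-09. For copyright concerns, contact cloudcommunity@tencent.com to request removal.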
