Intel graphics hardware H264 MFT ProcessInput call fails after feeding a few input samples; the same works fine with the Nvidia hardware MFT

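I capture the desktop with the Desktop Duplication API, convert the samples from RGBA to NV12 on the GPU, and feed them to the Media Foundation hardware H264 MFT. This works fine with Nvidia graphics and with the software encoder, but fails when only the Intel graphics hardware MFT is available. If I fall back to the software MFT, the code works fine on the same Intel graphics machine. I have also made sure that the encoding is actually done in hardware on the Nvidia graphics machine.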

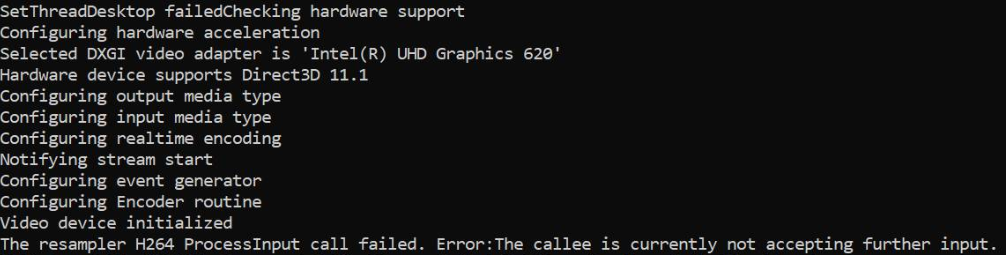

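On Intel graphics, the MFT returns MEError ("Unspecified error"). This happens only after the first sample has been fed, and subsequent calls to ProcessInput (made when the event generator fires METransformNeedInput) return "The callee is currently not accepting further input". Occasionally the MFT consumes a few more samples before returning these errors. The behavior is confusing: I feed a sample only when the event generator asynchronously triggers the IMFAsyncCallback with METransformNeedInput, and I correctly check whether METransformHaveOutput fires as soon as a sample is fed. I am really puzzled, because the same asynchronous logic works fine with the Nvidia hardware MFT and the Microsoft software encoder.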
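The feeding rule described above can be modeled portably: each METransformNeedInput permits exactly one ProcessInput call, and each METransformHaveOutput permits exactly one ProcessOutput call. This is a minimal sketch, not Media Foundation code. EventCounters and MftEvent are hypothetical names. std::mutex and std::condition_variable stand in for the Interlocked counters and the Win32 TickEvent of EncoderCallbacks. An extra error flag shows where an MEError event could stop the feeding loop, which the original callback silently ignores.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <mutex>

// Hypothetical mirror of the asynchronous MFT events the callback cares about.
enum class MftEvent { NeedInput, HaveOutput, Error };

// Portable stand-in for the EncoderCallbacks counters: the callback thread
// records events, and the encoding thread consumes them one at a time.
class EventCounters {
public:
    // Would be called from IMFAsyncCallback::Invoke after EndGetEvent.
    void onEvent(MftEvent e) {
        {
            std::lock_guard<std::mutex> lock(m_);
            switch (e) {
            case MftEvent::NeedInput:  ++needInput_;  break;
            case MftEvent::HaveOutput: ++haveOutput_; break;
            case MftEvent::Error:      error_ = true; break;
            }
        }
        cv_.notify_one();  // plays the role of SetEvent(TickEvent)
    }

    // Blocks until at least one event is pending or the timeout expires.
    bool waitForTick(std::chrono::milliseconds timeout) {
        std::unique_lock<std::mutex> lock(m_);
        return cv_.wait_for(lock, timeout, [this] {
            return needInput_ > 0 || haveOutput_ > 0 || error_;
        });
    }

    // Each successful take grants exactly one ProcessInput / ProcessOutput call.
    bool takeNeedInput()  { return take(needInput_); }
    bool takeHaveOutput() { return take(haveOutput_); }

    bool hasError() {
        std::lock_guard<std::mutex> lock(m_);
        return error_;
    }

private:
    bool take(long& counter) {
        std::lock_guard<std::mutex> lock(m_);
        if (counter == 0) return false;
        --counter;
        return true;
    }

    std::mutex m_;
    std::condition_variable cv_;
    long needInput_ = 0;
    long haveOutput_ = 0;
    bool error_ = false;
};
```

In the real encoder, the encoding thread would call takeNeedInput() before each ProcessInput and stop feeding once hasError() reports an MEError.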

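There is a similar unresolved question on Intel's own forum. My code is similar to the code in that Intel thread, except that I also set the D3D device manager on the encoder, as shown below.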

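In addition, three other Stack Overflow threads report a similar problem without any solution (a failure report, How to create IMFSample from D11 texture for Intel MFT encoder, and Asynchronous MFT is not sending MFTransformHaveOutput Event (Intel Hardware MJPEG Decoder MFT)). I have tried every option I could think of, but nothing has improved.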

The color converter code is taken from the Intel Media SDK samples. I have also uploaded the complete code here.

Method to set the D3D manager:

    void SetD3dManager() {
        HRESULT hr = S_OK;

        if (!deviceManager) {
            // Create the DXGI device manager (once)
            hr = MFCreateDXGIDeviceManager(&resetToken, &deviceManager);
            CHECK_HR(hr, "Failed to create DXGI device manager.");
        }

        if (!pD3dDevice) {
            pD3dDevice = GetDeviceDirect3D(0);
        }

        if (pD3dDevice) {
            // NOTE: Getting ready for multi-threaded operation
            const CComQIPtr<ID3D10Multithread> pMultithread = pD3dDevice;
            if (pMultithread) {
                pMultithread->SetMultithreadProtected(TRUE);
            }

            hr = deviceManager->ResetDevice(pD3dDevice, resetToken);
            CHECK_HR(hr, "Failed to reset the device on the device manager.");

            CHECK_HR(_pTransform->ProcessMessage(MFT_MESSAGE_SET_D3D_MANAGER, reinterpret_cast<ULONG_PTR>(deviceManager.p)), "Failed to set device manager.");
        }
        else {
            cout << "Failed to get d3d device";
        }

    done:  // target of CHECK_HR on failure (same convention as GetDeviceDirect3D)
        return;
    }

Method to get the D3D device:

    CComPtr<ID3D11Device> GetDeviceDirect3D(UINT idxVideoAdapter)
    {
        // Create DXGI factory:
        CComPtr<IDXGIFactory1> dxgiFactory;
        DXGI_ADAPTER_DESC1 dxgiAdapterDesc;

        // Direct3D feature level codes and names:
        struct KeyValPair { int code; const char* name; };
        const KeyValPair d3dFLevelNames[] =
        {
            KeyValPair{ D3D_FEATURE_LEVEL_9_1, "Direct3D 9.1" },
            KeyValPair{ D3D_FEATURE_LEVEL_9_2, "Direct3D 9.2" },
            KeyValPair{ D3D_FEATURE_LEVEL_9_3, "Direct3D 9.3" },
            KeyValPair{ D3D_FEATURE_LEVEL_10_0, "Direct3D 10.0" },
            KeyValPair{ D3D_FEATURE_LEVEL_10_1, "Direct3D 10.1" },
            KeyValPair{ D3D_FEATURE_LEVEL_11_0, "Direct3D 11.0" },
            KeyValPair{ D3D_FEATURE_LEVEL_11_1, "Direct3D 11.1" },
        };

        // Feature levels for Direct3D support (highest first)
        const D3D_FEATURE_LEVEL d3dFeatureLevels[] =
        {
            D3D_FEATURE_LEVEL_11_1,
            D3D_FEATURE_LEVEL_11_0,
            D3D_FEATURE_LEVEL_10_1,
            D3D_FEATURE_LEVEL_10_0,
            D3D_FEATURE_LEVEL_9_3,
            D3D_FEATURE_LEVEL_9_2,
            D3D_FEATURE_LEVEL_9_1,
        };

        // Both tables above have the same number of entries
        constexpr auto nFeatLevels = static_cast<UINT>((sizeof d3dFeatureLevels) / sizeof(D3D_FEATURE_LEVEL));

        CComPtr<IDXGIAdapter1> dxgiAdapter;
        D3D_FEATURE_LEVEL featLevelCodeSuccess = D3D_FEATURE_LEVEL_9_1;
        CComPtr<ID3D11Device> d3dDx11Device;
        std::wstring_convert<std::codecvt_utf8<wchar_t>> transcoder;
        const KeyValPair* featLevelName = nullptr;

        HRESULT hr = CreateDXGIFactory1(IID_PPV_ARGS(&dxgiFactory));
        CHECK_HR(hr, "Failed to create DXGI factory");

        // Get a video adapter:
        hr = dxgiFactory->EnumAdapters1(idxVideoAdapter, &dxgiAdapter);
        CHECK_HR(hr, "Failed to enumerate DXGI video adapter");

        // Get video adapter description:
        hr = dxgiAdapter->GetDesc1(&dxgiAdapterDesc);
        CHECK_HR(hr, "Failed to retrieve DXGI video adapter description");

        std::cout << "Selected DXGI video adapter is \'"
                  << transcoder.to_bytes(dxgiAdapterDesc.Description) << '\'' << std::endl;

        // Create Direct3D device ("0 *" deliberately disables the SINGLETHREADED flag):
        hr = D3D11CreateDevice(
            dxgiAdapter,
            D3D_DRIVER_TYPE_UNKNOWN,
            nullptr,
            (0 * D3D11_CREATE_DEVICE_SINGLETHREADED) | D3D11_CREATE_DEVICE_VIDEO_SUPPORT,
            d3dFeatureLevels,
            nFeatLevels,
            D3D11_SDK_VERSION,
            &d3dDx11Device,
            &featLevelCodeSuccess,
            nullptr
        );

        // Might have failed for lack of Direct3D 11.1 runtime:
        if (hr == E_INVALIDARG)
        {
            // Try again without Direct3D 11.1:
            hr = D3D11CreateDevice(
                dxgiAdapter,
                D3D_DRIVER_TYPE_UNKNOWN,
                nullptr,
                (0 * D3D11_CREATE_DEVICE_SINGLETHREADED) | D3D11_CREATE_DEVICE_VIDEO_SUPPORT,
                d3dFeatureLevels + 1,
                nFeatLevels - 1,
                D3D11_SDK_VERSION,
                &d3dDx11Device,
                &featLevelCodeSuccess,
                nullptr
            );
        }
        CHECK_HR(hr, "Failed to create Direct3D 11 device");

        // Get name of Direct3D feature level that succeeded upon device creation:
        featLevelName = std::find_if(d3dFLevelNames, d3dFLevelNames + nFeatLevels,
            [featLevelCodeSuccess](const KeyValPair& entry) { return entry.code == featLevelCodeSuccess; });
        if (featLevelName != d3dFLevelNames + nFeatLevels)
            std::cout << "Hardware device supports " << featLevelName->name << std::endl;

    done:
        return d3dDx11Device;
    }

Async callback implementation:

    struct EncoderCallbacks : IMFAsyncCallback
    {
        EncoderCallbacks(IMFTransform* encoder)
        {
            TickEvent = CreateEvent(0, FALSE, FALSE, 0);
            _pEncoder = encoder;
        }

        ~EncoderCallbacks()
        {
            eventGen = nullptr;
            CloseHandle(TickEvent);
        }

        bool Initialize() {
            _pEncoder->QueryInterface(IID_PPV_ARGS(&eventGen));
            if (eventGen) {
                eventGen->BeginGetEvent(this, 0);
                return true;
            }
            return false;
        }

        // Minimal IUnknown: the owner manages the lifetime, so reference counting is a no-op
        virtual HRESULT STDMETHODCALLTYPE QueryInterface(REFIID riid, void** ppvObject) override
        {
            if (!ppvObject) return E_POINTER;
            if (riid == __uuidof(IUnknown) || riid == __uuidof(IMFAsyncCallback)) {
                *ppvObject = static_cast<IMFAsyncCallback*>(this);
                return S_OK;
            }
            *ppvObject = nullptr;
            return E_NOINTERFACE;
        }
        virtual ULONG STDMETHODCALLTYPE AddRef(void) override { return 1; }
        virtual ULONG STDMETHODCALLTYPE Release(void) override { return 1; }

        virtual HRESULT STDMETHODCALLTYPE GetParameters(DWORD* pdwFlags, DWORD* pdwQueue) override
        {
            // We return immediately and do nothing except signal another thread
            *pdwFlags = MFASYNC_SIGNAL_CALLBACK;
            *pdwQueue = MFASYNC_CALLBACK_QUEUE_IO;
            return S_OK;
        }

        virtual HRESULT STDMETHODCALLTYPE Invoke(IMFAsyncResult* pAsyncResult) override
        {
            IMFMediaEvent* event = 0;
            eventGen->EndGetEvent(pAsyncResult, &event);
            if (event)
            {
                MediaEventType type;
                event->GetType(&type);
                switch (type)
                {
                case METransformNeedInput: InterlockedIncrement(&NeedsInput); break;
                case METransformHaveOutput: InterlockedIncrement(&HasOutput); break;
                case MEError:
                {
                    // Surface asynchronous MFT errors instead of silently dropping them
                    HRESULT status = S_OK;
                    event->GetStatus(&status);
                    std::cout << "MFT reported MEError, status 0x" << std::hex
                              << static_cast<unsigned long>(status) << std::dec << std::endl;
                    break;
                }
                default: break;
                }
                event->Release();
                SetEvent(TickEvent);
            }
            eventGen->BeginGetEvent(this, 0);
            return S_OK;
        }

        CComQIPtr<IMFMediaEventGenerator> eventGen = nullptr;
        HANDLE TickEvent;
        IMFTransform* _pEncoder = nullptr;
        // LONG so the Interlocked* functions operate on them directly
        volatile LONG NeedsInput = 0;
        volatile LONG HasOutput = 0;
    };

Method that generates samples:

    bool GenerateSampleAsync() {
        DWORD processOutputStatus = 0;
        HRESULT mftProcessOutput = S_OK;
        bool frameSent = false;

        // Create sample
        CComPtr<IMFSample> currentVideoSample = nullptr;
        MFT_OUTPUT_STREAM_INFO StreamInfo;

        // Wait for any callback to come in
        WaitForSingleObject(_pEventCallback->TickEvent, INFINITE);

        while (_pEventCallback->NeedsInput) {
            if (!currentVideoSample) {
                (pDesktopDuplication)->releaseBuffer();
                (pDesktopDuplication)->cleanUpCurrentFrameObjects();
                bool bTimeout = false;
                if (pDesktopDuplication->GetCurrentFrameAsVideoSample((void**)&currentVideoSample, waitTime, bTimeout, deviceRect, deviceRect.Width(), deviceRect.Height())) {
                    prevVideoSample = currentVideoSample;
                }
                // Feed the previous sample to the encoder in case of no update in display
                else {
                    currentVideoSample = prevVideoSample;
                }
            }

            if (currentVideoSample)
            {
                InterlockedDecrement(&_pEventCallback->NeedsInput);
                _frameCount++;
                CHECK_HR(currentVideoSample->SetSampleTime(mTimeStamp), "Error setting the video sample time.");
                CHECK_HR(currentVideoSample->SetSampleDuration(VIDEO_FRAME_DURATION), "Error setting the video sample duration.");
                CHECK_HR(_pTransform->ProcessInput(inputStreamID, currentVideoSample, 0), "The H264 MFT ProcessInput call failed.");
                mTimeStamp += VIDEO_FRAME_DURATION;
            }
        }

        while (_pEventCallback->HasOutput) {
            CComPtr<IMFSample> mftOutSample = nullptr;
            CComPtr<IMFMediaBuffer> pOutMediaBuffer = nullptr;
            InterlockedDecrement(&_pEventCallback->HasOutput);

            CHECK_HR(_pTransform->GetOutputStreamInfo(outputStreamID, &StreamInfo), "Failed to get output stream info from H264 MFT.");

            // If the MFT allocates output samples itself, pSample must be NULL
            const bool mftProvidesSamples = (StreamInfo.dwFlags & MFT_OUTPUT_STREAM_PROVIDES_SAMPLES) != 0;
            if (!mftProvidesSamples) {
                CHECK_HR(MFCreateSample(&mftOutSample), "Failed to create MF sample.");
                CHECK_HR(MFCreateMemoryBuffer(StreamInfo.cbSize, &pOutMediaBuffer), "Failed to create memory buffer.");
                CHECK_HR(mftOutSample->AddBuffer(pOutMediaBuffer), "Failed to add buffer to sample.");
            }

            MFT_OUTPUT_DATA_BUFFER _outputDataBuffer;
            memset(&_outputDataBuffer, 0, sizeof _outputDataBuffer);
            _outputDataBuffer.dwStreamID = outputStreamID;
            _outputDataBuffer.dwStatus = 0;
            _outputDataBuffer.pEvents = nullptr;
            _outputDataBuffer.pSample = mftOutSample;  // NULL when the MFT provides samples

            mftProcessOutput = _pTransform->ProcessOutput(0, 1, &_outputDataBuffer, &processOutputStatus);

            // Take ownership of an MFT-allocated sample so it is released with mftOutSample
            if (mftProvidesSamples && _outputDataBuffer.pSample)
                mftOutSample.Attach(_outputDataBuffer.pSample);

            // The caller must release any events the MFT attached
            if (_outputDataBuffer.pEvents)
                _outputDataBuffer.pEvents->Release();

            if (SUCCEEDED(mftProcessOutput))
            {
                if (_outputDataBuffer.pSample) {
                    CComPtr<IMFMediaBuffer> buf = NULL;
                    DWORD bufLength = 0;
                    CHECK_HR(_outputDataBuffer.pSample->ConvertToContiguousBuffer(&buf), "ConvertToContiguousBuffer failed.");
                    if (buf) {
                        CHECK_HR(buf->GetCurrentLength(&bufLength), "Get buffer length failed.");
                        BYTE* rawBuffer = NULL;
                        // live555 convention: copy at most fMaxSize bytes and report the rest as truncated
                        fFrameSize = bufLength > fMaxSize ? fMaxSize : bufLength;
                        fNumTruncatedBytes = bufLength - fFrameSize;
                        fDurationInMicroseconds = 0;
                        gettimeofday(&fPresentationTime, NULL);
                        CHECK_HR(buf->Lock(&rawBuffer, NULL, NULL), "Failed to lock output buffer.");
                        memmove(fTo, rawBuffer, fFrameSize);
                        buf->Unlock();
                        bytesTransfered += bufLength;
                        // Hand the frame to live555 only after the buffer is unlocked
                        FramedSource::afterGetting(this);
                        frameSent = true;
                    }
                }
            }
            else if (MF_E_TRANSFORM_STREAM_CHANGE == mftProcessOutput) {
                // Some encoders want to renegotiate the output format.
                if (_outputDataBuffer.dwStatus & MFT_OUTPUT_DATA_BUFFER_FORMAT_CHANGE)
                {
                    CComPtr<IMFMediaType> pNewOutputMediaType = nullptr;
                    HRESULT res = _pTransform->GetOutputAvailableType(outputStreamID, 1, &pNewOutputMediaType);
                    CHECK_HR(res, "Failed to get the new output type during stream change");
                    res = _pTransform->SetOutputType(outputStreamID, pNewOutputMediaType, 0); // setting the type again
                    CHECK_HR(res, "Failed to set output type during stream change");
                }
            }
            else if (MF_E_TRANSFORM_NEED_MORE_INPUT == mftProcessOutput) {
                // Not an error: the MFT has no output until it receives more input
            }
            else {
                HandleFailure();
            }
        }

    done:  // target of CHECK_HR on failure
        return frameSent;
    }

Creating the video sample and converting the color format:

    bool GetCurrentFrameAsVideoSample(void **videoSample, int waitTime, bool &isTimeout, CRect &deviceRect, int surfaceWidth, int surfaceHeight)
    {
        FRAME_DATA currentFrameData;
        m_LastErrorCode = m_DuplicationManager.GetFrame(&currentFrameData, waitTime, &isTimeout);

        if (!isTimeout && SUCCEEDED(m_LastErrorCode)) {
            m_CurrentFrameTexture = currentFrameData.Frame;

            if (!pDstTexture) {
                D3D11_TEXTURE2D_DESC desc;
                ZeroMemory(&desc, sizeof(D3D11_TEXTURE2D_DESC));
                desc.Format = DXGI_FORMAT_NV12;
                desc.Width = surfaceWidth;
                desc.Height = surfaceHeight;
                desc.MipLevels = 1;
                desc.ArraySize = 1;
                desc.SampleDesc.Count = 1;
                desc.CPUAccessFlags = 0;
                desc.Usage = D3D11_USAGE_DEFAULT;
                desc.BindFlags = D3D11_BIND_RENDER_TARGET;
                m_LastErrorCode = m_Id3d11Device->CreateTexture2D(&desc, NULL, &pDstTexture);
            }

            if (m_CurrentFrameTexture && pDstTexture) {
                // Copy diff area texels to new temp texture
                //m_Id3d11DeviceContext->CopySubresourceRegion(pNewTexture, D3D11CalcSubresource(0, 0, 1), 0, 0, 0, m_CurrentFrameTexture, 0, NULL);

                // Convert the captured RGBA frame into the NV12 texture on the GPU
                HRESULT hr = pColorConv->Convert(m_CurrentFrameTexture, pDstTexture);
                if (SUCCEEDED(hr)) {
                    CComPtr<IMFMediaBuffer> pMediaBuffer = nullptr;
                    hr = MFCreateDXGISurfaceBuffer(__uuidof(ID3D11Texture2D), pDstTexture, 0, FALSE, &pMediaBuffer);
                    if (SUCCEEDED(hr) && pMediaBuffer) {
                        CComPtr<IMF2DBuffer> p2DBuffer = NULL;
                        DWORD length = 0;
                        // Set the current length to the contiguous size of the NV12 surface
                        if (SUCCEEDED(pMediaBuffer->QueryInterface(__uuidof(IMF2DBuffer), reinterpret_cast<void**>(&p2DBuffer)))
                            && SUCCEEDED(p2DBuffer->GetContiguousLength(&length))) {
                            pMediaBuffer->SetCurrentLength(length);
                        }

                        //MFCreateVideoSampleFromSurface(NULL, (IMFSample**)videoSample);
                        IMFSample** ppSample = reinterpret_cast<IMFSample**>(videoSample);
                        if (SUCCEEDED(MFCreateSample(ppSample)) && *ppSample) {
                            (*ppSample)->AddBuffer(pMediaBuffer);
                            return true;
                        }
                    }
                }
            }
        }
        return false;
    }

The Intel graphics driver on the machine is already up to date.

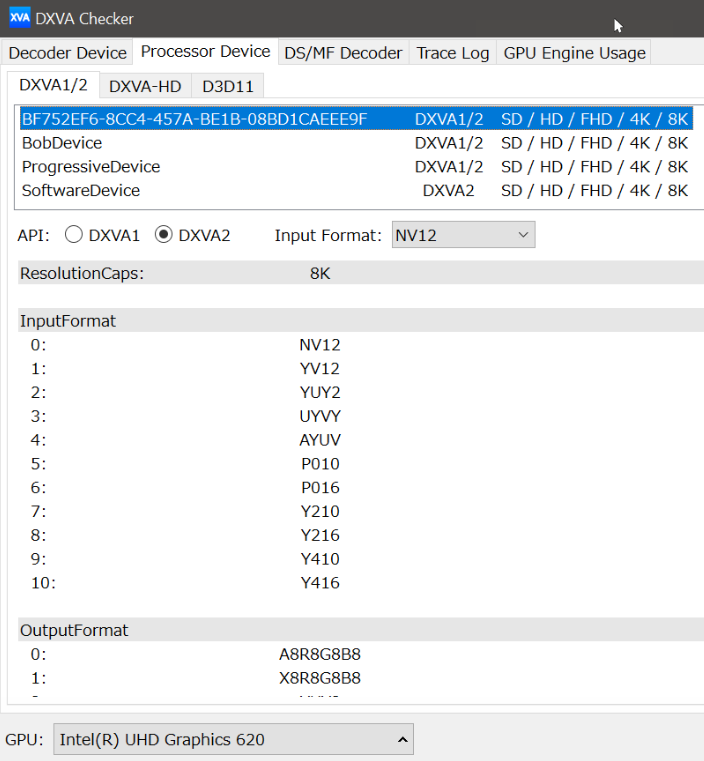

Only the METransformNeedInput event keeps firing, yet the encoder complains that it cannot accept more input. The METransformHaveOutput event is never fired.
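Several distinct result codes are involved in these symptoms, and a small classifier makes the intended handling explicit. This is a hedged sketch. The numeric values mirror the definitions in mferror.h (check them against your SDK, or use the macros directly when building on Windows), and ClassifyMftResult and MftAction are hypothetical names. Success is tested first, so failure codes such as MF_E_TRANSFORM_STREAM_CHANGE are never treated as output. MF_E_NOTACCEPTING (the "callee is currently not accepting further input" error) is kept separate, because an asynchronous MFT typically returns it when ProcessInput is called without a pending METransformNeedInput.

```cpp
#include <cassert>
#include <cstdint>

// Values mirror mferror.h; on Windows, include <mferror.h> and use the macros instead.
constexpr std::uint32_t kMF_E_NOTACCEPTING              = 0xC00D36B5;
constexpr std::uint32_t kMF_E_TRANSFORM_STREAM_CHANGE   = 0xC00D6D61;
constexpr std::uint32_t kMF_E_TRANSFORM_NEED_MORE_INPUT = 0xC00D6D72;

// Hypothetical set of actions the encoding loop can take after an MFT call.
enum class MftAction { Consume, WaitForInput, Renegotiate, NotAccepting, Fail };

// Classify an HRESULT returned by ProcessInput/ProcessOutput.
MftAction ClassifyMftResult(std::uint32_t hr) {
    if ((hr & 0x80000000u) == 0) return MftAction::Consume;  // SUCCEEDED(hr)
    switch (hr) {
    case kMF_E_TRANSFORM_NEED_MORE_INPUT: return MftAction::WaitForInput;
    case kMF_E_TRANSFORM_STREAM_CHANGE:   return MftAction::Renegotiate;
    case kMF_E_NOTACCEPTING:              return MftAction::NotAccepting;
    default:                              return MftAction::Fail;  // e.g. E_FAIL
    }
}
```

With this split, an unexpected NotAccepting result points at the event bookkeeping, while Fail (for example E_FAIL, the "Unspecified error" carried by MEError) points at the device or the input surface.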

Similar issues reported on the Intel and MSDN forums:

1. https://software.intel.com/en-us/forums/intel-media-sdk/topic/607189
2. https://social.msdn.microsoft.com/Forums/SECURITY/en-US/fe051dd5-b522-4e4b-9cbb-2c06a5450e40/imfsinkwriter-merit-validation-failed-for-mft-intel-quick-sync-video-h264-encoder-mft?forum=mediafoundationdevelopment

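Update: I tried mocking only the input source (programmatically creating an NV12 sample with an animated rectangle) and left everything else untouched. This time the Intel encoder doesn't complain at all, and I even get output samples. However, the Intel encoder's output video is distorted, whereas the Nvidia encoder works perfectly.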

I still get the same error with the Intel encoder when using my original NV12 source. I have no problems with the Nvidia MFT or the software encoder.
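A synthetic NV12 sample is accepted while the converted desktop frame is not. One thing worth comparing between the two is the NV12 layout itself: a full-resolution Y plane followed by an interleaved UV plane at half vertical resolution, with even width and height. The helper below is a hypothetical illustration (Nv12Layout and ComputeNv12Layout are not APIs). It assumes the UV plane directly follows the Y plane with the same row pitch. Real D3D11 textures may pad rows, so compare against the pitch the driver reports rather than assuming that the pitch equals the width.

```cpp
#include <cassert>
#include <cstddef>
#include <optional>

struct Nv12Layout {
    std::size_t yPlaneBytes;   // pitch * height luma bytes
    std::size_t uvPlaneBytes;  // pitch * height / 2 interleaved U/V bytes
    std::size_t uvOffset;      // byte offset of the UV plane
    std::size_t totalBytes;    // pitch * height * 3 / 2
};

// Returns the NV12 layout for (width, height, pitch), or nullopt when the
// dimensions are not valid for 4:2:0 chroma subsampling.
std::optional<Nv12Layout> ComputeNv12Layout(std::size_t width, std::size_t height, std::size_t pitch) {
    if (width == 0 || height == 0 || width % 2 != 0 || height % 2 != 0 || pitch < width)
        return std::nullopt;
    Nv12Layout l{};
    l.yPlaneBytes = pitch * height;
    l.uvPlaneBytes = pitch * (height / 2);
    l.uvOffset = l.yPlaneBytes;
    l.totalBytes = l.yPlaneBytes + l.uvPlaneBytes;
    return l;
}
```

For a 1920x1080 frame with a tight pitch, this gives a 3,110,400-byte buffer with the UV plane starting at byte 2,073,600.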

Output of the Intel hardware MFT (please compare it with the Nvidia encoder's output):

Output of the Nvidia hardware MFT:

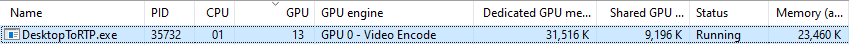

Nvidia graphics usage statistics:

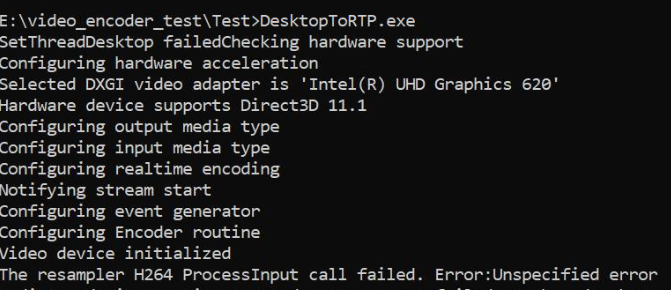

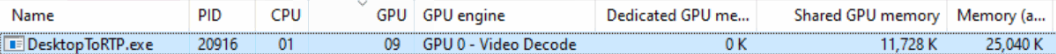

Intel graphics usage statistics (I don't understand why the GPU engine shows up as Video Decode):

2 Answers

Stack Overflow user

Posted on 2019-11-22 10:40:58

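As mentioned in the question, on Intel hardware the MFT's event generator returned MEError ("Unspecified error") right after the first input sample was fed, and further calls returned "Transform needs more input", but no output was produced. The same code worked fine on Nvidia machines. After a lot of experimentation and research, I found that I was creating too many ID3D11Device instances: in my case I created two to three devices, one each for capture, color conversion, and the hardware encoder, whereas I could simply have reused a single ID3D11Device instance. Creating multiple ID3D11Device instances may work on high-end machines, but this is not documented anywhere. I couldn't find any clue about the cause of the "MEError" either; it isn't mentioned anywhere. Many Stack Overflow threads similar to this one remain unanswered, and even Microsoft staff couldn't pinpoint the issue despite having the complete source code.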

Reusing a single ID3D11Device instance solved the problem. I'm posting this solution because it may help anyone who runs into the same issue.
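The fix can be sketched as a design pattern: create the device once and hand the same reference to every pipeline stage. The classes below are hypothetical placeholders. Device stands in for ID3D11Device, and shared_ptr stands in for CComPtr. In real code, the shared object would be the single device returned by GetDeviceDirect3D. That same device would be passed to IDXGIOutput1::DuplicateOutput for capture, to the color converter, and to IMFDXGIDeviceManager::ResetDevice before MFT_MESSAGE_SET_D3D_MANAGER.

```cpp
#include <cassert>
#include <memory>

// Hypothetical stand-in for ID3D11Device; counts how many devices were created.
struct Device {
    static inline int created = 0;
    Device() { ++created; }
};

// Each pipeline stage keeps a reference to the device it was given
// instead of creating its own.
struct DesktopCapture  { std::shared_ptr<Device> device; };
struct ColorConverter  { std::shared_ptr<Device> device; };
struct HardwareEncoder { std::shared_ptr<Device> device; };  // would go to the DXGI device manager

struct Pipeline {
    // Declared first, so it is initialized before the stages that copy it.
    std::shared_ptr<Device> device = std::make_shared<Device>();
    DesktopCapture capture{device};
    ColorConverter converter{device};
    HardwareEncoder encoder{device};
};
```

Because every stage shares one device, textures produced by capture and color conversion can be consumed by the encoder without crossing device boundaries.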

Stack Overflow user

Posted on 2019-11-13 20:26:25

I looked at your code.

Based on your post, I suspect a problem with the Intel video processor.

My OS is Win7, so I decided to test the video processor's behavior with a D3D9Device on my Nvidia card, and then on the Intel 4000.

I assume the video processor capabilities behave the same way for D3D9Device and D3D11Device. Of course, this needs to be verified.

So I wrote this program to check it: https://github.com/mofo7777/DirectXVideoScreen (see the D3D9VideoProcessor subproject)

It seems you don't check enough about the video processor capabilities.

With IDXVAHD_Device::GetVideoProcessorDeviceCaps, here is what I check:

- DXVAHD_VPDEVCAPS.MaxInputStreams > 0
- DXVAHD_VPDEVCAPS.VideoProcessorCount > 0
- DXVAHD_VPDEVCAPS.OutputFormatCount > 0
- DXVAHD_VPDEVCAPS.InputFormatCount > 0
- DXVAHD_VPDEVCAPS.InputPool == D3DPOOL_DEFAULT
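The checks listed above amount to a single predicate. In real code, the values come from IDXVAHD_Device::GetVideoProcessorDeviceCaps filling a DXVAHD_VPDEVCAPS. VpDevCaps below is a hypothetical, portable mirror of just those five fields, and Pool mirrors D3DPOOL (D3DPOOL_DEFAULT is 0 in d3d9types.h).

```cpp
#include <cassert>

// Hypothetical mirror of D3DPOOL; D3DPOOL_DEFAULT is 0.
enum class Pool { Default = 0, Managed = 1, SystemMem = 2, Scratch = 3 };

// Portable mirror of the DXVAHD_VPDEVCAPS fields checked in the answer.
struct VpDevCaps {
    unsigned maxInputStreams;
    unsigned videoProcessorCount;
    unsigned outputFormatCount;
    unsigned inputFormatCount;
    Pool inputPool;
};

// True when the device exposes at least one usable video processor
// and accepts input surfaces in the default (video memory) pool.
bool IsVideoProcessorUsable(const VpDevCaps& caps) {
    return caps.maxInputStreams > 0 &&
           caps.videoProcessorCount > 0 &&
           caps.outputFormatCount > 0 &&
           caps.inputFormatCount > 0 &&
           caps.inputPool == Pool::Default;
}
```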

I also checked the supported input and output formats with IDXVAHD_Device::GetVideoProcessorOutputFormats and IDXVAHD_Device::GetVideoProcessorInputFormats.

This is where I found a difference between the Nvidia GPU and the Intel GPU.

NVIDIA: 4 output formats

- D3DFMT_A8R8G8B8

- D3DFMT_X8R8G8B8

- D3DFMT_YUY2

- D3DFMT_NV12

Intel: 3 output formats

- D3DFMT_A8R8G8B8

- D3DFMT_X8R8G8B8

- D3DFMT_YUY2

On the Intel 4000, the NV12 output format is not supported.
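NV12 is a FOURCC-based D3DFORMAT, so the difference between the two lists can be checked programmatically on the array returned by IDXVAHD_Device::GetVideoProcessorOutputFormats. The sketch below portably mirrors the MAKEFOURCC packing and the two RGB format values from d3d9types.h. SupportsOutputFormat is a hypothetical helper, and the two vectors in the test simply restate the lists reported above.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

// Same packing as the MAKEFOURCC macro: first character in the low byte.
constexpr std::uint32_t MakeFourCC(char a, char b, char c, char d) {
    return static_cast<std::uint32_t>(static_cast<unsigned char>(a)) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(b)) << 8) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(c)) << 16) |
           (static_cast<std::uint32_t>(static_cast<unsigned char>(d)) << 24);
}

constexpr std::uint32_t kD3DFMT_A8R8G8B8 = 21;
constexpr std::uint32_t kD3DFMT_X8R8G8B8 = 22;
constexpr std::uint32_t kD3DFMT_YUY2 = MakeFourCC('Y', 'U', 'Y', '2');
constexpr std::uint32_t kD3DFMT_NV12 = MakeFourCC('N', 'V', '1', '2');

// True if `format` appears in the list of formats reported by the video processor.
bool SupportsOutputFormat(const std::vector<std::uint32_t>& formats, std::uint32_t format) {
    return std::find(formats.begin(), formats.end(), format) != formats.end();
}
```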

In addition, for the program to work correctly, I had to set these stream states before calling VideoProcessBltHD:

- DXVAHD_STREAM_STATE_D3DFORMAT

- DXVAHD_STREAM_STATE_FRAME_FORMAT

- DXVAHD_STREAM_STATE_INPUT_COLOR_SPACE

- DXVAHD_STREAM_STATE_SOURCE_RECT

- DXVAHD_STREAM_STATE_DESTINATION_RECT
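One way to make sure none of these five states is forgotten is to track them in a bitmask and refuse to call VideoProcessBltHD until all of them are set. StreamState and StreamStateTracker are hypothetical names. In real code, each MarkSet call would follow a successful IDXVAHD_VideoProcessor::SetVideoProcessStreamState for that state.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical subset of DXVAHD_STREAM_STATE values that had to be set.
enum class StreamState : std::uint8_t {
    D3DFormat, FrameFormat, InputColorSpace, SourceRect, DestinationRect, Count
};

class StreamStateTracker {
public:
    void MarkSet(StreamState s) { mask_ |= Bit(s); }

    // True only when every required state has been set at least once.
    bool ReadyForBlt() const { return mask_ == kAll; }

private:
    static constexpr std::uint32_t Bit(StreamState s) {
        return 1u << static_cast<std::uint32_t>(s);
    }
    static constexpr std::uint32_t kAll =
        (1u << static_cast<std::uint32_t>(StreamState::Count)) - 1u;
    std::uint32_t mask_ = 0;
};
```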

For D3D11, the equivalents are:

- ID3D11VideoProcessorEnumerator::GetVideoProcessorCaps == IDXVAHD_Device::GetVideoProcessorDeviceCaps
- ID3D11VideoProcessorEnumerator::CheckVideoProcessorFormat (D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT_OUTPUT) == IDXVAHD_Device::GetVideoProcessorOutputFormats
- ID3D11VideoProcessorEnumerator::CheckVideoProcessorFormat (D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT_INPUT) == IDXVAHD_Device::GetVideoProcessorInputFormats
- (...) == IDXVAHD_VideoProcessor::SetVideoProcessStreamState
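On D3D11, the NV12 output question reduces to one call per format: ID3D11VideoProcessorEnumerator::CheckVideoProcessorFormat(DXGI_FORMAT_NV12, &flags), followed by a test of the returned flags. The portable sketch below mirrors the two D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT flag values from d3d11.h. CanUseAsInput and CanUseAsOutput are hypothetical helpers.

```cpp
#include <cassert>
#include <cstdint>

// Mirrors D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT in d3d11.h.
constexpr std::uint32_t kFormatSupportInput  = 0x1;  // D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT_INPUT
constexpr std::uint32_t kFormatSupportOutput = 0x2;  // D3D11_VIDEO_PROCESSOR_FORMAT_SUPPORT_OUTPUT

// flags: the value written by CheckVideoProcessorFormat for one DXGI format.
bool CanUseAsInput(std::uint32_t flags)  { return (flags & kFormatSupportInput) != 0; }
bool CanUseAsOutput(std::uint32_t flags) { return (flags & kFormatSupportOutput) != 0; }
```

An RGBA-to-NV12 converter built on the D3D11 video processor would need CanUseAsOutput to be true for DXGI_FORMAT_NV12 on the adapter in use.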

Could you first verify the video processor capabilities of your GPUs? Do you see the same difference that I see?

这是我们需要知道的第一件事,从我在github项目中看到的情况来看,您的程序似乎没有检查这一点。

https://stackoverflow.com/questions/58779958
