Swift: AVAudioEngine and AVAudioSinkNode sampleRate conversion

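I have been having problems with this for a while, and have written the following Swift file, which can be run as the main view controller file of an app. When executed, it plays a short burst of a 1 kHz sine wave and simultaneously records from the input of the audio interface.

For now I have the output plugged into the input for testing. It could just as well be the computer's built-in speakers and microphone (just check the volume in the system settings before running the app, because it starts playing automatically).

I cannot get this to give me an accurate result: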

import UIKit
import AVFoundation

var globalSampleRate = 48000

class ViewController: UIViewController {

    var micBuffer:[Float] = Array(repeating:0, count:10000)
    var referenceBuffer:[Float] = Array(repeating:0, count:10000)
    var running:Bool = false
    var engine = AVAudioEngine()

    override func viewDidLoad() {
        super.viewDidLoad()

        let syncQueue = DispatchQueue(label:"Audio Engine")
        syncQueue.sync{
            initializeAudioEngine()
            while running == true {
            }
            engine.stop()
            writetoFile(buff: micBuffer, name: "Mic Input")
            writetoFile(buff: referenceBuffer, name: "Reference")
        }
    }

    func initializeAudioEngine(){

        var micBufferPosition:Int = 0
        var refBufferPosition:Int = 0
        let frequency:Float = 1000.0
        let amplitude:Float = 1.0
        let signal = { (time: Float) -> Float in
            return amplitude * sin(2.0 * Float.pi * frequency * time)
        }

        let deltaTime = 1.0 / Float(globalSampleRate)
        var time: Float = 0

        let micSinkNode = AVAudioSinkNode() { (timeStamp, frames, audioBufferList) -> OSStatus in

            let ptr = audioBufferList.pointee.mBuffers.mData?.assumingMemoryBound(to: Float.self)
            var monoSamples = [Float]()
            monoSamples.append(contentsOf: UnsafeBufferPointer(start: ptr, count: Int(frames)))
            for frame in 0..<frames {
                self.micBuffer[micBufferPosition + Int(frame)] = monoSamples[Int(frame)]
            }
            micBufferPosition += Int(frames)

            if micBufferPosition > 8000 {
                self.running = false
            }

            return noErr
        }

        let srcNode = AVAudioSourceNode { _, _, frameCount, audioBufferList -> OSStatus in

            let ablPointer = UnsafeMutableAudioBufferListPointer(audioBufferList)
            for frame in 0..<Int(frameCount) {
                let value = signal(time)
                time += deltaTime

                for buffer in ablPointer {
                    let buf: UnsafeMutableBufferPointer<Float> = UnsafeMutableBufferPointer(buffer)
                    buf[frame] = value
                    self.referenceBuffer[refBufferPosition + frame] = value
                }
            }
            refBufferPosition += Int(frameCount)

            return noErr
        }

        let inputFormat = engine.inputNode.inputFormat(forBus: 0)
        let outputFormat = engine.outputNode.outputFormat(forBus: 0)
        let nativeFormat = AVAudioFormat(commonFormat: .pcmFormatFloat32,
                                         sampleRate: Double(globalSampleRate),
                                         channels: 1,
                                         interleaved: false)

        let formatMixer = AVAudioMixerNode()
        engine.attach(formatMixer)
        engine.attach(micSinkNode)
        engine.attach(srcNode)

        //engine.connect(engine.inputNode, to: micSinkNode, format: inputFormat)
        engine.connect(engine.inputNode, to: formatMixer, format: inputFormat)
        engine.connect(formatMixer, to: micSinkNode, format: nativeFormat)
        engine.connect(srcNode, to: engine.mainMixerNode, format: nativeFormat)
        engine.connect(engine.mainMixerNode, to: engine.outputNode, format: outputFormat)

        print("micSinkNode Format is \(micSinkNode.inputFormat(forBus: 0))")
        print("inputNode Format is \(engine.inputNode.inputFormat(forBus: 0))")
        print("outputNode Format is \(engine.outputNode.outputFormat(forBus: 0))")
        print("formatMixer Format is \(formatMixer.outputFormat(forBus: 0))")

        engine.prepare()
        running = true
        do {
            try engine.start()
        } catch {
            print("Error")
        }
    }

}

func writetoFile(buff:[Float], name:String){

    let outputFormatSettings = [
        AVFormatIDKey:kAudioFormatLinearPCM,
        AVLinearPCMBitDepthKey:32,
        AVLinearPCMIsFloatKey: true,
        AVLinearPCMIsBigEndianKey: true,
        AVSampleRateKey: globalSampleRate,
        AVNumberOfChannelsKey: 1
    ] as [String : Any]

    let fileName = name
    let DocumentDirURL = try! FileManager.default.url(for: .documentDirectory, in: .userDomainMask, appropriateFor: nil, create: true)

    let url = DocumentDirURL.appendingPathComponent(fileName).appendingPathExtension("wav")
    print("FilePath: \(url.path)")

    let audioFile = try? AVAudioFile(forWriting: url, settings: outputFormatSettings, commonFormat: AVAudioCommonFormat.pcmFormatFloat32, interleaved: false)

    let bufferFormat = AVAudioFormat(settings: outputFormatSettings)

    let outputBuffer = AVAudioPCMBuffer(pcmFormat: bufferFormat!, frameCapacity: AVAudioFrameCount(buff.count))

    for i in 0..<buff.count {
        outputBuffer?.floatChannelData!.pointee[i] = Float(( buff[i] ))
    }
    outputBuffer!.frameLength = AVAudioFrameCount( buff.count )

    do{
        try audioFile?.write(from: outputBuffer!)
    } catch let error as NSError {
        print("error:", error.localizedDescription)
    }

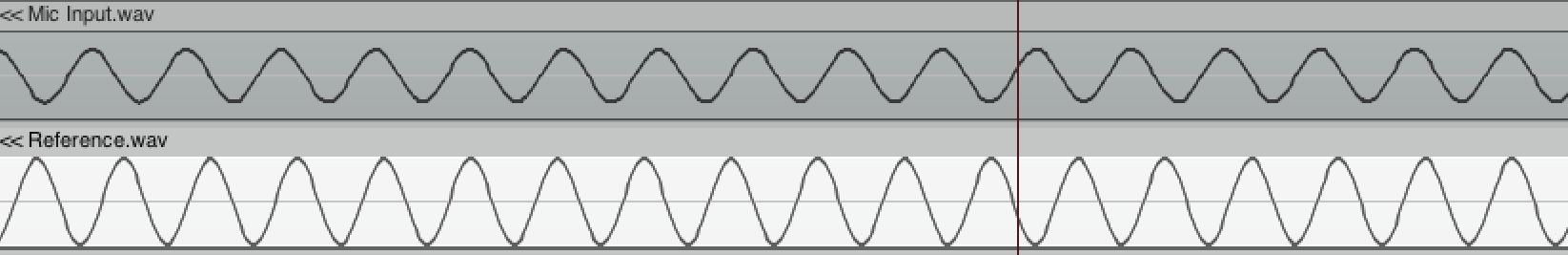

}如果我运行这个应用程序,控制台将打印出两个创建的wavs的url (一个是生成的正弦波,另一个是记录的麦克风输入)。如果我在daw中检查这些,我会得到以下信息。你可以看到这两个正弦波不同步。这使我相信样本率是不同的,但是输出到控制台的格式显示它们并没有不同。

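Originally, the inputNode was connected directly to the micSinkNode; I then inserted an AVAudioMixerNode before the AVAudioSinkNode to try to convert the format.

The goal is to be able to use hardware running at any sampleRate under its own settings, and to save the samples in the app's preferred "native settings". (That is, the app will do its number crunching at 48 kHz. I would like to be able to use 96k hardware, and different channel counts as well.)

Can anyone suggest why this is not working the way it should?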

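Stack Overflow user

Posted on 2020-03-24 17:20:33

AVAudioSinkNode does not support format conversion.

It must handle data in the hardware input format.

Reference: the WWDC 2019 session, at about 3 minutes 55 seconds, here: https://developer.apple.com/videos/play/wwdc2019/510/

I am currently struggling to set a non-default sample rate on a device (I assume the hardware supports it, since I pick it from the list of available sample rates), but even with such a non-default sample rate the sink simply stops working altogether (no crash, it just never gets called).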
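To illustrate why this matters for the files in the question, here is a small arithmetic sketch. It assumes (this is not confirmed by the question) that the sink received samples at the hardware rate while the WAV file was labelled with `globalSampleRate`. The function name `apparentFrequency` and the example rates are hypothetical, chosen only for illustration.

```swift
import Foundation

/// Frequency a tone appears to have when samples captured at `capturedRate`
/// are stored in a file whose header claims `labelledRate`.
/// (Hypothetical helper, not part of AVFoundation.)
func apparentFrequency(of toneHz: Double, capturedRate: Double, labelledRate: Double) -> Double {
    // Each captured sample is replayed labelledRate / capturedRate times
    // faster (or slower), which scales every frequency by the same factor.
    return toneHz * labelledRate / capturedRate
}

// Assumed scenario: 44.1 kHz hardware, file labelled 48 kHz.
let shifted = apparentFrequency(of: 1000, capturedRate: 44_100, labelledRate: 48_000)
print(String(format: "%.1f Hz", shifted))

// Assumed scenario: 96 kHz hardware, file labelled 48 kHz.
let halved = apparentFrequency(of: 1000, capturedRate: 96_000, labelledRate: 48_000)
print(String(format: "%.1f Hz", halved))
```

Under that assumption the recorded tone would no longer line up cycle for cycle with the 1 kHz reference, which would look like two sine waves drifting apart in a DAW.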
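Since the sink itself cannot convert formats, one possible workaround is to capture in the hardware format and convert afterwards. The sketch below uses `AVAudioConverter`, which is my suggestion rather than something from the WWDC session. The helper name `convert(_:to:)`, the 96 kHz "hardware" format and the 16-frame headroom are assumptions for illustration, and the conversion is meant to run after capture, not inside the real-time render callback.

```swift
import AVFoundation

/// Converts `input` to `targetFormat` with AVAudioConverter.
/// Returns nil if the converter cannot be created or the conversion fails.
/// (Hypothetical helper for illustration.)
func convert(_ input: AVAudioPCMBuffer, to targetFormat: AVAudioFormat) -> AVAudioPCMBuffer? {
    guard let converter = AVAudioConverter(from: input.format, to: targetFormat) else { return nil }

    // Size the output for the rate ratio, plus a little headroom (assumed value).
    let ratio = targetFormat.sampleRate / input.format.sampleRate
    let capacity = AVAudioFrameCount((Double(input.frameLength) * ratio).rounded(.up)) + 16
    guard let output = AVAudioPCMBuffer(pcmFormat: targetFormat, frameCapacity: capacity) else { return nil }

    var supplied = false
    var conversionError: NSError?
    let status = converter.convert(to: output, error: &conversionError) { _, inputStatus in
        // Hand the whole input buffer over once, then signal end of stream.
        if supplied {
            inputStatus.pointee = .endOfStream
            return nil
        }
        supplied = true
        inputStatus.pointee = .haveData
        return input
    }
    return (status == .error || conversionError != nil) ? nil : output
}

// Usage: a 1 kHz sine at an assumed 96 kHz hardware rate, converted to 48 kHz mono.
let hardwareFormat = AVAudioFormat(commonFormat: .pcmFormatFloat32, sampleRate: 96_000,
                                   channels: 1, interleaved: false)!
let appFormat = AVAudioFormat(commonFormat: .pcmFormatFloat32, sampleRate: 48_000,
                              channels: 1, interleaved: false)!
let source = AVAudioPCMBuffer(pcmFormat: hardwareFormat, frameCapacity: 9_600)!
source.frameLength = 9_600
for i in 0..<Int(source.frameLength) {
    source.floatChannelData![0][i] = sin(2 * Float.pi * 1_000 * Float(i) / 96_000)
}
let converted = convert(source, to: appFormat)
if let converted = converted {
    print("converted \(source.frameLength) frames to \(converted.frameLength) frames")
}
```

In the question's setup this would mean connecting the inputNode to the sink using the input format, storing the raw samples, and converting them to the app's 48 kHz format once recording has finished. Whether that resolves the drift on a given device is untested here.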

https://stackoverflow.com/questions/58781271
