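Getting a single-line representation for multiple close-by lines clustered together in OpenCV

I detected lines in an image and drew them in a separate image file using the HoughLinesP method in OpenCV C++. Below is a part of the resulting image. There are actually hundreds of small, thin lines that together form one big line.

But I want just a few single lines that represent all of those lines. Lines that are close together should be merged into a single line. For example, the set of lines in the first image should be represented by just 3 separate lines, as in the second image.

The expected output is shown in the second image. How can I accomplish this?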

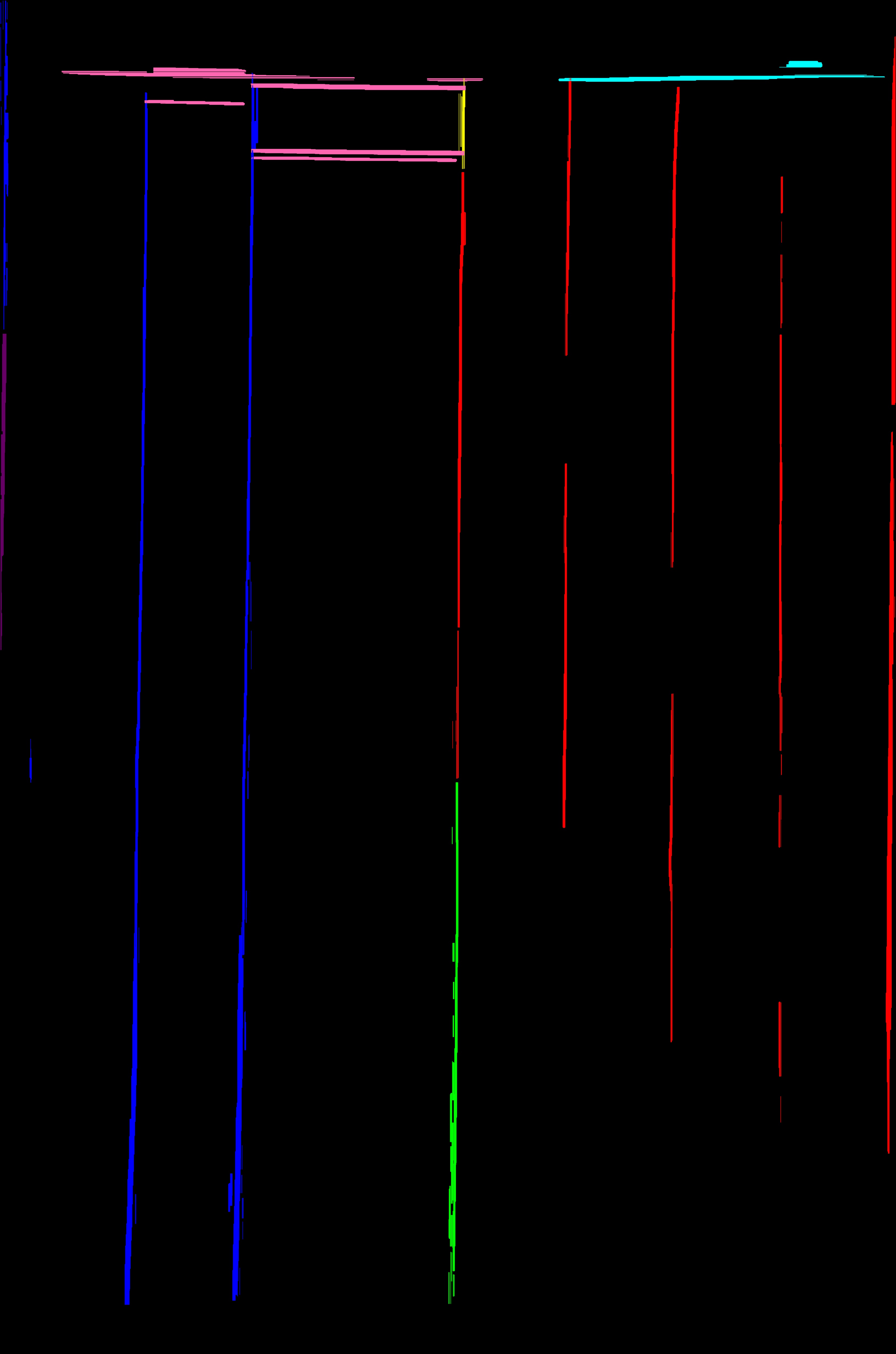

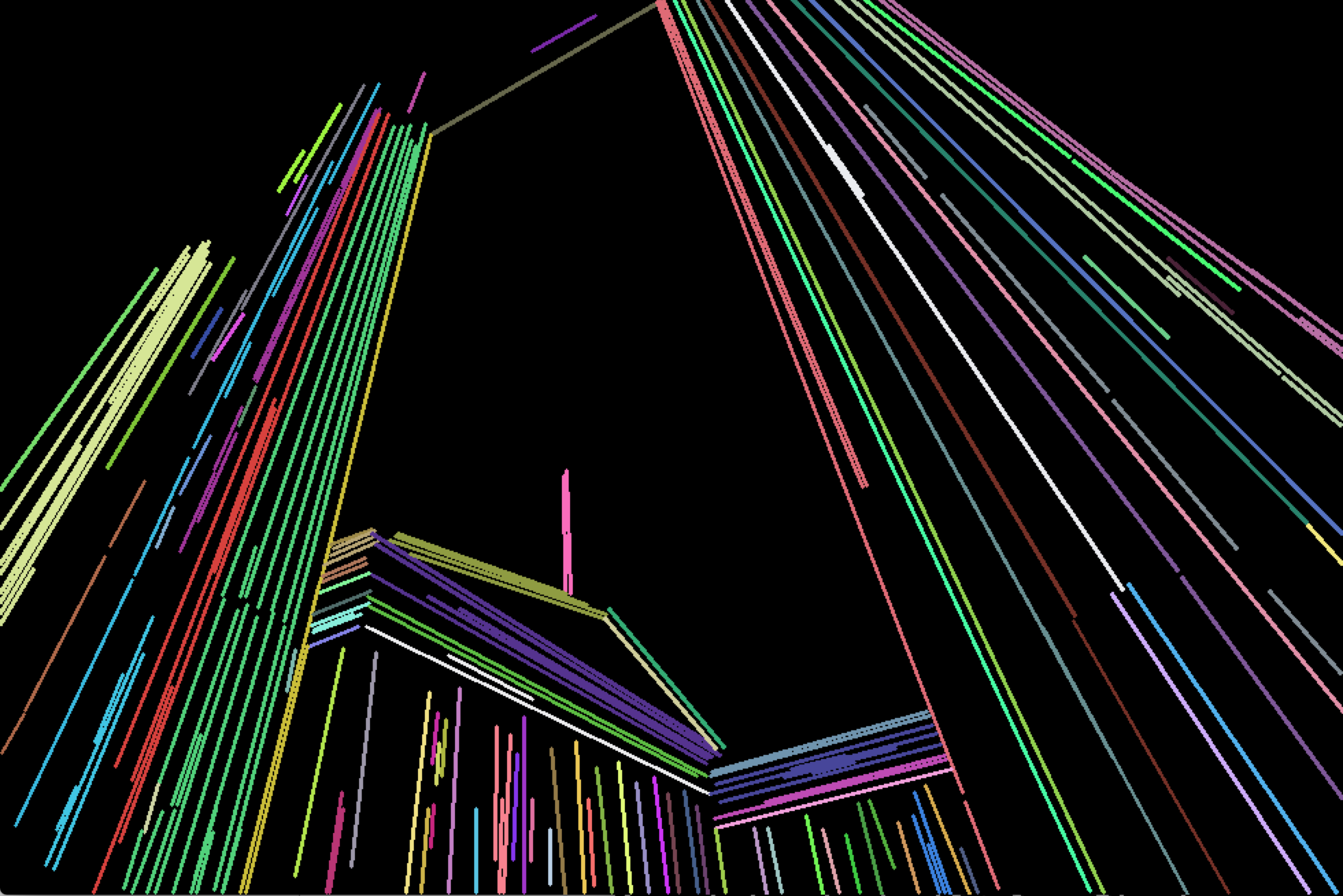

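Progress so far, resulting from akarsakov's answer:

(The resulting classes of lines are drawn in different colors.) Note that this result is for the original complete image I am working on, not the sample section I used in the question.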

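6 Answers

Stack Overflow user

Posted on 2015-06-17 23:18:56

If you don't know the number of lines in the image, you can use the cv::partition function to split the lines into equivalence groups.

I suggest the following procedure:

- Split your lines using cv::partition. You need to specify a good predicate function. It really depends on the lines you extract from the image, but I think it should check the following conditions:

  - The angle between the lines should be quite small (less than 3 degrees, for example). Use the [dot product](https://en.wikipedia.org/wiki/Dot_product) to calculate the cosine of the angle.

  - The distance between the centers of the segments should be less than half of the maximum length of the two segments.

For example, it can be implemented as follows: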

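```cpp
// Returns true when two segments (x1, y1, x2, y2) should end up in the same group.
bool isEqual(const Vec4i& _l1, const Vec4i& _l2)
{
    Vec4i l1(_l1), l2(_l2);

    float length1 = sqrtf((l1[2] - l1[0])*(l1[2] - l1[0]) + (l1[3] - l1[1])*(l1[3] - l1[1]));
    float length2 = sqrtf((l2[2] - l2[0])*(l2[2] - l2[0]) + (l2[3] - l2[1])*(l2[3] - l2[1]));

    // Dot product of the two direction vectors
    float product = (l1[2] - l1[0])*(l2[2] - l2[0]) + (l1[3] - l1[1])*(l2[3] - l2[1]);

    // Reject the pair if the angle exceeds CV_PI / 30 (6 degrees); tighten this for a stricter test
    if (fabs(product / (length1 * length2)) < cos(CV_PI / 30))
        return false;

    // Midpoints of both segments
    float mx1 = (l1[0] + l1[2]) * 0.5f;
    float mx2 = (l2[0] + l2[2]) * 0.5f;

    float my1 = (l1[1] + l1[3]) * 0.5f;
    float my2 = (l2[1] + l2[3]) * 0.5f;
    float dist = sqrtf((mx1 - mx2)*(mx1 - mx2) + (my1 - my2)*(my1 - my2));

    // Reject the pair if the midpoints are further apart than half of the longer segment
    if (dist > std::max(length1, length2) * 0.5f)
        return false;

    return true;
}
```

I guess you have your lines in `vector<Vec4i> lines;`. Next, you should call cv::partition as follows:

```cpp
vector<Vec4i> lines;
std::vector<int> labels;
int numberOfLines = cv::partition(lines, labels, isEqual);
```

You need to call cv::partition only once, and it will cluster all the lines. The vector labels will store, for each line, the label of the cluster it belongs to. See the documentation for cv::partition.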

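- After you have got all the groups of lines, you should merge them. I would suggest calculating the average angle of all lines in a group and estimating the "border" points. For example, if the angle is zero (i.e. all lines are almost horizontal), these would be the left-most and right-most points. It then only remains to draw a line between these points.

I noticed that all lines in your example are horizontal or vertical. In that case you can calculate the point that is the average of all segment centers, together with the "border" points, and then just draw a horizontal or vertical line through the center point, limited by the "border" points.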
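The merging step described above could be sketched roughly as follows. This is only an illustration under assumptions that are not part of the answer: segments are stored as plain `std::array<double, 4>` (the same x1, y1, x2, y2 layout as cv::Vec4i), the hypothetical helper `mergeGroup` sums direction vectors (so longer segments weigh more) instead of averaging raw angles, and the "border" points are taken as the extreme projections of all endpoints onto that direction.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <limits>
#include <vector>

// A segment as {x1, y1, x2, y2}, mirroring the layout of cv::Vec4i.
using Segment = std::array<double, 4>;

// Merge one group of roughly parallel segments into a single segment (sketch).
Segment mergeGroup(const std::vector<Segment>& group) {
    assert(!group.empty());

    double dx = 0, dy = 0;  // accumulated direction
    double cx = 0, cy = 0;  // accumulated endpoint coordinates
    for (const Segment& s : group) {
        double vx = s[2] - s[0], vy = s[3] - s[1];
        // Flip segments that point the opposite way so directions do not cancel out.
        if (vx * dx + vy * dy < 0) { vx = -vx; vy = -vy; }
        dx += vx; dy += vy;
        cx += s[0] + s[2];
        cy += s[1] + s[3];
    }
    const double norm = std::hypot(dx, dy);
    assert(norm > 0);  // the group must contain at least one non-degenerate segment
    dx /= norm; dy /= norm;
    cx /= 2.0 * group.size();  // mean of all endpoints = mean of all segment centers
    cy /= 2.0 * group.size();

    // Project every endpoint onto the mean direction and keep the extremes.
    double tMin = std::numeric_limits<double>::infinity();
    double tMax = -tMin;
    for (const Segment& s : group) {
        for (int k = 0; k < 4; k += 2) {
            const double t = (s[k] - cx) * dx + (s[k + 1] - cy) * dy;
            if (t < tMin) tMin = t;
            if (t > tMax) tMax = t;
        }
    }
    return Segment{cx + tMin * dx, cy + tMin * dy, cx + tMax * dx, cy + tMax * dy};
}
```

For a group of nearly horizontal segments, the result runs from the left-most to the right-most endpoint at the average height of the group, which matches the horizontal case described in the answer.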

Please note that cv::partition takes O(N^2) time, so if you process a huge number of lines it may take a long time.

I hope this helps. I have used this approach for a similar task.

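Stack Overflow user

Posted on 2015-06-17 22:49:21

First off, I want to note that your original image is at a slight angle, so your expected output seems just a bit off to me. I'm assuming you are okay with lines that are not 100% vertical in your output, because they are slightly off in your input.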

```cpp
Mat image;
Mat mask = image > 125;  // Convert to binary image (assumes a grayscale input)

// Combine similar lines
int size = 3;
Mat element = getStructuringElement( MORPH_ELLIPSE, Size( 2*size + 1, 2*size+1 ), Point( size, size ) );
morphologyEx( mask, mask, MORPH_CLOSE, element );
```

So far, this yields an image in which the lines are not at 90-degree angles, because the original image is not either.
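As a rough illustration of why MORPH_CLOSE merges nearby lines, here is closing (dilation followed by erosion) applied to a single row of binary pixels. The helper names `dilate1D`, `erode1D` and `close1D` are made up for this sketch, a flat window of radius `r` stands in for the elliptical structuring element, and pixels outside the row are simply ignored (an assumption about border handling).

```cpp
#include <algorithm>
#include <vector>

// Dilation: a pixel becomes 1 if any pixel within radius r is 1.
std::vector<int> dilate1D(const std::vector<int>& row, int r) {
    const int n = static_cast<int>(row.size());
    std::vector<int> out(n, 0);
    for (int i = 0; i < n; ++i) {
        for (int k = std::max(0, i - r); k <= std::min(n - 1, i + r); ++k) {
            if (row[k]) { out[i] = 1; break; }
        }
    }
    return out;
}

// Erosion: a pixel stays 1 only if every pixel within radius r is 1.
std::vector<int> erode1D(const std::vector<int>& row, int r) {
    const int n = static_cast<int>(row.size());
    std::vector<int> out(n, 1);
    for (int i = 0; i < n; ++i) {
        for (int k = std::max(0, i - r); k <= std::min(n - 1, i + r); ++k) {
            if (!row[k]) { out[i] = 0; break; }
        }
    }
    return out;
}

// Closing: dilation fills small gaps, erosion then restores the original extent.
std::vector<int> close1D(const std::vector<int>& row, int r) {
    return erode1D(dilate1D(row, r), r);
}
```

With `r = 1`, a one-pixel gap between two runs of set pixels is filled, while a four-pixel gap is left open. In two dimensions the same effect fuses thin lines that lie closer together than the structuring element is wide.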

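You can also optionally close the gaps between the lines with:

```cpp
Mat out = Mat::zeros(mask.size(), mask.type());

vector<Vec4i> lines;
// Angular resolution of CV_PI/2, minimum line length 50, maximum gap 75
HoughLinesP(mask, lines, 1, CV_PI/2, 50, 50, 75);
for( size_t i = 0; i < lines.size(); i++ )
{
    Vec4i l = lines[i];
    // CV_AA is named LINE_AA in OpenCV 3 and later
    line( out, Point(l[0], l[1]), Point(l[2], l[3]), Scalar(255), 5, CV_AA);
}
```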

If these lines are too thick, I have had success thinning them out with:

```cpp
size = 15;
Mat eroded;
cv::Mat erodeElement = getStructuringElement( MORPH_ELLIPSE, cv::Size( size, size ) );
erode( mask, eroded, erodeElement );
```

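Stack Overflow user

Posted on 2018-07-01 08:02:09

Here is a refinement built upon @akarsakov's answer. A basic problem with the condition

"Distance between centers of segments should be less than half of maximum length of two segments."

is that long parallel lines that are visually far apart may end up in the same equivalence class (as demonstrated in the OP's edit).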
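A small numeric check makes the problem concrete. The sketch below re-implements only the midpoint condition in plain C++ (`Seg`, `segLength` and `midpointsClose` are hypothetical names, not part of either answer): two parallel horizontal segments of length 1000 that lie 400 pixels apart pass the test, because 400 is less than half of 1000, while two short segments the same distance apart fail it.

```cpp
#include <algorithm>
#include <cmath>

struct Seg { double x1, y1, x2, y2; };

double segLength(const Seg& s) {
    return std::hypot(s.x2 - s.x1, s.y2 - s.y1);
}

// The midpoint condition alone: centers no further apart than half of the longer segment.
bool midpointsClose(const Seg& a, const Seg& b) {
    const double dist = std::hypot((a.x1 + a.x2) / 2 - (b.x1 + b.x2) / 2,
                                   (a.y1 + a.y2) / 2 - (b.y1 + b.y2) / 2);
    return dist <= 0.5 * std::max(segLength(a), segLength(b));
}
```

Because the threshold grows with segment length, the longer the lines are, the further apart they can be and still be grouped.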

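Therefore, this is the approach that I found to work reasonably well for me:

- Construct a window (bounding rectangle) around line1.

- Treat line2 as equivalent if its angle is close enough to line1's and at least one point of line2 is inside line1's bounding rectangle.

Often a long linear feature in the image that is quite weak ends up being recognized (by HoughP or LSD) as a set of line segments with considerable gaps between them. To alleviate this, the bounding rectangle is constructed around the line extended in both directions, where the extension is defined as a fraction of the original line's length.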
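For the angle comparison, one possible formulation (an assumption of this sketch, not the code used in this answer) is to take the direction angle of each segment with atan2 and fold the difference into the range 0 to 90 degrees, so that vertical segments and segments listed with their endpoints in opposite order need no special handling. `Seg` and `orientationDiffDeg` are hypothetical names.

```cpp
#include <cmath>

struct Seg { double x1, y1, x2, y2; };

// Difference in orientation between two segments, in degrees, within [0, 90].
double orientationDiffDeg(const Seg& a, const Seg& b) {
    const double pi = std::acos(-1.0);
    double d = std::atan2(a.y2 - a.y1, a.x2 - a.x1)
             - std::atan2(b.y2 - b.y1, b.x2 - b.x1);
    d = std::fmod(std::fabs(d), pi);   // undirected lines: angles are equivalent modulo pi
    if (d > pi / 2) d = pi - d;        // fold into [0, pi/2]
    return d * 180.0 / pi;
}
```

Two very steep segments that lean slightly in opposite directions come out as nearly parallel here, whereas comparing the arctangents of their slopes directly would report a difference close to 180 degrees.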

```cpp
bool extendedBoundingRectangleLineEquivalence(const Vec4i& _l1, const Vec4i& _l2, float extensionLengthFraction, float maxAngleDiff, float boundingRectangleThickness){

    Vec4i l1(_l1), l2(_l2);
    // extend lines by a fraction of their length
    float len1 = sqrtf((l1[2] - l1[0])*(l1[2] - l1[0]) + (l1[3] - l1[1])*(l1[3] - l1[1]));
    float len2 = sqrtf((l2[2] - l2[0])*(l2[2] - l2[0]) + (l2[3] - l2[1])*(l2[3] - l2[1]));
    Vec4i el1 = extendedLine(l1, len1 * extensionLengthFraction);
    Vec4i el2 = extendedLine(l2, len2 * extensionLengthFraction);

    // reject the lines that have a wide difference in angles (maxAngleDiff is in degrees)
    float a1 = atan(linearParameters(el1)[0]);
    float a2 = atan(linearParameters(el2)[0]);
    if(fabs(a1 - a2) > maxAngleDiff * CV_PI / 180.0){
        return false;
    }

    // calculate window around extended line
    // at least one point needs to be inside the extended bounding rectangle of the other line
    std::vector<Point2i> lineBoundingContour = boundingRectangleContour(el1, boundingRectangleThickness/2);
    return
        pointPolygonTest(lineBoundingContour, cv::Point(el2[0], el2[1]), false) == 1 ||
        pointPolygonTest(lineBoundingContour, cv::Point(el2[2], el2[3]), false) == 1;
}
```

where linearParameters, extendedLine and boundingRectangleContour (which must be declared before the function above) are as follows:

```cpp
// Slope m and intercept c of the line y = m*x + c through both endpoints.
// Note: for a vertical segment (x1 == x2) the system is singular, so no valid slope exists.
Vec2d linearParameters(Vec4i line){
    Mat a = (Mat_<double>(2, 2) <<
                line[0], 1,
                line[2], 1);
    Mat y = (Mat_<double>(2, 1) <<
                line[1],
                line[3]);
    Vec2d mc; solve(a, y, mc);
    return mc;
}

// Extends the segment by d at both ends, along its own direction.
Vec4i extendedLine(Vec4i line, double d){
    // oriented left-to-right
    Vec4d _line = line[2] - line[0] < 0 ? Vec4d(line[2], line[3], line[0], line[1]) : Vec4d(line[0], line[1], line[2], line[3]);
    double m = linearParameters(_line)[0];
    // solution of pythagorean theorem and m = yd/xd
    double xd = sqrt(d * d / (m * m + 1));
    double yd = xd * m;
    return Vec4d(_line[0] - xd, _line[1] - yd , _line[2] + xd, _line[3] + yd);
}

std::vector<Point2i> boundingRectangleContour(Vec4i line, float d){
    // finds coordinates of perpendicular lines with length d in both line points
    // https://math.stackexchange.com/a/2043065/183923

    Vec2f mc = linearParameters(line);
    float m = mc[0];
    float factor = sqrtf(
        (d * d) / (1 + (1 / (m * m)))
    );

    float x3, y3, x4, y4, x5, y5, x6, y6;
    // special case (vertical perpendicular line) when -1/m -> -infinity
    if(m == 0){
        x3 = line[0]; y3 = line[1] + d;
        x4 = line[0]; y4 = line[1] - d;
        x5 = line[2]; y5 = line[3] + d;
        x6 = line[2]; y6 = line[3] - d;
    } else {
        // slope of perpendicular lines
        float m_per = - 1/m;

        // y1 = m_per * x1 + c_per
        float c_per1 = line[1] - m_per * line[0];
        float c_per2 = line[3] - m_per * line[2];

        // coordinates of perpendicular lines
        x3 = line[0] + factor; y3 = m_per * x3 + c_per1;
        x4 = line[0] - factor; y4 = m_per * x4 + c_per1;
        x5 = line[2] + factor; y5 = m_per * x5 + c_per2;
        x6 = line[2] - factor; y6 = m_per * x6 + c_per2;
    }

    // corners listed in contour order
    return std::vector<Point2i> {
        Point2i(x3, y3),
        Point2i(x4, y4),
        Point2i(x6, y6),
        Point2i(x5, y5)
    };
}
```

Call it with:

```cpp
std::vector<int> labels;
int equilavenceClassesCount = cv::partition(linesWithoutSmall, labels, [](const Vec4i l1, const Vec4i l2){
    return extendedBoundingRectangleLineEquivalence(
        l1, l2,
        // line extension length - as fraction of original line length
        0.2,
        // maximum allowed angle difference (degrees) for lines to be considered in same equivalence class
        2.0,
        // thickness of bounding rectangle around each line
        10);
});
```

Now, in order to reduce each equivalence class to a single line, we build a point cloud out of it and fit a line to it:

```cpp
// fit line to each equivalence class point cloud
std::vector<Vec4i> reducedLines = std::accumulate(pointClouds.begin(), pointClouds.end(), std::vector<Vec4i>{}, [](std::vector<Vec4i> target, const std::vector<Point2i>& _pointCloud){
    std::vector<Point2i> pointCloud = _pointCloud;

    // lineParams: [vx, vy, x0, y0]: (normalized vector, point on our contour)
    // (x,y) = (x0,y0) + t*(vx,vy), t -> (-inf; inf)
    Vec4f lineParams; fitLine(pointCloud, lineParams, DIST_L2, 0, 0.01, 0.01);

    // derive the bounding xs of point cloud
    decltype(pointCloud)::iterator minXP, maxXP;
    std::tie(minXP, maxXP) = std::minmax_element(pointCloud.begin(), pointCloud.end(), [](const Point2i& p1, const Point2i& p2){ return p1.x < p2.x; });

    // derive y coords of fitted line (the slope is undefined if the fit is exactly vertical, vx == 0)
    float m = lineParams[1] / lineParams[0];
    int y1 = ((minXP->x - lineParams[2]) * m) + lineParams[3];
    int y2 = ((maxXP->x - lineParams[2]) * m) + lineParams[3];

    target.push_back(Vec4i(minXP->x, y1, maxXP->x, y2));
    return target;
});
```

Demonstration:

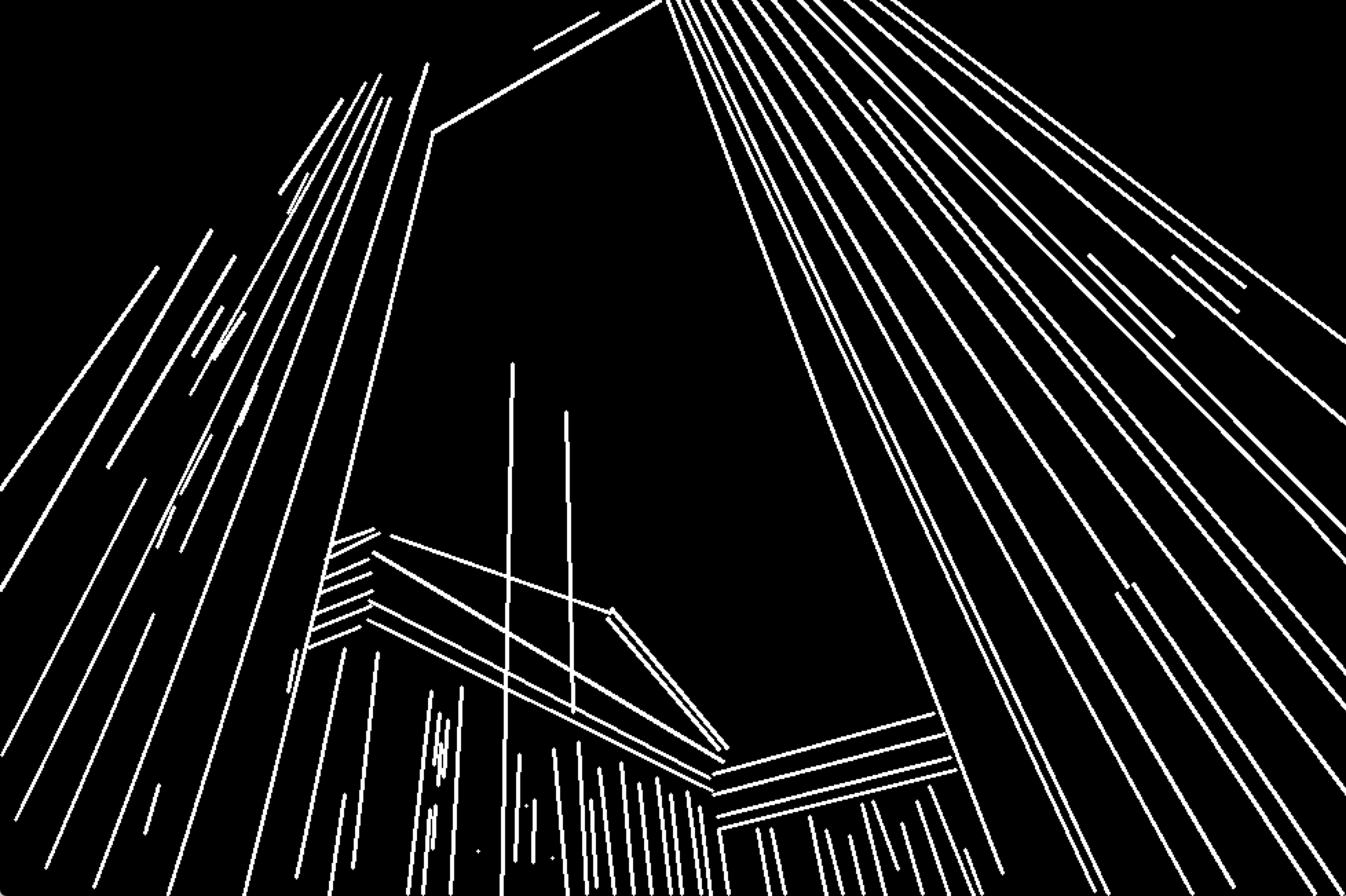

Detected partitioned lines (with small lines filtered out):

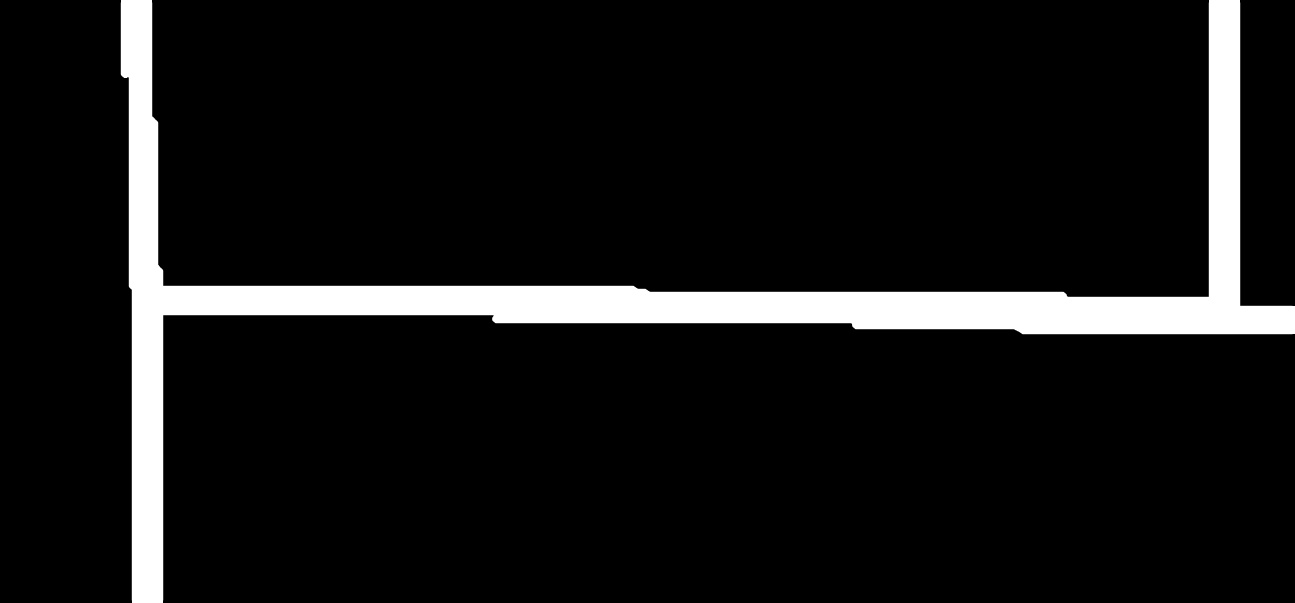

Reduced:

Demonstration code:

```cpp
#include <algorithm>
#include <iostream>
#include <iterator>
#include <numeric>
#include <tuple>
#include <vector>
#include <opencv2/opencv.hpp>

using namespace cv;

// linearParameters, extendedLine, boundingRectangleContour and
// extendedBoundingRectangleLineEquivalence are the functions listed earlier in this answer.

int main(int argc, const char* argv[]){

    if(argc < 2){
        std::cout << "img filepath should be present in args" << std::endl;
        return 1;
    }

    Mat image = imread(argv[1]);
    Mat smallerImage; resize(image, smallerImage, cv::Size(), 0.5, 0.5, INTER_CUBIC);
    Mat target = smallerImage.clone();

    namedWindow("Detected Lines", WINDOW_NORMAL);
    namedWindow("Reduced Lines", WINDOW_NORMAL);
    Mat detectedLinesImg = Mat::zeros(target.rows, target.cols, CV_8UC3);
    Mat reducedLinesImg = detectedLinesImg.clone();

    // detect lines in any reasonable way
    Mat grayscale; cvtColor(target, grayscale, COLOR_BGR2GRAY);
    Ptr<LineSegmentDetector> detector = createLineSegmentDetector(LSD_REFINE_NONE);
    std::vector<Vec4i> lines; detector->detect(grayscale, lines);

    // remove small lines
    std::vector<Vec4i> linesWithoutSmall;
    std::copy_if (lines.begin(), lines.end(), std::back_inserter(linesWithoutSmall), [](Vec4f line){
        float length = sqrtf((line[2] - line[0]) * (line[2] - line[0])
                           + (line[3] - line[1]) * (line[3] - line[1]));
        return length > 30;
    });

    std::cout << "Detected: " << linesWithoutSmall.size() << std::endl;

    // partition via our partitioning function
    std::vector<int> labels;
    int equilavenceClassesCount = cv::partition(linesWithoutSmall, labels, [](const Vec4i l1, const Vec4i l2){
        return extendedBoundingRectangleLineEquivalence(
            l1, l2,
            // line extension length - as fraction of original line length
            0.2,
            // maximum allowed angle difference (degrees) for lines to be considered in same equivalence class
            2.0,
            // thickness of bounding rectangle around each line
            10);
    });

    std::cout << "Equivalence classes: " << equilavenceClassesCount << std::endl;

    // grab a random colour for each equivalence class
    RNG rng(215526);
    std::vector<Scalar> colors(equilavenceClassesCount);
    for (int i = 0; i < equilavenceClassesCount; i++){
        colors[i] = Scalar(rng.uniform(30,255), rng.uniform(30, 255), rng.uniform(30, 255));
    }

    // draw original detected lines
    for (size_t i = 0; i < linesWithoutSmall.size(); i++){
        Vec4i& detectedLine = linesWithoutSmall[i];
        line(detectedLinesImg,
             cv::Point(detectedLine[0], detectedLine[1]),
             cv::Point(detectedLine[2], detectedLine[3]), colors[labels[i]], 2);
    }

    // build point clouds out of each equivalence class
    std::vector<std::vector<Point2i>> pointClouds(equilavenceClassesCount);
    for (size_t i = 0; i < linesWithoutSmall.size(); i++){
        Vec4i& detectedLine = linesWithoutSmall[i];
        pointClouds[labels[i]].push_back(Point2i(detectedLine[0], detectedLine[1]));
        pointClouds[labels[i]].push_back(Point2i(detectedLine[2], detectedLine[3]));
    }

    // fit line to each equivalence class point cloud
    std::vector<Vec4i> reducedLines = std::accumulate(pointClouds.begin(), pointClouds.end(), std::vector<Vec4i>{}, [](std::vector<Vec4i> target, const std::vector<Point2i>& _pointCloud){
        std::vector<Point2i> pointCloud = _pointCloud;

        // lineParams: [vx, vy, x0, y0]: (normalized vector, point on our contour)
        // (x,y) = (x0,y0) + t*(vx,vy), t -> (-inf; inf)
        Vec4f lineParams; fitLine(pointCloud, lineParams, DIST_L2, 0, 0.01, 0.01);

        // derive the bounding xs of point cloud
        decltype(pointCloud)::iterator minXP, maxXP;
        std::tie(minXP, maxXP) = std::minmax_element(pointCloud.begin(), pointCloud.end(), [](const Point2i& p1, const Point2i& p2){ return p1.x < p2.x; });

        // derive y coords of fitted line (the slope is undefined if the fit is exactly vertical, vx == 0)
        float m = lineParams[1] / lineParams[0];
        int y1 = ((minXP->x - lineParams[2]) * m) + lineParams[3];
        int y2 = ((maxXP->x - lineParams[2]) * m) + lineParams[3];

        target.push_back(Vec4i(minXP->x, y1, maxXP->x, y2));
        return target;
    });

    for(Vec4i reduced: reducedLines){
        line(reducedLinesImg, Point(reduced[0], reduced[1]), Point(reduced[2], reduced[3]), Scalar(255, 255, 255), 2);
    }

    imshow("Detected Lines", detectedLinesImg);
    imshow("Reduced Lines", reducedLinesImg);
    waitKey();

    return 0;
}
```

https://stackoverflow.com/questions/30746327
