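TDSQL for MySQL centralized database (hereinafter TDSQL) now supports intra-city dual-center active-active deployment. Its main features are:

Intra-city dual-center deployment

Both centers writable: if your servers are deployed in different subnets in the two centers, the servers in each center can connect to the database and write data.

Automatic failover and recovery

A single access IP for both centers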

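However, intra-city dual-center active-active capability at the database layer alone does not deliver disaster recovery at the business-system level. In practice, switching a single system or module to the intra-city disaster recovery center is easy; the hard part of a dual-center setup is the complex internal dependencies and configuration of enterprise business systems.

Building an active-active business system therefore requires that the dual-center model be the baseline throughout system design, use, management, and upgrades: both centers are used in real time and their configurations are kept interoperable. Only then can the business resume quickly after a failure with little or no modification. This is also the design goal of Tencent Cloud TDSQL's intra-city dual-center active-active capability: business systems in both centers can read from and write to the database normally over their local network, with strong data consistency guaranteed.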

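Design standards

The design standards for Tencent Cloud TDSQL active-active follow GB/T 20988-2007, "Information security technology: Disaster recovery specifications for information systems". Because the database is a single module, the targets are:

RTO ≤ 60 seconds

RPO ≤ 5 seconds

Failover switch time ≤ 5 seconds

Failure detection time ≤ 30 seconds

This means that, including failure detection, the time from the occurrence of a failure to a fully completed switch is about 40 seconds.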
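The timing targets above can be checked with simple arithmetic. The sketch below adds the two upper bounds and compares the result with the RTO target; reading the stated figure of about 40 seconds as the 35-second sum plus a small margin is an assumption, not something the source states.

```python
# Targets quoted in the design standards above, in seconds.
RTO_LIMIT = 60
DETECTION_MAX = 30
SWITCH_MAX = 5


def worst_case_failover(detection: float = DETECTION_MAX,
                        switch: float = SWITCH_MAX) -> float:
    """Upper bound on the time from failure to completed switch."""
    return detection + switch


budget = worst_case_failover()
headroom = RTO_LIMIT - budget  # time left for the business to reconnect
print(f"worst-case failover: {budget}s, headroom under RTO: {headroom}s")
```

The headroom matters because business-side reconnection also has to fit inside the RTO.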

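Risk warning: when testing in a real environment, make sure the business system has an automatic database reconnection mechanism. Business systems usually consist of multiple modules, each of which may depend on multiple data sources, so the more complex the system, the longer recovery takes.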
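A minimal sketch of such a reconnection mechanism is shown below. It is not TDSQL-specific: `connect_with_retry`, its parameters, and the use of `ConnectionError` are illustrative assumptions, and a real driver raises its own exception types, which would need to be caught instead.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def connect_with_retry(connect: Callable[[], T],
                       max_wait: float = 60.0,
                       base_delay: float = 0.5,
                       max_delay: float = 5.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `connect` until it succeeds or the total wait would exceed `max_wait`.

    `connect` is a hypothetical zero-argument factory that returns a
    connection or raises ConnectionError while the database is unreachable.
    """
    waited = 0.0
    delay = base_delay
    while True:
        try:
            return connect()
        except ConnectionError:
            # Give up once the next pause would exceed the overall budget.
            if waited + delay > max_wait:
                raise
            sleep(delay)
            waited += delay
            # Exponential backoff, capped so retries stay frequent enough
            # to notice a completed switch quickly.
            delay = min(delay * 2, max_delay)
```

The default `max_wait` of 60 seconds mirrors the RTO target; each module that holds its own data source would wrap its connection setup in the same way.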

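Support status

Currently supported

Instance editions:

Standard edition: one master and one slave (two nodes) / one master and two slaves (three nodes)

Finance custom edition: one master and one slave (two nodes) / one master and two slaves (three nodes)

Network requirement: VPC networks only

Supported regions:

Beijing (Beijing Zone 1, Beijing Zone 3)

Shanghai Finance (Finance Zone 1, Finance Zone 2)

Shenzhen Finance (Finance Zone 1, Finance Zone 2)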

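Pricing

Purchase and usage

If the master and slave availability zones are the same, the instance is deployed in a single availability zone.

If the master and slave availability zones differ, the instance is deployed in intra-city dual-center mode.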

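Note:

The master availability zone is the zone where your primary servers reside. To reduce access latency, place the database in the same VPC subnet as your primary servers whenever possible. The slave availability zone is the zone where the database slave node resides. With one master and two slaves (three nodes), two nodes are deployed in the master availability zone; with one master and one slave (two nodes), one node is deployed in the master availability zone.

If a Finance Cloud cage solution needs to support the intra-city dual-center strategy, an intra-city dual-center cage solution may need to be built first. For details, contact your sales representative or architect.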
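The node placement rule in the note above can be written out as a small function. This is only an illustration of the rule as stated; `node_placement` and the zone names are hypothetical, not part of any TDSQL API.

```python
from typing import Dict


def node_placement(total_nodes: int, master_zone: str, slave_zone: str) -> Dict[str, int]:
    """Return how many DB nodes land in each availability zone."""
    if total_nodes not in (2, 3):
        raise ValueError("only two-node and three-node instances are described")
    if master_zone == slave_zone:
        # Same zone for master and slave: single-zone deployment.
        return {master_zone: total_nodes}
    # Dual-center: three nodes put two in the master zone, two nodes put one.
    in_master_zone = 2 if total_nodes == 3 else 1
    return {master_zone: in_master_zone, slave_zone: total_nodes - in_master_zone}


print(node_placement(3, "zone-a", "zone-b"))
print(node_placement(2, "zone-a", "zone-b"))
```

In both dual-center layouts exactly one node sits in the slave availability zone, which is why losing that zone is equivalent to losing a single slave.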

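Viewing instance availability zone details

Master/slave switch

To switch the master node from one availability zone to another, click Master/Slave Switch. This is a high-risk operation and requires verification of the login account's IP address. The switch may cause a momentary database disconnection (≤ 1s), so make sure your business has a database reconnection mechanism. Frequent switching may cause business system exceptions or even data anomalies.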

Technical overview

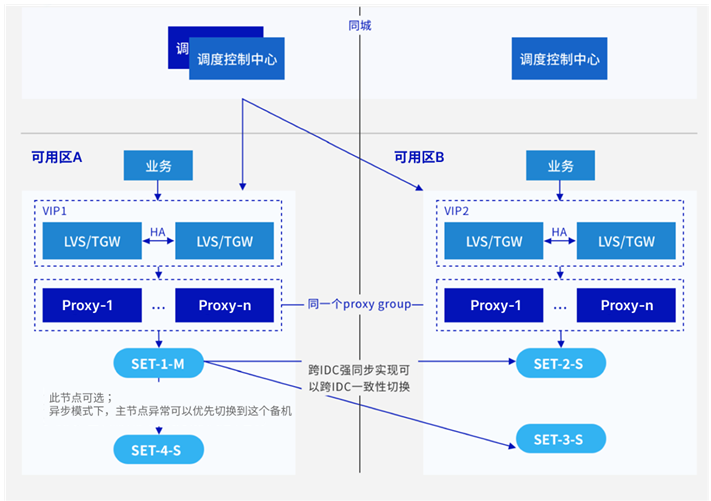

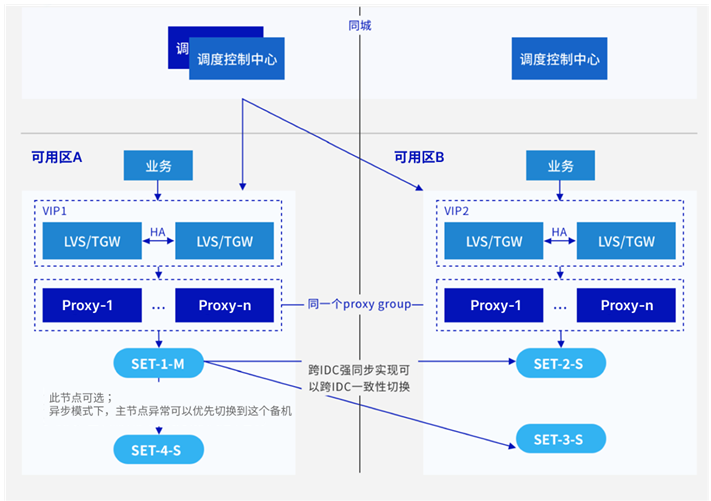

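By combining TDSQL's highly available master/slave architecture with the virtual IP drift capability of VPC availability zones, both centers can read and write at the same time. The architecture has the following characteristics:

A Proxy module is co-deployed in front of every TDSQL DB node; the Proxy routes data requests to the corresponding DB node.

A cross-region VPC gateway that supports virtual IP drift is deployed in front of the Proxy modules.

If the Master and its Proxy both fail, the cluster automatically elects the best Slave and promotes it to the new Master, and the system notifies the VPC to update the mapping between the virtual IP and the physical IP. The business only sees some write connections dropped.

If the Master fails but the Proxy is healthy, the cluster automatically elects the best Slave and promotes it to the new Master. The Proxy blocks requests until the master/slave switch completes, so the business only sees some requests time out.

If a Slave fails (whether or not its Proxy has failed) and read/write separation is in use, requests are handled according to the preconfigured read-only policy of the read-only account (three policies are available).

If availability zone A fails completely while the VPC and the database in availability zone B remain alive, the slave2 node is automatically promoted to Master, its read/write policy is adjusted according to the strong synchronization policy, and the VPC network IP drifts to availability zone B. The cluster then keeps retrying to recover the nodes in availability zone A; if they cannot be recovered within 30 minutes, at least one Slave node is automatically rebuilt in availability zone B. Because of IP drift, the business does not need to change its database configuration.

If availability zone B fails completely, the TDSQL cluster has effectively lost a Slave node, and the failure is handled in the same way as the Slave failure case (the third failure scenario above).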
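The failure scenarios above can be summarized as a lookup from scenario to the actions the text describes. This is a reading aid only, not TDSQL's actual control logic: the `Scenario` names, the `actions` function, and the assumption that the Master runs in availability zone A are all illustrative.

```python
from enum import Enum
from typing import List


class Scenario(Enum):
    MASTER_AND_PROXY_DOWN = 1
    MASTER_DOWN_PROXY_UP = 2
    SLAVE_DOWN = 3
    ZONE_A_DOWN = 4   # assumed to be the zone hosting the Master
    ZONE_B_DOWN = 5


REBUILD_AFTER_MINUTES = 30  # threshold quoted in the text

_ACTIONS = {
    Scenario.MASTER_AND_PROXY_DOWN: [
        "promote best Slave to Master",
        "remap virtual IP to new physical IP",
    ],
    Scenario.MASTER_DOWN_PROXY_UP: [
        "Proxy blocks requests until the switch completes",
        "promote best Slave to Master",
    ],
    Scenario.SLAVE_DOWN: [
        "apply the read-only account's read-only policy",
    ],
    Scenario.ZONE_A_DOWN: [
        "promote slave2 in zone B to Master",
        "adjust read/write policy per strong synchronization policy",
        "drift virtual IP to zone B",
        "retry recovery of zone A nodes",
    ],
}


def actions(scenario: Scenario) -> List[str]:
    """Return the described actions; a zone B outage is treated as a Slave failure."""
    if scenario is Scenario.ZONE_B_DOWN:
        scenario = Scenario.SLAVE_DOWN
    return list(_ACTIONS[scenario])


def should_rebuild_slave_in_zone_b(minutes_unrecovered: float) -> bool:
    """True once zone A has stayed unrecovered for more than 30 minutes."""
    return minutes_unrecovered > REBUILD_AFTER_MINUTES
```

Only the first and fourth scenarios involve moving the virtual IP; in the others the access address keeps pointing at a healthy Proxy.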

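FAQs

Does intra-city dual-center deployment reduce performance compared with a single center?

Under strong synchronous replication, cross-center latency is slightly higher than latency between devices in the same data center, so SQL response performance is theoretically about 5% lower.

Can the Master node switch from the master availability zone to the slave availability zone?

Yes. If this does not affect your business, no action is needed. If you are concerned about the impact, you can switch back during off-peak hours with the master/slave switch feature in the console.

How do I know whether the database cluster has performed a master/slave switch?

What if some read/write requests go to the slave availability zone and network latency degrades performance, but I do not want to give up intra-city dual-center deployment?

How do I move from a single-center architecture to an intra-city dual-center architecture?

First, confirm that your region supports the intra-city dual-center solution; it is currently available in three regions: Beijing, Shanghai Finance, and Shenzhen Finance. Next, submit a ticket stating the account information, the instance ID, the two availability zones you plan to use, and a suggested time for the maintenance operation. Finally, Tencent Cloud staff review the request (the target must already support dual availability zones). If the request is approved, you can proceed as needed; otherwise it is rejected.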