功能说明

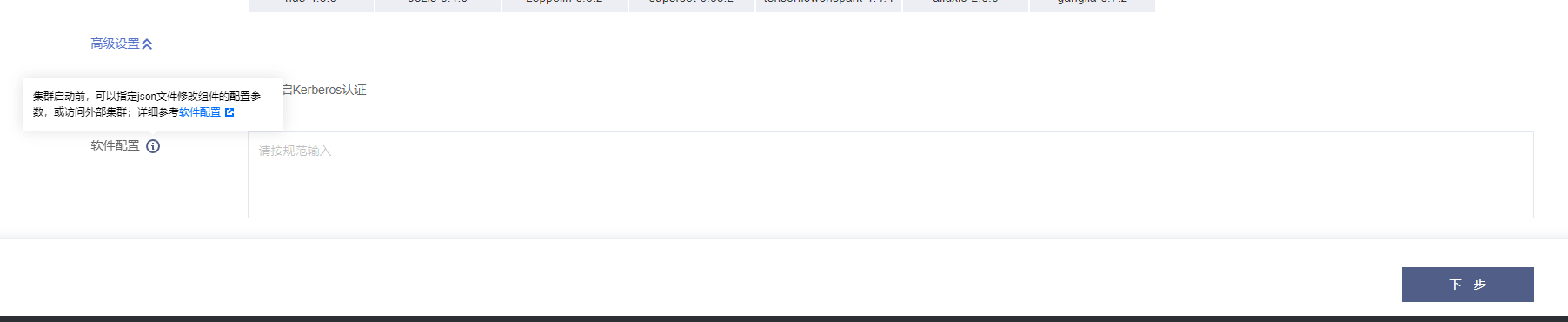

软件配置支持您创建集群时自定义 hdfs、yarn、hive 等组件的配置。

自定义软件配置

Hadoop、Hive 等软件含有大量配置,通过软件配置功能您可以在新建集群的过程中自主配置组件参数。配置过程需要您按要求提供相应的 json 文件,文件可以由您自定义,也可以将存量集群的软件配置参数导出,然后快速新建一个集群。导出软件配置参数,详情请参见 导出软件配置。

json 文件示例及说明:

[{"serviceName": "HDFS","classification": "hdfs-site.xml","serviceVersion": "2.8.4","properties": {"dfs.blocksize": "67108864","dfs.client.slow.io.warning.threshold.ms": "900000","output.replace-datanode-on-failure": "false"}},{"serviceName": "YARN","classification": "yarn-site.xml","serviceVersion": "2.8.4","properties": {"yarn.app.mapreduce.am.staging-dir": "/emr/hadoop-yarn/staging","yarn.log-aggregation.retain-check-interval-seconds": "604800","yarn.scheduler.minimum-allocation-vcores": "1"}},{"serviceName": "YARN","classification": "capacity-scheduler.xml","serviceVersion": "2.8.4","properties": {"content": "<?xml version=\\"1.0\\" encoding=\\"UTF-8\\"?>\\n<?xml-stylesheet type=\\"text/xsl\\" href=\\"configuration.xsl\\"?>\\n<configuration><property>\\n <name>yarn.scheduler.capacity.maximum-am-resource-percent</name>\\n <value>0.8</value>\\n</property>\\n<property>\\n <name>yarn.scheduler.capacity.maximum-applications</name>\\n <value>1000</value>\\n</property>\\n<property>\\n <name>yarn.scheduler.capacity.root.default.capacity</name>\\n <value>100</value>\\n</property>\\n<property>\\n <name>yarn.scheduler.capacity.root.default.maximum-capacity</name>\\n <value>100</value>\\n</property>\\n<property>\\n <name>yarn.scheduler.capacity.root.default.user-limit-factor</name>\\n <value>1</value>\\n</property>\\n<property>\\n <name>yarn.scheduler.capacity.root.queues</name>\\n <value>default</value>\\n</property>\\n</configuration>"}}]

配置参数说明:

serviceName 组件名,必须大写。

classification 文件名,必须使用全称,包含后缀。

serviceVersion 版本名,为组件版本,该版本必须与 EMR 产品版本中对应的组件版本一致。

properties 中填写需要自行配置的参数。

如需修改 capacity-scheduler.xml、fair-scheduler.xml 中配置参数,properties 中的属性 key 需指定为 content,value 为整个文件的内容。

访问外部集群

配置外部集群 HDFS 的访问地址信息后,可以读取外部集群的数据。

购买时配置

假设条件

假设需要访问外部集群的 nameservice 为 HDFS8088,其访问方式为:

<property><name>dfs.ha.namenodes.HDFS8088</name><value>nn1,nn2</value></property><property><name>dfs.namenode.http-address.HDFS8088.nn1</name><value>172.21.16.11:4008</value></property><property><name>dfs.namenode.https-address.HDFS8088.nn1</name><value>172.21.16.11:4009</value></property><property><name>dfs.namenode.rpc-address.HDFS8088.nn1</name><value>172.21.16.11:4007</value></property><property><name>dfs.namenode.http-address.HDFS8088.nn2</name><value>172.21.16.40:4008</value></property><property><name>dfs.namenode.https-address.HDFS8088.nn2</name><value>172.21.16.40:4009</value></property><property><name>dfs.namenode.rpc-address.HDFS8088.nn2</name><value>172.21.16.40:4007</value></property>

json 文件及说明:

以假设条件为例,框内应该填入 json 文件内容(json 内容要求同自定义软件配置)。

[{"serviceName": "HDFS","classification": "hdfs-site.xml","serviceVersion": "2.7.3","properties": {"newNameServiceName": "newEmrCluster","dfs.ha.namenodes.HDFS8088": "nn1,nn2","dfs.namenode.http-address.HDFS8088.nn1": "172.21.16.11:4008","dfs.namenode.https-address.HDFS8088.nn1": "172.21.16.11:4009","dfs.namenode.rpc-address.HDFS8088.nn1": "172.21.16.11:4007","dfs.namenode.http-address.HDFS8088.nn2": "172.21.16.40:4008","dfs.namenode.https-address.HDFS8088.nn2": "172.21.16.40:4009","dfs.namenode.rpc-address.HDFS8088.nn2": "172.21.16.40:4007"}}]

配置参数说明:

serviceName 组件名,必须为“HDFS”。

classification 文件名,必须为“hdfs-site.xml”。

serviceVersion 版本名,为组件版本,该版本必须与 EMR 产品版本中对应的组件版本一致。

properties 中填写的内容与假设条件一致。

newNameServiceName(选填)表示当前新建集群 nameservice。如为空,则由系统生产;如非空,只能由字符串 + 数字 + 中划线组成。

注意

1. 导入支持范围: 地址、路径、用户名、密码类配置导入。

2. 访问的外部集群只支持高可用集群。

3. 访问的外部集群只支持未开启 kerberos 的集群。

购买后配置

假设条件如下:

假设本集群 nameservice 为 HDFS80238(如果是非高可用集群,一般是 masterIp:rpcport,例如172.21.0.11:4007)。

需要访问外部集群的 nameservice 为 HDFS8088,其访问方式为:

<property><name>dfs.ha.namenodes.HDFS8088</name><value>nn1,nn2</value></property><property><name>dfs.namenode.http-address.HDFS8088.nn1</name><value>172.21.16.11:4008</value></property><property><name>dfs.namenode.https-address.HDFS8088.nn1</name><value>172.21.16.11:4009</value></property><property><name>dfs.namenode.rpc-address.HDFS8088.nn1</name><value>172.21.16.11:4007</value></property><property><name>dfs.namenode.http-address.HDFS8088.nn2</name><value>172.21.16.40:4008</value></property><property><name>dfs.namenode.https-address.HDFS8088.nn2</name><value>172.21.16.40:4009</value></property><property><name>dfs.namenode.rpc-address.HDFS8088.nn2</name><value>172.21.16.40:4007</value><property>

1. 进入 配置下发 页面,选择 hdfs 组件的

hdfs-site.xml文件。2. 修改配置项 dfs.nameservices 为

HDFS80238,HDFS8088。3. 增加配置项及值

配置项 | 配置值 |

dfs.ha.namenodes.HDFS8088 | nn1,nn2 |

fs.namenode.http-address.HDFS8088.nn1 | 172.21.16.11:4008 |

dfs.namenode.https-address.HDFS8088.nn1 | 172.21.16.11:4009 |

dfs.namenode.rpc-address.HDFS8088.nn1 | 172.21.16.11:4007 |

fs.namenode.http-address.HDFS8088.nn2 | 172.21.16.40:4008 |

dfs.namenode.https-address.HDFS8088.nn2 | 172.21.16.40:4009 |

dfs.namenode.rpc-address.HDFS8088.nn2 | 172.21.16.40:4007 |

dfs.client.failover.proxy.provider.HDFS8088 | org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider |

dfs.internal.nameservices | HDFS80238 |

注意

dfs.internal.nameservice 需要新增,否则扩容集群后可能导致 datanode 上报异常而被 namenode 标记为 dead。

4. 下发配置,使用 配置下发 功能下发配置。