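After metadata acceleration is enabled, COS generates a mount point for the metadata-accelerated bucket. You can download an HDFS client and enter the bucket information in the client to mount the bucket. This document describes how to mount a bucket with metadata acceleration enabled in a compute cluster so that it can be accessed over HDFS.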

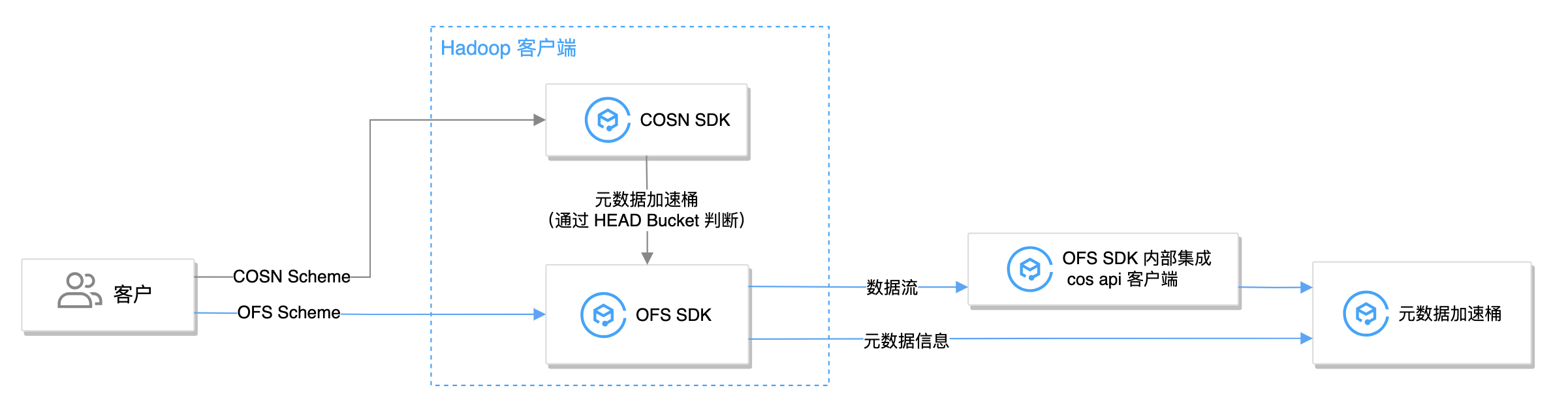

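Client Overview

Accessing COS over HDFS requires two clients:

- A Hadoop client, which implements HDFS semantics.
- A COS API client, which accesses the COS bucket.

Metadata-accelerated buckets are compatible with two Hadoop clients:

- Hadoop-COS, which uses COSN as its scheme and is also called the COSN client.
- chdfs-hadoop-plugin, which uses OFS as its scheme and is also called the OFS client.

The COSN client first sends a HEAD Bucket request to confirm that the bucket is metadata-accelerated. It then forwards all subsequent requests to the OFS client. Therefore, unless you also need compatibility with ordinary COS buckets, we recommend the OFS client for a more native experience.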


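Environment Dependencies

The dependencies of each client are described below.

To use the OFS client, you only need to download the chdfs-hadoop-plugin jar:

Download: chdfs-hadoop-plugin

Version requirement: 2.7 or later

Note:

The OFS client pulls the COS API client automatically, so you do not need to install it. After the bucket is mounted, you can find the corresponding JAR and its version in the directory configured by fs.ofs.tmp.cache.dir.

To use the COSN client, download the Hadoop-COS (COSN) jar, the chdfs-hadoop-plugin (OFS) jar, and the cos_api-bundle jar: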

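Hadoop-COS (COSN)

Download: COSN (hadoop-cos)

Version requirement: 8.1.5 or later

chdfs-hadoop-plugin (OFS)

Download: chdfs-hadoop-plugin

Version requirement: 2.7 or later

cos_api-bundle

Download: cos_api-bundle

Version requirement: must correspond to the COSN version. Check the COSN GitHub releases to confirm.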
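You can check the minimum versions above with a small shell sketch. It makes the following assumptions:

- It runs under bash and uses GNU sort -V (version sort), which is standard on typical Linux hosts.
- version_ge and check_min are hypothetical helper names.
- The version numbers you pass in come from your own jar file names.

```shell
# version_ge A B: exit status 0 when version A >= version B.
# Uses GNU `sort -V`, which orders 2.10 after 2.7 (unlike plain `sort`).
version_ge() {
  # A >= B exactly when B is the smaller (first) value after version sorting.
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Minimum versions taken from the requirements above.
MIN_CHDFS="2.7"   # chdfs-hadoop-plugin (OFS)
MIN_COSN="8.1.5"  # Hadoop-COS (COSN)

# check_min NAME FOUND MIN: prints one OK / TOO OLD line for a package.
check_min() {
  if version_ge "$2" "$3"; then
    echo "$1 $2: OK (>= $3)"
  else
    echo "$1 $2: TOO OLD (need >= $3)"
  fi
}

# Example: check_min chdfs-hadoop-plugin 2.8 "$MIN_CHDFS"
```

The sketch does not check cos_api-bundle, because its required version depends on the COSN release rather than on a fixed minimum.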

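Mounting in a Tencent Cloud EMR Environment

Prerequisites

A metadata-accelerated bucket has been created with the HDFS protocol enabled. The bucket is bound to the VPC where the compute cluster resides. For details, see Creating a Metadata-Accelerated Bucket and Enabling the HDFS Protocol.

An EMR cluster has been created in the same region, with network connectivity to the bucket. For details, see the EMR Getting Started guide.

The EMR temrfs client already bundles COSN and the API bundle, so you only need to download the CHDFS client separately. Download: chdfs-hadoop-plugin.

Steps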

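1. Log in to the EMR environment you created. On that machine, run the following commands to check whether the JAR versions meet the Environment Dependencies requirements:

find / -name "chdfs*"
find / -name "temrfs_hadoop*"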

In the search results, make sure that both the temrfs_hadoop_plugin and chdfs_hadoop_plugin jars are present, and that the chdfs_hadoop_plugin version is 2.7 or later.
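The version check in step 1 can be partly automated by extracting the version from each jar path that find returns. This bash sketch makes the following assumptions:

- Jar names have the form <name>-<version>.jar, such as chdfs_hadoop_plugin_network-2.8.jar. That naming is an assumption, so compare it against your actual find output first.
- jar_version and check_chdfs_jars are hypothetical helpers.

```shell
# jar_version PATH: prints the version at the end of a jar file name,
# e.g. /usr/lib/chdfs_hadoop_plugin_network-2.8.jar -> 2.8 (ASSUMED naming).
jar_version() {
  local name
  name="$(basename "$1" .jar)"   # drop the directory and the .jar suffix
  printf '%s\n' "${name##*-}"    # keep the text after the last '-'
}

# check_chdfs_jars PATH...: reports whether each jar meets the 2.7 minimum.
check_chdfs_jars() {
  local jar ver
  for jar in "$@"; do
    ver="$(jar_version "$jar")"
    # sort -V puts the smaller version first; 2.7 first means ver >= 2.7.
    if [ "$(printf '%s\n2.7\n' "$ver" | sort -V | head -n 1)" = "2.7" ]; then
      echo "OK $jar ($ver)"
    else
      echo "OUTDATED $jar ($ver)"
    fi
  done
}

# Usage on an EMR node (scans the whole filesystem, so it can be slow):
#   mapfile -t jars < <(find / -name "chdfs*.jar" 2>/dev/null)
#   check_chdfs_jars "${jars[@]}"
```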

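2. (Optional) To update the chdfs_hadoop_plugin version, perform the following steps.

2.1 Download the script files for updating the jars from the following addresses:

2.2 Place the two script files in the /root directory of the server, add execute permission to update_cos_jar.sh, and run the following command.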

Note:

Replace the URL argument with the bucket for your region. For example, for the Guangzhou region, use https://hadoop-jar-guangzhou-1259378398.cos.ap-guangzhou.myqcloud.com/hadoop_plugin_network/2.7.

sh update_cos_jar.sh https://hadoop-jar-beijing-1259378398.cos.ap-beijing.myqcloud.com/hadoop_plugin_network/2.7

2.3 Repeat the steps above on every EMR node until the jars on all machines have been replaced.
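The two example URLs for step 2.2 (ap-beijing and ap-guangzhou) follow the same pattern, so the argument can be derived from the region name. This sketch makes the following assumptions:

- Every ap-* region follows that pattern. The two examples suggest this but do not confirm it.
- plugin_url is a hypothetical helper.

Regions outside ap-* are rejected rather than guessed.

```shell
# plugin_url REGION: builds the URL argument for update_cos_jar.sh,
# ASSUMING the pattern shown for ap-beijing and ap-guangzhou holds elsewhere.
plugin_url() {
  local region="$1" city
  case "$region" in
    ap-*) city="${region#ap-}" ;;   # ap-guangzhou -> guangzhou
    *) echo "no known URL pattern for region: $region" >&2; return 1 ;;
  esac
  printf 'https://hadoop-jar-%s-1259378398.cos.%s.myqcloud.com/hadoop_plugin_network/2.7\n' \
    "$city" "$region"
}

# Usage on each EMR node:
#   sh update_cos_jar.sh "$(plugin_url ap-guangzhou)"
```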

3. (Optional) To update the hadoop-cos package or the cos_api-bundle version, see the EMR update documentation.

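4. In the EMR console, configure core-site.xml and add the configuration items fs.cosn.bucket.region and fs.cosn.trsf.fs.ofs.bucket.region. These parameters specify the COS region where the bucket resides, for example ap-shanghai.

Note:

fs.cosn.bucket.region and fs.cosn.trsf.fs.ofs.bucket.region are required settings. To find the region of your bucket, see the COS region documentation.

5. Sync core-site.xml to all Hadoop nodes and restart resident services such as YARN, Hive, Presto, and Impala.

6. Verify the mount.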

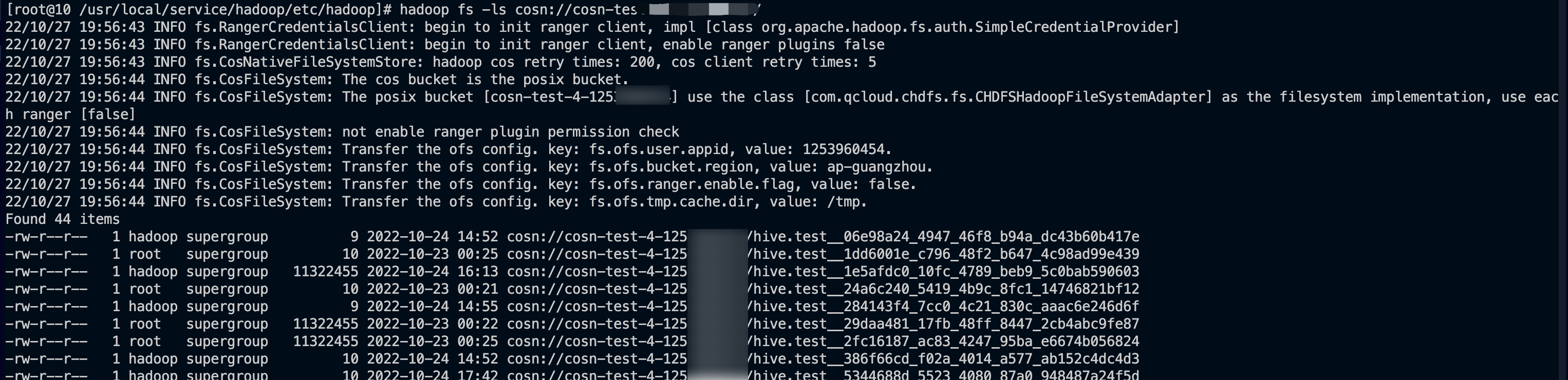

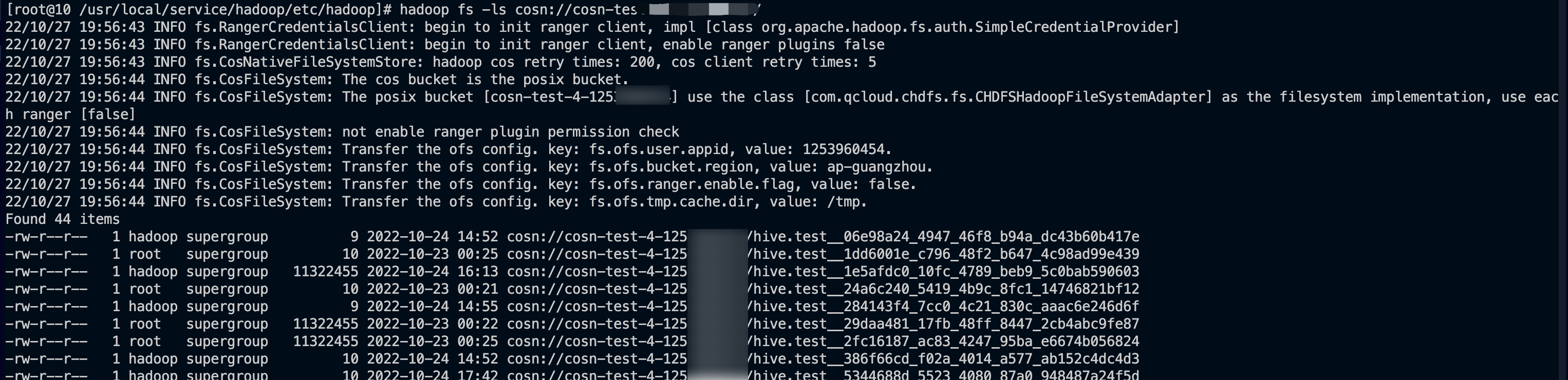

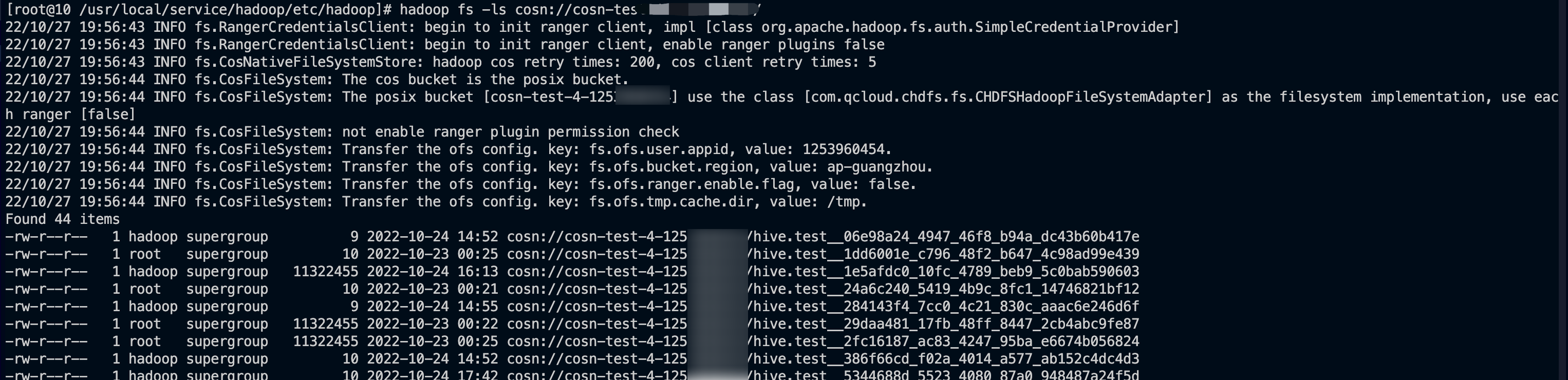

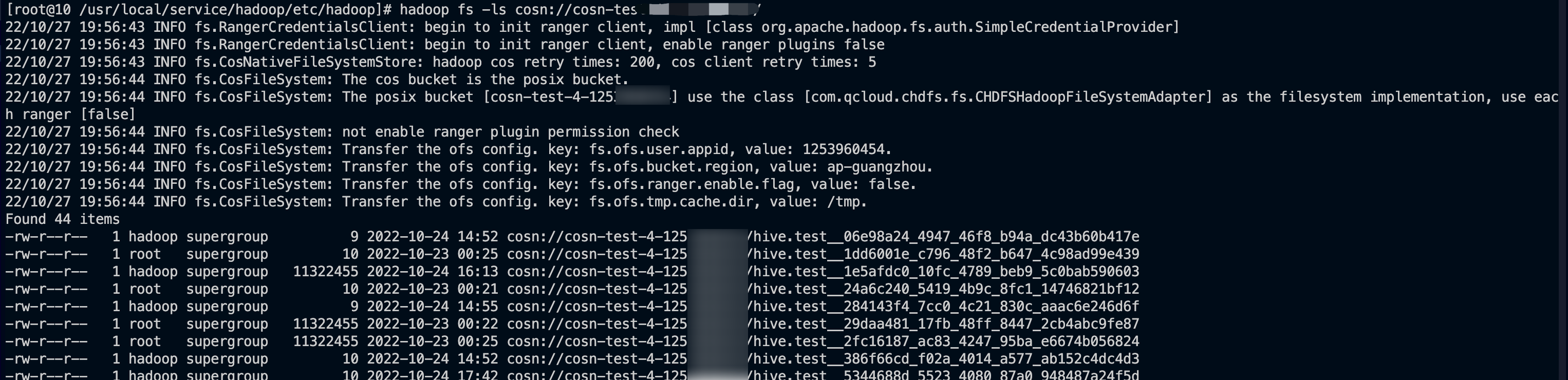

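Use the hadoop fs command-line tool to run the following command, where bucketname-appid is the mount address, that is, the bucket name:

hadoop fs -ls cosn://${bucketname-appid}/

If the file list is returned normally, the COS bucket has been mounted successfully.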
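For reference, the two region settings added in step 4 correspond to the following core-site.xml entries. The bash sketch below prints them for a given region, for example to compare against the core-site.xml delivered to each node. It makes the following assumptions:

- xml_property and region_properties are hypothetical helpers, not EMR or COS tools.
- ap-shanghai is only an example value.

```shell
# xml_property NAME VALUE: prints one core-site.xml <property> element.
xml_property() {
  printf '<property>\n  <name>%s</name>\n  <value>%s</value>\n</property>\n' "$1" "$2"
}

# region_properties REGION: prints the two required region settings from step 4.
region_properties() {
  xml_property fs.cosn.bucket.region "$1"
  xml_property fs.cosn.trsf.fs.ofs.bucket.region "$1"
}

# Example: region_properties ap-shanghai
```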

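Mounting in a Self-Built Hadoop/CDH Environment

Prerequisites

A metadata-accelerated bucket has been created with the HDFS protocol enabled. The bucket is bound to the VPC where the compute cluster resides. For details, see Creating a Metadata-Accelerated Bucket and Enabling the HDFS Protocol.

Java 1.8 or Java 11 is installed on every machine or container in the compute cluster that will mount the bucket.

The packages listed in Environment Dependencies have been downloaded in the required versions.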
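You can check the Java prerequisite on each machine with the sketch below. It makes the following assumptions:

- It runs under bash.
- java -version prints the version as the first double-quoted token. Common OpenJDK builds do this, but treat it as an assumption for your JDK.
- java_major and java_supported are hypothetical helper names.

```shell
# java_major VERSION: extracts the major Java version from the quoted version
# string, e.g. "1.8.0_392" -> 8 (legacy 1.x scheme) and "11.0.21" -> 11.
java_major() {
  local v="$1"
  case "$v" in
    1.*) v="${v#1.}" ;;          # legacy scheme: 1.8.x means Java 8
  esac
  printf '%s\n' "${v%%[._]*}"    # keep the leading numeric component
}

# java_supported: succeeds if the java on PATH is Java 8 or 11; fails if java
# is missing or any other version is installed.
java_supported() {
  local raw major
  # `java -version` writes to stderr; take the first "..." token.
  raw="$(java -version 2>&1 | sed -n 's/.*version "\([^"]*\)".*/\1/p' | head -n 1)"
  major="$(java_major "$raw")"
  [ "$major" = "8" ] || [ "$major" = "11" ]
}

# Usage: java_supported && echo "Java OK" || echo "Java 8 or 11 required"
```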

Steps

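1. Place the packages from Environment Dependencies in the classpath of every server in the Hadoop cluster.

Note:

Reference path: /usr/local/service/hadoop/share/hadoop/common/lib/. Place the packages according to your actual setup; the location may differ between components.

2. Modify the hadoop-env.sh file. Go to the $HADOOP_HOME/etc/hadoop directory and edit hadoop-env.sh. Add the following content to put the cosn-related jars on the Hadoop classpath:

# Append every jar under tools/lib to HADOOP_CLASSPATH (colon-separated)
for f in "$HADOOP_HOME"/share/hadoop/tools/lib/*.jar; do
  if [ -n "$HADOOP_CLASSPATH" ]; then
    HADOOP_CLASSPATH="$HADOOP_CLASSPATH:$f"
  else
    HADOOP_CLASSPATH="$f"
  fi
done
export HADOOP_CLASSPATH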
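You can spot-check steps 1 and 2 on each server with the bash sketch below.

- missing_jars looks for the three jars in a directory.
- classpath_has searches HADOOP_CLASSPATH after hadoop-env.sh is loaded.

The file-name patterns hadoop-cos-*.jar, chdfs_hadoop_plugin_network-*.jar, and cos_api-bundle-*.jar are assumptions about how the downloaded jars are named, so adjust them to your files. If you use only the OFS client, only the chdfs jar is needed.

```shell
# ASSUMED file-name patterns of the jars from Environment Dependencies.
REQUIRED_JARS=('hadoop-cos-*.jar' 'chdfs_hadoop_plugin_network-*.jar' 'cos_api-bundle-*.jar')

# missing_jars DIR: prints each pattern in REQUIRED_JARS with no match in DIR.
missing_jars() {
  local dir="$1" pattern
  for pattern in "${REQUIRED_JARS[@]}"; do
    # compgen -G succeeds only when the glob matches at least one file.
    compgen -G "$dir/$pattern" >/dev/null || echo "$pattern"
  done
}

# classpath_has GLOB [CLASSPATH]: succeeds if the file name of any
# colon-separated CLASSPATH entry (default: $HADOOP_CLASSPATH) matches GLOB.
classpath_has() {
  local glob="$1" cp="${2-$HADOOP_CLASSPATH}" entry
  local -a entries
  IFS=':' read -r -a entries <<< "$cp"   # split on ':' without glob expansion
  for entry in "${entries[@]}"; do
    case "${entry##*/}" in
      $glob) return 0 ;;                  # unquoted on purpose: GLOB is a pattern
    esac
  done
  return 1
}

# Usage (the directory is the reference path from step 1):
#   missing_jars /usr/local/service/hadoop/share/hadoop/common/lib
#   . "$HADOOP_HOME/etc/hadoop/hadoop-env.sh" && classpath_has 'hadoop-cos-*.jar' && echo "on classpath"
```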

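3. Configure core-site.xml in the compute cluster and add the following required settings.

The required settings for the OFS client are as follows:

| Configuration Item | Value | Description |
| --- | --- | --- |
| fs.AbstractFileSystem.ofs.impl | com.qcloud.chdfs.fs.CHDFSDelegateFSAdapter | Implementation class for accessing metadata-accelerated buckets |
| fs.ofs.impl | com.qcloud.chdfs.fs.CHDFSHadoopFileSystemAdapter | Implementation class for accessing metadata-accelerated buckets |
| fs.ofs.tmp.cache.dir | Format: /data/emr/hdfs/tmp/posix-cosn/ | Set this to a local directory that actually exists; temporary files generated at runtime are stored here. Make sure the directory has enough space and suitable permissions on every node, for example /data/emr/hdfs/tmp/posix-cosn/ |
| fs.ofs.user.appid | Format: 1250000000 | Required. Your APPID |
| fs.ofs.bucket.region | Format: ap-beijing | Required. Region of your bucket |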

Sample core-site.xml configuration:

<!-- OFS implementation class -->
<property>
  <name>fs.AbstractFileSystem.ofs.impl</name>
  <value>com.qcloud.chdfs.fs.CHDFSDelegateFSAdapter</value>
</property>
<!-- OFS implementation class -->
<property>
  <name>fs.ofs.impl</name>
  <value>com.qcloud.chdfs.fs.CHDFSHadoopFileSystemAdapter</value>
</property>
<!-- Local cache directory. During reads and writes, data is written to local disk when the in-memory cache is insufficient. The directory is created automatically if it does not exist. -->
<property>
  <name>fs.ofs.tmp.cache.dir</name>
  <value>/data/chdfs_tmp_cache</value>
</property>
<!-- Your APPID, which you can view in the Tencent Cloud console (https://console.cloud.tencent.com/developer) -->
<property>
  <name>fs.ofs.user.appid</name>
  <value>1250000000</value>
</property>
<!-- Region of your bucket, in the form ap-guangzhou -->
<property>
  <name>fs.ofs.bucket.region</name>
  <value>ap-guangzhou</value>
</property>
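Every OFS value except the APPID, region, and cache directory is fixed, so the block above can also be generated. The bash sketch below makes the following assumptions:

- ofs_properties and xml_property are hypothetical helpers, not COS tools.
- 1250000000, ap-guangzhou, and /data/chdfs_tmp_cache are only the example values from this page, not defaults.

```shell
# xml_property NAME VALUE: prints one core-site.xml <property> element.
xml_property() {
  printf '<property>\n  <name>%s</name>\n  <value>%s</value>\n</property>\n' "$1" "$2"
}

# ofs_properties APPID REGION CACHE_DIR: prints the five required OFS settings.
ofs_properties() {
  xml_property fs.AbstractFileSystem.ofs.impl com.qcloud.chdfs.fs.CHDFSDelegateFSAdapter
  xml_property fs.ofs.impl com.qcloud.chdfs.fs.CHDFSHadoopFileSystemAdapter
  xml_property fs.ofs.tmp.cache.dir "$3"
  xml_property fs.ofs.user.appid "$1"
  xml_property fs.ofs.bucket.region "$2"
}

# Example: ofs_properties 1250000000 ap-guangzhou /data/chdfs_tmp_cache
# Paste the output inside <configuration> in core-site.xml.
```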

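COSN settings: used to send the HEAD Bucket request. The required settings are as follows:

Note:

Avoid using permanent keys in the configuration wherever possible. Configuring a sub-account key or a temporary key helps improve business security. When granting permissions to a sub-account, follow the principle of least privilege to avoid unintended data leakage.

If you must use a permanent key, limit its permission scope according to the principle of least privilege. Restrict the operations it can perform, the resources it can access, and the conditions (such as source IP) under which it can be used.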

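| Configuration Item | Value | Description |
| --- | --- | --- |
| fs.cosn.userinfo.secretId / fs.cosn.userinfo.secretKey | Format: ************************************ | SecretId and SecretKey of the account's API key |
| fs.cosn.impl | org.apache.hadoop.fs.CosFileSystem | COSN implementation class for FileSystem; always org.apache.hadoop.fs.CosFileSystem. |
| fs.AbstractFileSystem.cosn.impl | org.apache.hadoop.fs.CosN | COSN implementation class for AbstractFileSystem; always org.apache.hadoop.fs.CosN. |
| fs.cosn.bucket.region | Format: ap-beijing | Region of your bucket |
| fs.cosn.tmp.dir | Default: /tmp/hadoop_cos | Set this to a local directory that actually exists; temporary files generated at runtime are stored here. Make sure the directory has enough space and suitable permissions on every node. |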

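OFS settings: used to process the forwarded requests. Within the COSN configuration, each OFS setting name takes the fs.cosn.trsf. prefix; for example, fs.ofs.impl becomes fs.cosn.trsf.fs.ofs.impl. The required settings are as follows:

| Configuration Item | Value | Description |
| --- | --- | --- |
| fs.cosn.trsf.fs.AbstractFileSystem.ofs.impl | com.qcloud.chdfs.fs.CHDFSDelegateFSAdapter | Implementation class for accessing metadata-accelerated buckets |
| fs.cosn.trsf.fs.ofs.impl | com.qcloud.chdfs.fs.CHDFSHadoopFileSystemAdapter | Implementation class for accessing metadata-accelerated buckets |
| fs.cosn.trsf.fs.ofs.tmp.cache.dir | Format: /data/emr/hdfs/tmp/posix-cosn/ | Set this to a local directory that actually exists; temporary files generated at runtime are stored here. Make sure the directory has enough space and suitable permissions on every node, for example /data/emr/hdfs/tmp/posix-cosn/ |
| fs.cosn.trsf.fs.ofs.user.appid | Format: 1250000000 | Required. Your APPID |
| fs.cosn.trsf.fs.ofs.bucket.region | Format: ap-beijing | Required. Region of your bucket |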
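The table above follows one rule: each OFS setting name gets the fs.cosn.trsf. prefix. The bash sketch below applies that rule, which can help when converting an existing OFS configuration for use through COSN. trsf_key is a hypothetical helper, and it rejects names that are not OFS settings.

```shell
# trsf_key OFS_KEY: prints the name an OFS setting takes when requests are
# forwarded from the COSN client (the OFS key prefixed with "fs.cosn.trsf.").
trsf_key() {
  case "$1" in
    fs.ofs.*|fs.AbstractFileSystem.ofs.*) printf 'fs.cosn.trsf.%s\n' "$1" ;;
    *) echo "not an OFS setting: $1" >&2; return 1 ;;
  esac
}

# Example: print the forwarded names of all five required OFS settings.
#   for key in fs.AbstractFileSystem.ofs.impl fs.ofs.impl fs.ofs.tmp.cache.dir \
#              fs.ofs.user.appid fs.ofs.bucket.region; do
#     trsf_key "$key"
#   done
```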

Sample core-site.xml configuration:

<!-- API key of the account. You can view your Cloud API keys in the [CAM console](https://console.cloud.tencent.com/capi). -->
<!-- We recommend a sub-account key or a temporary key for better security. When granting permissions to a sub-account, follow the [principle of least privilege](https://cloud.tencent.com/document/product/436/38618). -->
<property>
  <name>fs.cosn.userinfo.secretId</name>
  <value>************************************</value>
</property>
<property>
  <name>fs.cosn.userinfo.secretKey</name>
  <value>************************************</value>
</property>
<!-- COSN implementation class -->
<property>
  <name>fs.AbstractFileSystem.cosn.impl</name>
  <value>org.apache.hadoop.fs.CosN</value>
</property>
<!-- COSN implementation class -->
<property>
  <name>fs.cosn.impl</name>
  <value>org.apache.hadoop.fs.CosFileSystem</value>
</property>
<!-- Region of your bucket, in the form ap-guangzhou -->
<property>
  <name>fs.cosn.bucket.region</name>
  <value>ap-guangzhou</value>
</property>
<!-- Local temporary directory for files generated at runtime -->
<property>
  <name>fs.cosn.tmp.dir</name>
  <value>/tmp/hadoop_cos</value>
</property>
<!-- OFS implementation class -->
<property>
  <name>fs.cosn.trsf.fs.AbstractFileSystem.ofs.impl</name>
  <value>com.qcloud.chdfs.fs.CHDFSDelegateFSAdapter</value>
</property>
<!-- OFS implementation class -->
<property>
  <name>fs.cosn.trsf.fs.ofs.impl</name>
  <value>com.qcloud.chdfs.fs.CHDFSHadoopFileSystemAdapter</value>
</property>
<!-- Local cache directory. During reads and writes, data is written to local disk when the in-memory cache is insufficient. The directory is created automatically if it does not exist. -->
<property>
  <name>fs.cosn.trsf.fs.ofs.tmp.cache.dir</name>
  <value>/data/chdfs_tmp_cache</value>
</property>
<!-- Your APPID, which you can view in the Tencent Cloud console (https://console.cloud.tencent.com/developer) -->
<property>
  <name>fs.cosn.trsf.fs.ofs.user.appid</name>
  <value>1250000000</value>
</property>
<!-- Region of your bucket, in the form ap-guangzhou -->
<property>
  <name>fs.cosn.trsf.fs.ofs.bucket.region</name>
  <value>ap-guangzhou</value>
</property>
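Before restarting services, you can check that core-site.xml contains every required setting from the COSN and forwarded-OFS tables. The bash sketch below does this with a plain text search for <name>KEY</name>. It makes the following assumptions:

- Each name is written on one line, without extra whitespace inside the element.
- The check does not validate values or XML structure.
- missing_keys is a hypothetical helper.

```shell
# Required setting names from the COSN and forwarded-OFS tables.
REQUIRED_KEYS=(
  fs.cosn.userinfo.secretId fs.cosn.userinfo.secretKey
  fs.cosn.impl fs.AbstractFileSystem.cosn.impl
  fs.cosn.bucket.region fs.cosn.tmp.dir
  fs.cosn.trsf.fs.AbstractFileSystem.ofs.impl fs.cosn.trsf.fs.ofs.impl
  fs.cosn.trsf.fs.ofs.tmp.cache.dir fs.cosn.trsf.fs.ofs.user.appid
  fs.cosn.trsf.fs.ofs.bucket.region
)

# missing_keys FILE: prints each required name that has no <name>KEY</name>
# element in FILE (plain text search, not an XML parse).
missing_keys() {
  local file="$1" key
  for key in "${REQUIRED_KEYS[@]}"; do
    # -F: match the text literally, so the dots are not regex wildcards.
    grep -qF "<name>$key</name>" "$file" || echo "$key"
  done
}

# Usage: missing_keys "$HADOOP_HOME/etc/hadoop/core-site.xml"
```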

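4. Verify the mount.

Use the hadoop fs command-line tool to run the following command, where bucketname-appid is the mount address, that is, the bucket name:

hadoop fs -ls cosn://${bucketname-appid}/

If the file list is returned normally, the COS bucket has been mounted successfully.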
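When you type the verification command in a shell, note that ${bucketname-appid} is a placeholder for the full bucket name, not a variable. bash reads it as parameter expansion: the value of $bucketname, or the literal word appid if bucketname is unset. The sketch below builds the URI from an explicit bucket name instead. It makes the following assumptions:

- cosn_uri is a hypothetical helper.
- examplebucket-1250000000 is a placeholder bucket name.
- The name check (lowercase letters, digits and hyphens, ending in -APPID) is a loose assumption, not the official naming rule.

```shell
# cosn_uri BUCKET_NAME: builds the URI for the verification command.
# BUCKET_NAME is the full bucket name, which ends in the APPID,
# e.g. examplebucket-1250000000.
cosn_uri() {
  # Loose check: lowercase letters, digits and '-', ending in -<digits>.
  if [[ "$1" =~ ^[a-z0-9-]+-[0-9]+$ ]]; then
    printf 'cosn://%s/\n' "$1"
  else
    echo "expected <bucketname>-<appid>, got: $1" >&2
    return 1
  fi
}

# Usage:
#   hadoop fs -ls "$(cosn_uri examplebucket-1250000000)"
# Pitfall: with bucketname unset, "${bucketname-appid}" expands to "appid".
```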

Note: