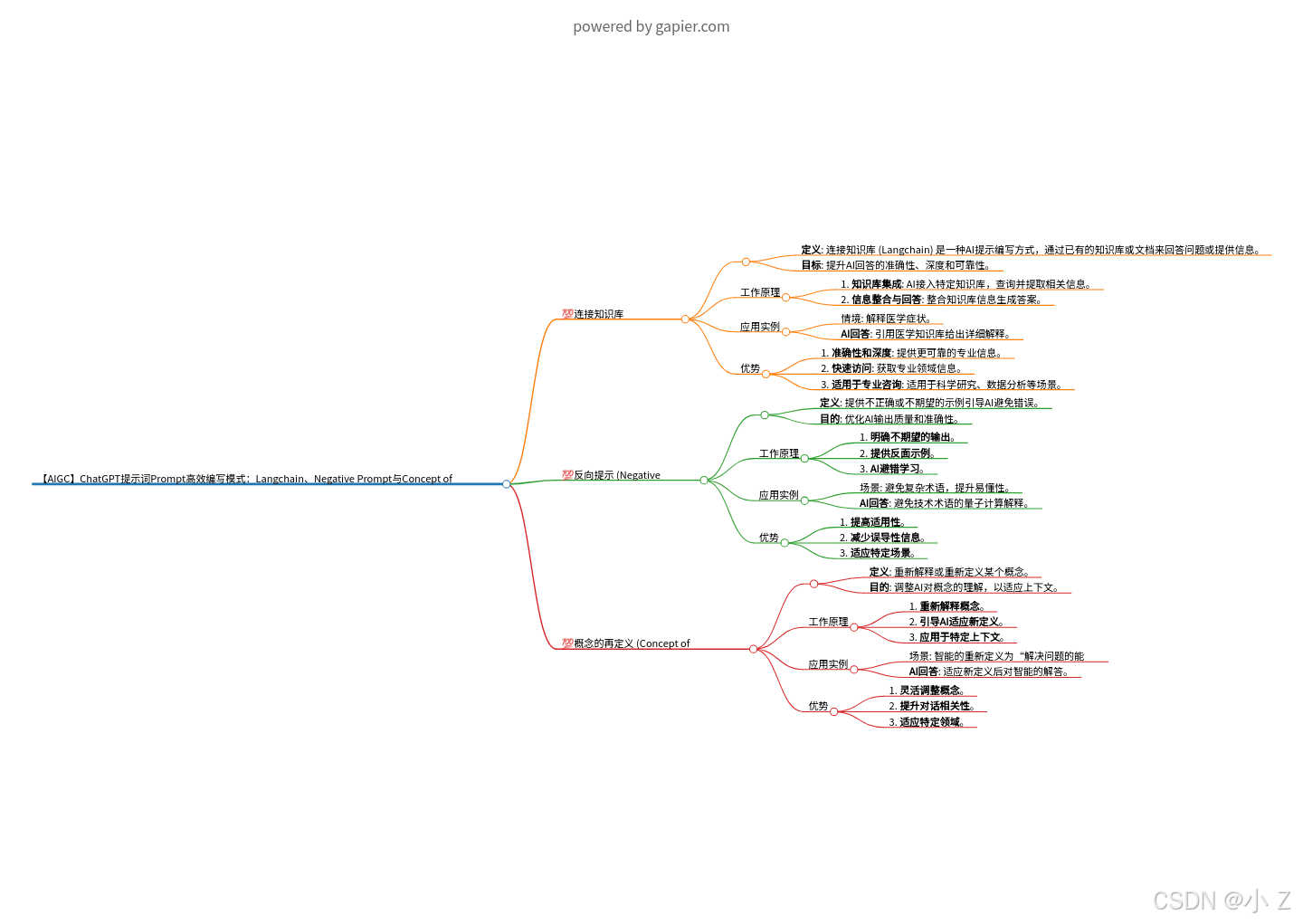

[AIGC] Efficient Prompt-Writing Patterns for ChatGPT: Langchain, Negative Prompt, and Concept of Redefinition

CSDN-Z

Published 2024-10-23 09:03:06

💯Introduction

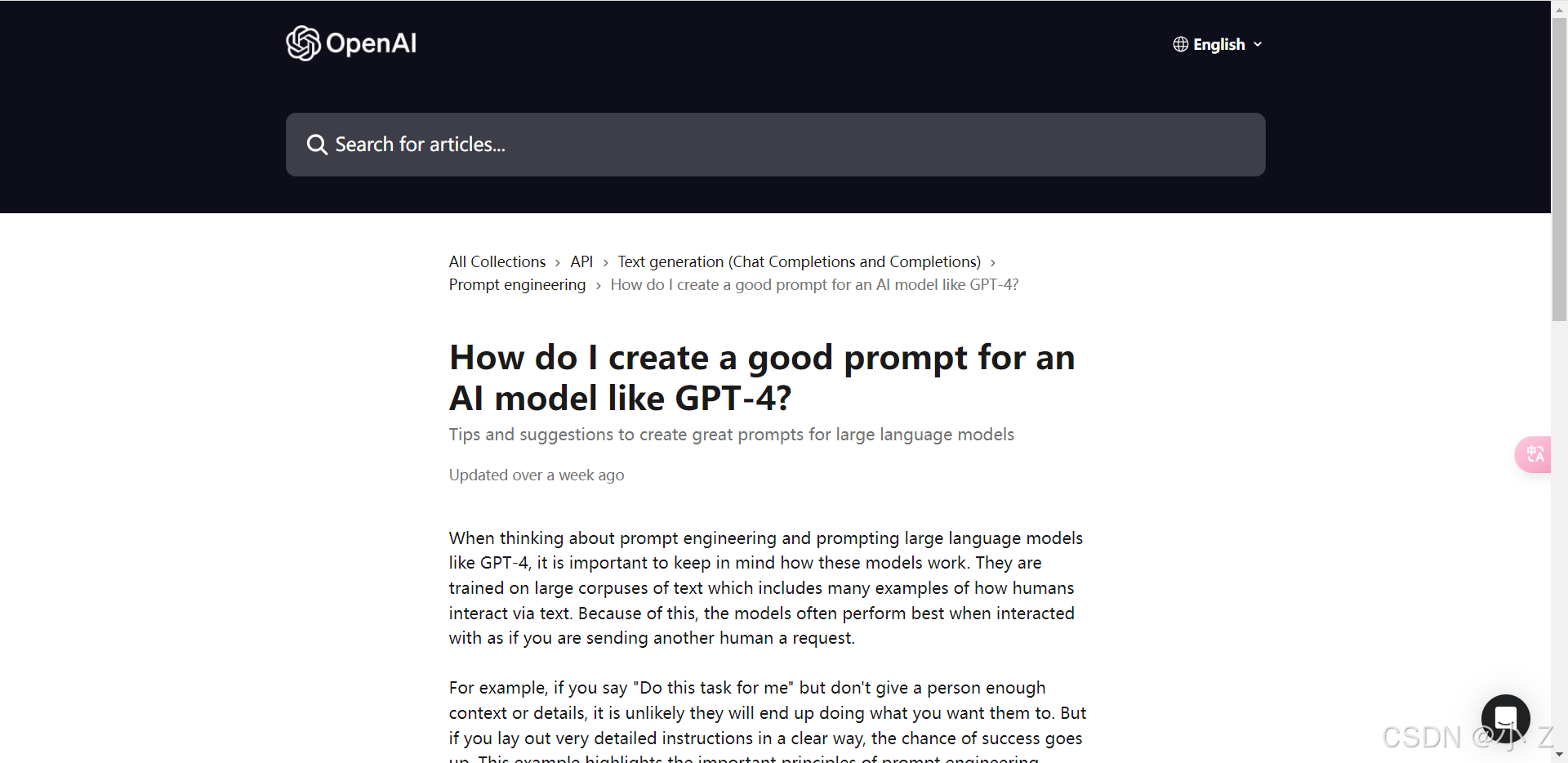

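- In generative AI applications, improving the quality and reliability of model output is critical. Well-written prompts help the AI understand user needs more accurately and help avoid misunderstandings and undesirable output. This article examines three efficient prompt-writing patterns: connecting a knowledge base, negative prompting, and concept redefinition. Readers will learn how to use an existing knowledge base to improve answer quality, how to steer the model away from unwanted output with negative prompts, and how to redefine a concept to make a conversation more precise and useful. Applied together, these techniques make AI-generated content more reliable and controllable. Related reading: How to Write Effective Prompts for GPT-4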

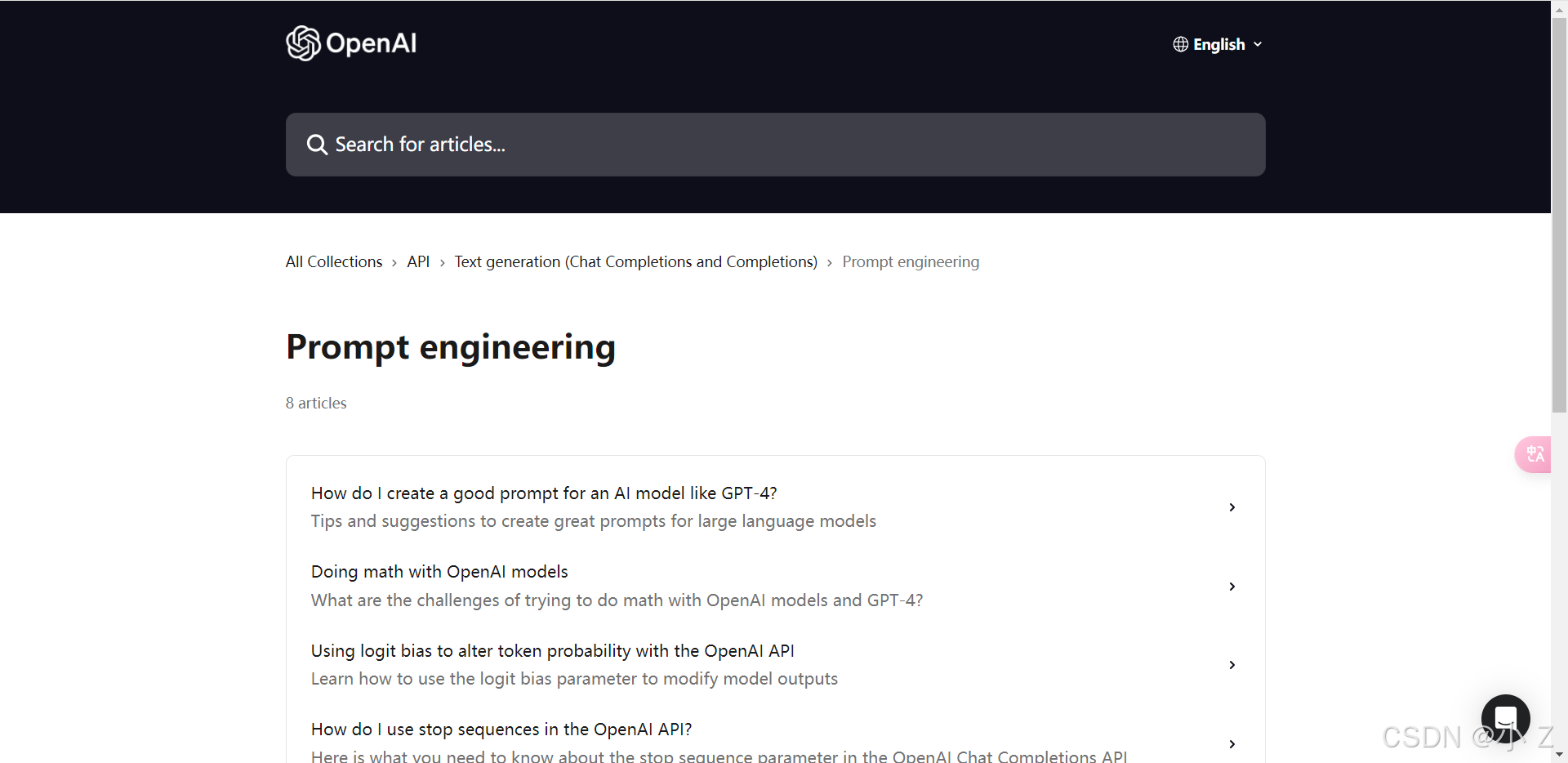

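💯Connecting a Knowledge Base (Langchain)

- Definition:

- Connecting a knowledge base (Langchain) is a prompt-writing pattern in which the AI model draws on an existing knowledge base or set of documents to answer questions or supply relevant information. The name refers to LangChain, an open-source framework for connecting language models to external data sources.

- Goal:

- Improve the accuracy, depth, and reliability of AI answers, especially in scenarios that require citing specific knowledge or information.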

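How it works

1. Knowledge base integration:

- The AI model is connected to a specific knowledge base or data source, such as professional documents, books, databases, or online resources.

2. Query and extraction:

- When the user asks a question, the model queries the connected knowledge base and extracts the relevant information.

3. Information integration and answer:

- The model combines the information retrieved from the knowledge base with its own processing capabilities to generate an accurate and comprehensive answer.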
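The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not the LangChain API: the in-memory `KNOWLEDGE_BASE`, the word-overlap scoring in `retrieve`, and the prompt wording in `build_prompt` are all assumptions chosen to keep the example self-contained. A production setup would more likely use embeddings and a vector store.

```python
import re

# Step 1 (assumption): a tiny in-memory knowledge base standing in for real documents.
KNOWLEDGE_BASE = [
    {"source": "cardiology-notes", "text": "Atrial fibrillation is a heart rhythm disorder with rapid, irregular atrial beats."},
    {"source": "cardiology-notes", "text": "Treatment options include medication, electrical cardioversion, and surgery."},
    {"source": "nutrition-notes", "text": "A balanced diet includes vegetables, fruit, and whole grains."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Step 2: rank documents by word overlap with the question and keep the best matches."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # Sort on the score only
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question, passages):
    """Step 3: place the retrieved passages in front of the question."""
    context = "\n".join(f"- {p['text']}" for p in passages)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


question = "What is atrial fibrillation and how is the treatment chosen?"
passages = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, passages)
print(prompt)
```

The resulting `prompt` string is what would be sent to the model. Restricting the model to the supplied context is one common way to keep answers tied to the knowledge base rather than to the model's general training data.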

应用实例

- 情境示例:

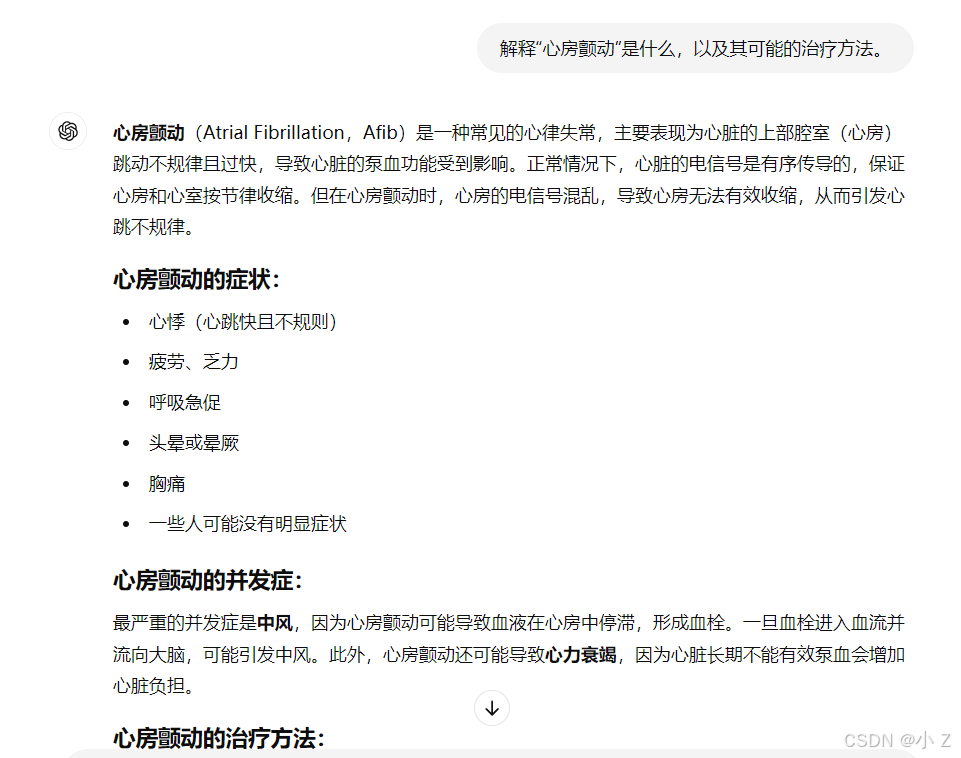

- 假设用户想要了解某个医学症状的详细信息,例如心房颤动的定义和治疗方法。

- Langchain的应用:

- 用户问题: “请解释心房颤动是什么,以及可能的治疗方法。”

- AI模型响应(连接医学知识库): “心房颤动是一种常见的心脏节律障碍,表现为心房快速且不规则的跳动。治疗方法可能包括药物治疗、电复律或外科手术。根据《心脏病学杂志》中的相关数据,推荐的治疗方式因患者的具体情况而有所不同……”

- 总结:

- 在这一过程中,AI模型不仅提供了基础的定义,还引用了专业的医学知识库,从而增强了回答的准确性和深度。
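To get an answer that names its sources, as the response above does, the prompt can label each passage and ask the model to cite it. The sketch below is one possible way to do that. The function name `build_cited_prompt`, the instruction wording, and the two passages are illustrative assumptions; the passages paraphrase the example answer and are placeholders, not real citations.

```python
def build_cited_prompt(question, passages):
    """Number each passage and ask the model to cite the numbers it relies on."""
    numbered = [
        f"[{i}] ({p['source']}) {p['text']}"
        for i, p in enumerate(passages, start=1)
    ]
    return (
        "Answer the question using only the numbered sources below. "
        "Cite sources as [n] after each claim, and say so if the sources "
        "do not contain the answer.\n\n"
        + "\n".join(numbered)
        + f"\n\nQuestion: {question}"
    )


# Placeholder passages that paraphrase the example answer; not real citations.
passages = [
    {"source": "Journal of Cardiology", "text": "Recommended treatment varies with the patient's condition."},
    {"source": "medical knowledge base", "text": "Atrial fibrillation is a common heart rhythm disorder."},
]
prompt = build_cited_prompt(
    "Please explain what atrial fibrillation is and the possible treatments.", passages
)
print(prompt)
```

Numbering the sources makes it easy to check afterwards which passage each claim in the answer was meant to rest on.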

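Advantages

1. Accuracy and depth:

- Backed by a professional knowledge base, the AI model can give markedly more accurate and in-depth answers, particularly on complex or specialized topics.

2. Fast access to professional information:

- Users can quickly obtain detailed, reliable information in specialized fields such as medicine, law, or engineering.

3. Suited to professional consulting:

- The approach is a good fit for consulting scenarios that depend on expertise or detailed data, such as scientific research, data analysis, or industry-specific questions.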

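Conclusion

- The value of Langchain:

- Connecting a knowledge base (Langchain) is an efficient prompting method that yields accurate, in-depth answers within a specific domain. By integrating professional knowledge bases, the AI model gives users more thorough and reliable solutions, which increases its value in professional consulting and data analysis.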

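💯Negative Prompt

- Definition:

- A negative prompt is a prompting method that steers the AI model away from certain kinds of errors or undesired behavior by supplying incorrect or unwanted examples.

- Purpose:

- The main purpose is to state explicitly what the AI should not do, thereby improving the quality and accuracy of its output.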

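How it works

- 1. State the unwanted output explicitly:

- In the prompt, the user spells out which kinds of answers or behaviors are unacceptable, so the model can avoid them.

- 2. Provide counterexamples:

- Give the AI concrete counterexamples so it understands which kinds of responses to avoid in a given situation.

- 3. Error avoidance by the AI:

- The model adjusts its behavior according to these negative cues, so that it does not repeat the same mistakes in later responses.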
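As a rough sketch of steps 1 and 2, the helper below assembles a prompt from a task, a list of explicit prohibitions, and optional counterexamples. The function name `build_negative_prompt`, its parameters, and the exact wording of the prompt are hypothetical choices made for illustration; any phrasing that states the unwanted behavior clearly should serve the same purpose.

```python
def build_negative_prompt(task, avoid, bad_examples):
    """Combine a task with explicit 'do not' rules and counterexamples."""
    lines = [task, "", "Do NOT do any of the following:"]
    lines += [f"- {rule}" for rule in avoid]
    if bad_examples:
        # Counterexamples show the model what an unacceptable answer looks like.
        lines += ["", "Examples of answers to avoid:"]
        lines += [f'- "{example}"' for example in bad_examples]
    return "\n".join(lines)


prompt = build_negative_prompt(
    task="Explain quantum computing to a general audience.",
    avoid=["Use complex technical jargon", "Include mathematical formulas"],
    bad_examples=["Qubits occupy a superposition of basis states in a Hilbert space."],
)
print(prompt)
```

Keeping the prohibitions in a list rather than buried in a sentence makes them easy to reuse across prompts and to extend when a new failure mode shows up.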

应用实例

- 场景示例:

- 用户希望AI在回答问题时避免使用过于技术性的术语,以便让回答更加易懂。

- Negative Prompt的应用:

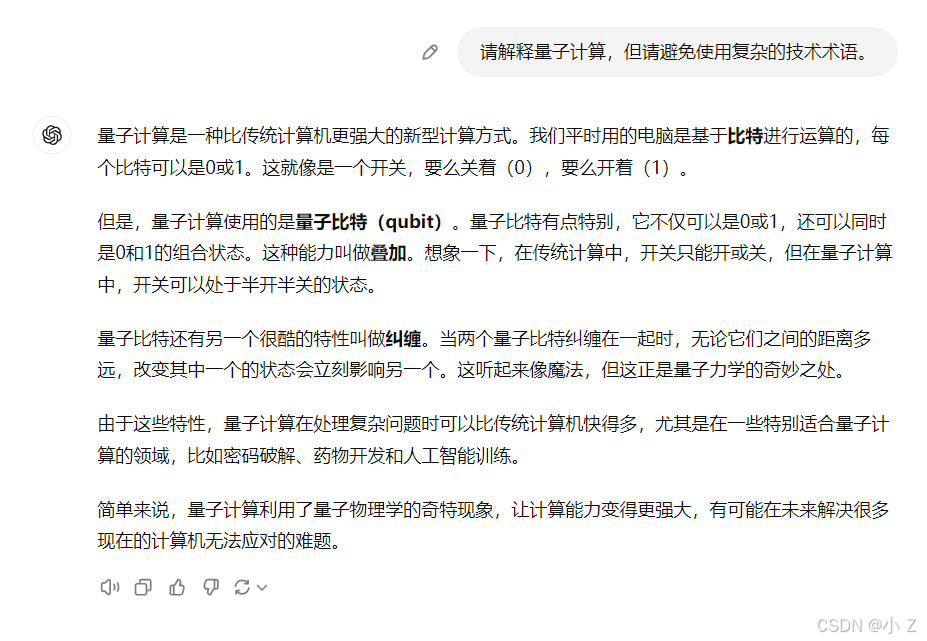

- 用户请求: “请解释量子计算,但请避免使用复杂的技术术语。”

- AI模型回答: “量子计算是一种利用量子位而不是传统比特进行计算的技术,它允许更高效地处理复杂的计算,但在解释中避免了复杂的物理学术语。”

- 结果总结:

- 在这一过程中,AI成功避免了使用深奥的技术术语,直接以通俗易懂的方式回应了用户的需求。
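Whether the model actually respected the negative prompt can be checked mechanically, at least for a known list of terms. The sketch below assumes a hand-picked jargon list (`BANNED`) and two sample answers written for this illustration; it only detects listed terms and cannot judge whether an answer is easy to understand in general.

```python
import re


def find_banned_terms(answer, banned_terms):
    """Return the banned terms that appear in the answer (case-insensitive, whole words)."""
    found = []
    for term in banned_terms:
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, answer, flags=re.IGNORECASE):
            found.append(term)
    return found


# Hypothetical jargon list; choose terms that fit your audience.
BANNED = ["superposition", "entanglement", "Hilbert space"]

plain = "Quantum computing uses quantum bits instead of ordinary bits to handle complex calculations."
jargon = "Qubits exploit superposition and entanglement."

print(find_banned_terms(plain, BANNED))   # []
print(find_banned_terms(jargon, BANNED))  # ['superposition', 'entanglement']
```

If the check finds banned terms, one option is to resend the request with the offending terms added to the prompt as explicit counterexamples.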

优势

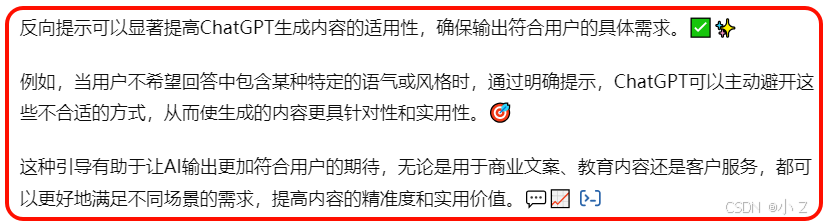

- 1. 提高输出的适用性:

- 通过避免不希望的回答方式,使AI的输出更符合用户的实际需求,从而提升内容的针对性和实用性。

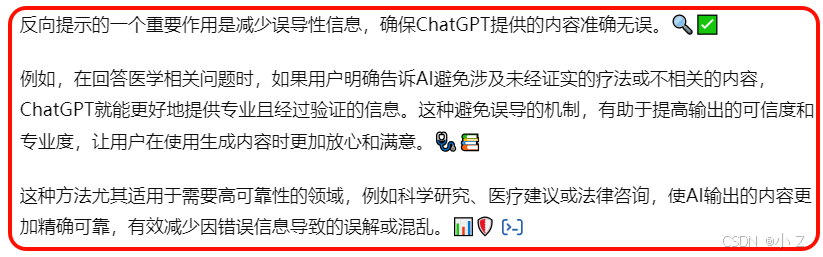

- 2. 减少误导性信息:

- 反向提示可以有效避免提供可能误导用户的错误信息或不相关的内容,从而提高输出的准确性和可信度。

- 3. 适应特定应用场景:

- 特别适用于需要避免特定类型错误的场景,例如教育和咨询服务等,确保在这些情境中AI能够提供更为合适的内容。

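Conclusion

- The negative prompt is an efficient prompting method, particularly suited to making AI output more appropriate and accurate.

- By stating explicitly which behaviors or answer styles are unwanted, the user can guide the model to meet their needs more precisely while avoiding possible misunderstanding or misdirection.

- The method matters for the practicality and credibility of AI in specific application scenarios, helping it handle complex requirements and give answers that match user expectations.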

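💯Redefining a Concept (Concept of Redefinition)

- Definition:

- Concept redefinition (Concept of Redefinition) is a prompting method that reinterprets or redefines a specific concept in order to adjust or change the AI model's understanding of it.

- Purpose:

- When the existing definition of a concept is imprecise, outdated, or unsuited to the current context, this method adjusts the model's understanding of the concept so that the output better fits the situation at hand.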

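How it works

- 1. Reinterpret the concept:

- The user supplies a new or improved definition to replace or correct the model's existing understanding of the concept.

- 2. Guide the AI to adopt the new definition:

- The model adjusts its answers and behavior to the new definition, so that it better meets the user's specific needs.

- 3. Apply it in a specific context:

- A redefined concept usually applies to a particular scenario or context, which keeps the output accurate and relevant.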

应用实例

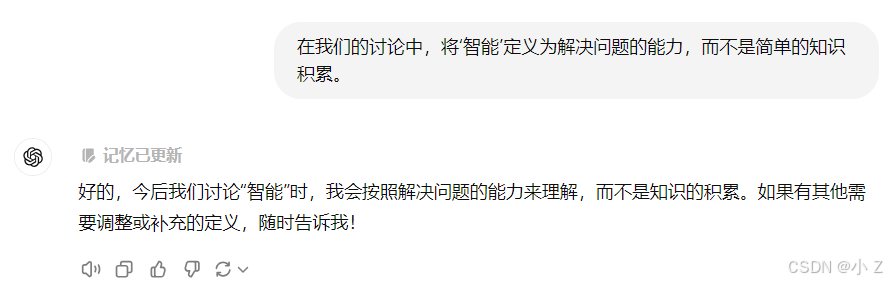

- 比如,用户希望AI在讨论“智能”时,将其重新定义为“解决问题的能力”,而不是传统的“知识积累”。在这种情况下,应用可能是:

- 用户:“在我们的讨论中,将‘智能’定义为解决问题的能力,而不是简单的知识积累。”

- AI模型:“明白了,在这个上下文中,我会将‘智能’理解为解决问题的能力,这意味着……”

- 在整个过程中,AI根据用户的指引调整了对“智能”这一概念的理解,从而更好地适应用户特定的定义需求。
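The exchange above can also be set up programmatically by pinning the redefined terms in a system message. The sketch below assumes the common role/content chat message format; the helper name `build_messages`, the instruction wording, and the sample question are hypothetical and only illustrate the idea.

```python
def build_messages(definitions, user_question):
    """Return chat messages whose system message pins down the redefined terms."""
    rules = "\n".join(
        f'- "{term}" means: {meaning}' for term, meaning in definitions.items()
    )
    system = (
        "For this conversation, use the following definitions. "
        "They override the usual meaning of each term.\n" + rules
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user_question},
    ]


messages = build_messages(
    {"intelligence": "the ability to solve problems, not the accumulation of knowledge"},
    "Which is more intelligent: an encyclopedia or a crow that opens a latch?",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Placing the definition in the system message, rather than in a single user turn, is one way to keep it in force for the whole conversation, which matches the point that a redefinition applies to a specific context.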

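Advantages

- 1. Flexible adjustment of concepts:

- Users can adjust the AI's understanding of a specific concept to suit their needs, bringing its behavior closer to what they expect.

- 2. More relevant conversation:

- A precise definition keeps the AI's answers closer to the user's actual needs and context, so the conversation stays effective and on topic.

- 3. Adaptation to specific domains:

- In specialized domains, redefining concepts can markedly improve the professionalism and accuracy of the AI's answers.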

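Conclusion

- Concept redefinition (Concept of Redefinition) is a powerful prompting method, especially where a concept must be adjusted to a specific context or user need.

- With it, the user can make sure the AI's understanding of a particular scenario is more precise, which leads to more relevant and satisfying answers and improves both the efficiency of the interaction and user satisfaction.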

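💯Summary

In generative AI applications, improving the quality and reliability of model output is a critical problem. This article covered three efficient prompt-writing methods: connecting a knowledge base, negative prompting, and concept redefinition. They can improve the accuracy and depth of AI answers, steer the model away from inappropriate output, and adjust its understanding of a specific problem through redefined concepts. As AI spreads to more fields, these techniques help improve output quality and give users more reliable and precise answers; in scenarios that depend on professional knowledge, they markedly increase the AI's practicality and credibility.

- Looking ahead for ChatGPT, as generative AI continues to develop, efficient prompt writing will be a key driver of progress in AI technology. Connecting a knowledge base gives the AI a stronger professional background and lets it provide deeper, more accurate answers; negative prompts steer it away from errors and inappropriate output and improve the user experience; concept redefinition gives it the flexibility to adjust its understanding across contexts. In the future, ChatGPT will not only become more capable and efficient but also serve as an indispensable professional assistant across industries, helping people reach new heights in knowledge exploration, decision-making, and innovation.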

```python
import json
import logging
import os
import queue
import random
import threading
import time
import traceback

from openai import OpenAI

logging.basicConfig(level=logging.INFO, format="%(asctime)s - %(levelname)s - %(message)s")

# Assumes the openai>=1.0 SDK. The legacy text-davinci-003 model is retired,
# so gpt-3.5-turbo-instruct is used on the same completions endpoint.
client = OpenAI(api_key=os.getenv("OPENAI_API_KEY", "YOUR_API_KEY"))


def ai_agent(prompt, temperature=0.7, max_tokens=2000, stop=None, retries=3):
    """Send one prompt to the completions endpoint, retrying on failure."""
    for attempt in range(retries):
        try:
            response = client.completions.create(
                model="gpt-3.5-turbo-instruct",
                prompt=prompt,
                temperature=temperature,
                max_tokens=max_tokens,
                stop=stop,
            )
            logging.info(f"Agent Response: {response}")
            return response.choices[0].text.strip()
        except Exception as e:
            logging.error(f"Error occurred on attempt {attempt + 1}: {e}")
            traceback.print_exc()
            time.sleep(random.uniform(1, 3))  # Brief random pause before the next attempt
    return "Error: Unable to process request"


class AgentThread(threading.Thread):
    """Run one prompt in its own thread and put the result on a shared queue."""

    def __init__(self, prompt, temperature=0.7, max_tokens=1500, output_queue=None):
        super().__init__()
        self.prompt = prompt
        self.temperature = temperature
        self.max_tokens = max_tokens
        self.output_queue = output_queue if output_queue else queue.Queue()

    def run(self):
        try:
            result = ai_agent(self.prompt, self.temperature, self.max_tokens)
            self.output_queue.put({"prompt": self.prompt, "response": result})
        except Exception as e:
            logging.error(f"Thread error for prompt '{self.prompt}': {e}")
            self.output_queue.put({"prompt": self.prompt, "response": "Error in processing"})


if __name__ == "__main__":
    prompts = [
        "Discuss the future of artificial general intelligence.",
        "What are the potential risks of autonomous weapons?",
        "Explain the ethical implications of AI in surveillance systems.",
        "How will AI affect global economies in the next 20 years?",
        "What is the role of AI in combating climate change?",
    ]
    threads = []
    results = []
    output_queue = queue.Queue()
    start_time = time.time()

    # Start one thread per prompt, each with randomized sampling settings.
    for prompt in prompts:
        temperature = random.uniform(0.5, 1.0)
        max_tokens = random.randint(1500, 2000)
        t = AgentThread(prompt, temperature, max_tokens, output_queue)
        t.start()
        threads.append(t)

    for t in threads:
        t.join()

    # Drain the queue once every thread has finished.
    while not output_queue.empty():
        results.append(output_queue.get())

    for r in results:
        print(f"\nPrompt: {r['prompt']}\nResponse: {r['response']}\n{'-' * 80}")

    total_time = round(time.time() - start_time, 2)
    logging.info(f"All tasks completed in {total_time} seconds.")
    logging.info(
        f"Final Results: {json.dumps(results, indent=4)}; "
        f"Prompts processed: {len(prompts)}; Execution time: {total_time} seconds."
    )
```

This article is part of the Tencent Cloud self-media syndication program and is shared from the author's personal site/blog.

Originally published: 2024-10-23. In case of infringement, contact cloudcommunity@tencent.com for removal.
