Overview

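This guide uses two TI-ONE modules: the development machine and Task-based Modeling. The development machine is used to download the model and the training framework and to set up the related scripts; Task-based Modeling is used to launch the training job.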

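The training framework in this guide is the open-source LLaMA-Factory, an easy-to-use and efficient platform for training and fine-tuning LLMs. It provides example DeepSpeed configuration files for the different ZeRO stages (such as ZeRO-2 and ZeRO-3) and ships several preset datasets, so the training workflow works out of the box.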

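DeepSpeed can configure multi-node compute resources through OpenMPI-style hostfiles, and the tilearn-llm0.9-torch2.3-py3.10-cuda12.4-gpu image preset in Task-based Modeling supports the MPI communication mode. This guide therefore uses that image as the example to show how to run distributed training with DeepSpeed.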
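
For orientation, a hostfile of this kind lists one node per line in the form `hostname slots=N`, where `slots` is the number of GPUs available on that node. The sketch below parses such text into a mapping. The host names `worker-0` and `worker-1` are hypothetical, and defaulting to one slot when `slots=` is missing is an assumption made for this illustration only.

```python
from __future__ import annotations


def parse_hostfile(text: str) -> dict[str, int]:
    """Parse OpenMPI-style hostfile text into {hostname: slots}."""
    hosts: dict[str, int] = {}
    for raw_line in text.splitlines():
        # Drop trailing comments and surrounding whitespace.
        line = raw_line.split("#", 1)[0].strip()
        if not line:
            continue
        fields = line.split()
        hostname = fields[0]
        slots = 1  # Assumption for this sketch: one slot if none is declared.
        for field in fields[1:]:
            if field.startswith("slots="):
                slots = int(field.split("=", 1)[1])
        hosts[hostname] = slots
    return hosts


if __name__ == "__main__":
    # Hypothetical two-node layout with one GPU per node.
    sample = "worker-0 slots=1\nworker-1 slots=1\n"
    print(parse_hostfile(sample))
```

Reading /etc/mpi/hostfile inside a running task with this helper is one way to check the node and GPU counts before adjusting the launch flags.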

Prerequisites

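Apply for CFS or GooseFSx: during custom LLM fine-tuning, the model files, training code, and other materials are stored on CFS or GooseFSx, so you need to apply for one of them first. For details, see Cloud File Storage - Creating File Systems and Mount Targets, or Data Accelerator GooseFS.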

Procedure

Preparing Materials

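Note: The TI-ONE platform does not currently support uploading data directly from the console. As a workaround, create a development machine for operations that mounts CFS or GooseFSx, and use it to upload or download the model, training code, and other files.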

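Click Training Workshop > Development Machine > Create to create the development machine used for debugging the training code, with the following settings: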

Image: select any built-in image. This development machine instance is only used to download the model files and training code.

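Machine source: select purchase from the TI-ONE platform with pay-as-you-go billing. To choose from your CVM machines or to use monthly subscription, manage your machine resources as described in Resource Group Management.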

Billing mode: either pay-as-you-go or monthly subscription works. For the billing rules the platform supports, see Billing Overview.

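Storage configuration: select the CFS or GooseFSx file system. The name is the CFS or GooseFSx instance you applied for in Prerequisites, and the path defaults to the root directory /. This setting specifies where the custom model is saved.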

Other settings: nothing needs to be filled in by default.

The final development machine configuration is as follows:

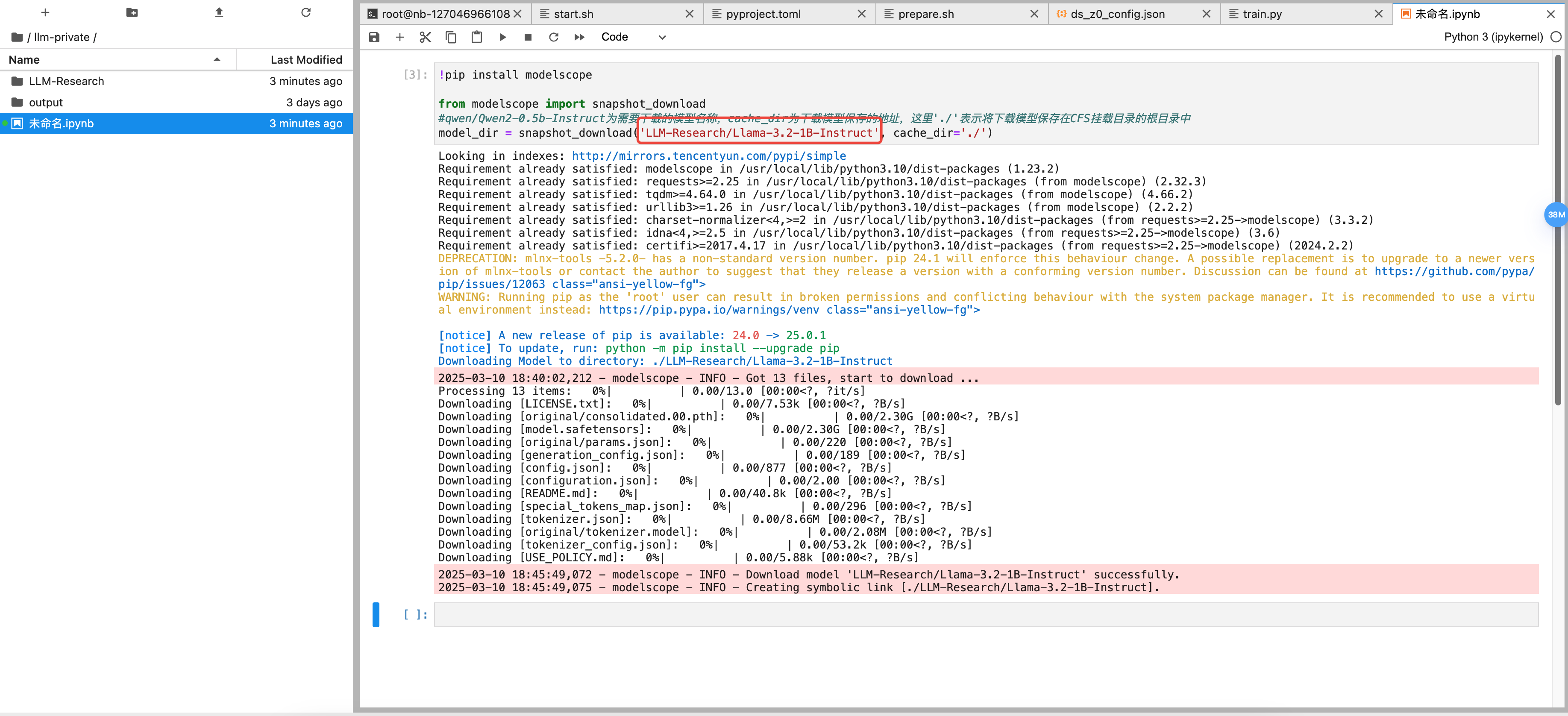

Model Files

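After the machine is created, click Development Machine > Python3 (ipykernel) to download the model with a script. You can search ModelScope or Hugging Face for the model you need and use the Python script provided by the community to download it and save it to CFS or GooseFSx. This guide uses the Llama-3.2-1B-Instruct model as the example; the download code is as follows: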

!pip install modelscope
from modelscope import snapshot_download
# LLM-Research/Llama-3.2-1B-Instruct is the name of the model to download; cache_dir is where the downloaded model is saved. Here './' saves the model in the root of the CFS mount directory.
model_dir = snapshot_download('LLM-Research/Llama-3.2-1B-Instruct', cache_dir='./')

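Note: In the later Task-based Modeling steps, the model downloaded to cache_dir is located at the development machine's mount path plus cache_dir. For example, if the mount path is /dir1 and cache_dir is /dir2, the files are at /dir1/dir2 in CFS or GooseFSx.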

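Copy the download script above, change it to the model you need, paste it into a new ipynb file, and click the Run button to start downloading the model.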
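
The path rule from the note can be sketched as a small helper that joins the mount path and cache_dir the way the note describes. The function name `resolve_storage_path` is hypothetical, and treating a leading slash in cache_dir as relative to the mount path is this sketch's reading of the /dir1 plus /dir2 example.

```python
import posixpath


def resolve_storage_path(mount_path: str, cache_dir: str) -> str:
    """Return where files saved under cache_dir end up in the file system.

    mount_path: the file-system path mounted into the development machine.
    cache_dir:  the cache_dir argument passed to snapshot_download.
    """
    # Treat cache_dir as relative to the mount path even if it starts with "/".
    relative = cache_dir.lstrip("/")
    return posixpath.normpath(posixpath.join(mount_path, relative))


if __name__ == "__main__":
    print(resolve_storage_path("/dir1", "/dir2"))  # the example from the note
    print(resolve_storage_path("/", "./"))         # root mount, cache_dir='./'
```

The resulting path is what you later select as the source when mounting the pre-trained model into the training task.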

Training Code

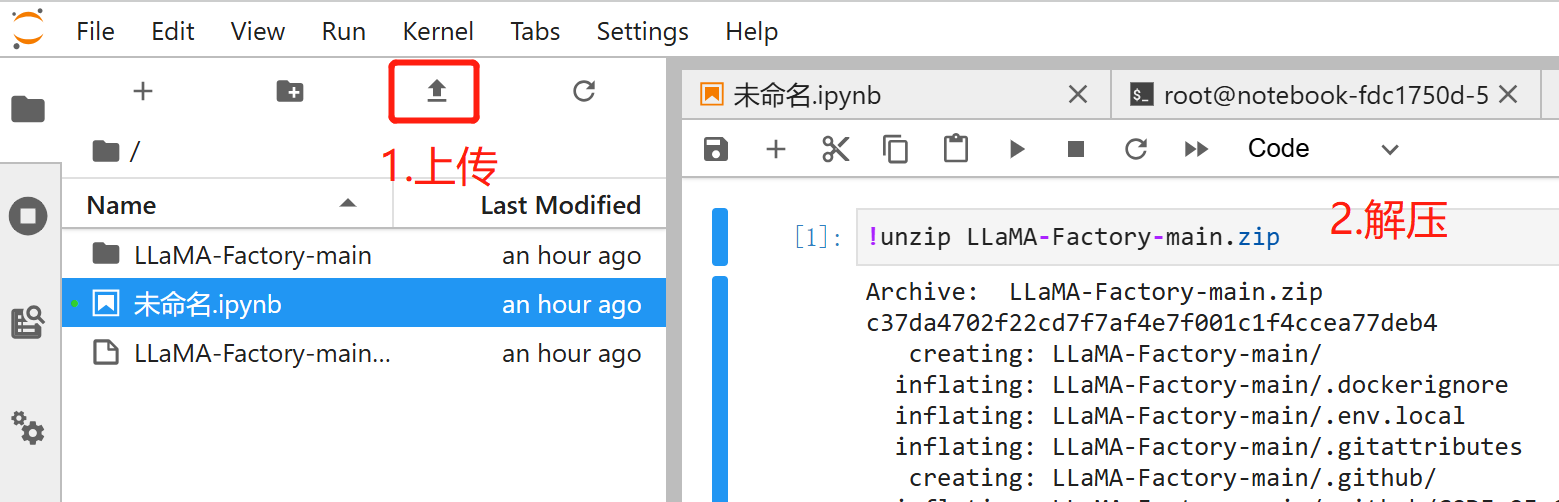

Downloading the LLaMA-Factory Code

Download the code directly to CFS with git clone: click Terminal and enter the following command:

git clone https://github.com/hiyouga/LLaMA-Factory.git

If you enabled "SSH connection" when creating the machine, you can also use scp to upload local materials to the development machine.

scp -r -P <port> Llama-3.2-1B-Instruct root@host:/home/tione/notebook/workspace

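Tip: If your files were downloaded with git, you can try deleting the .git directory inside them to reduce the number of files to upload and speed up the transfer.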

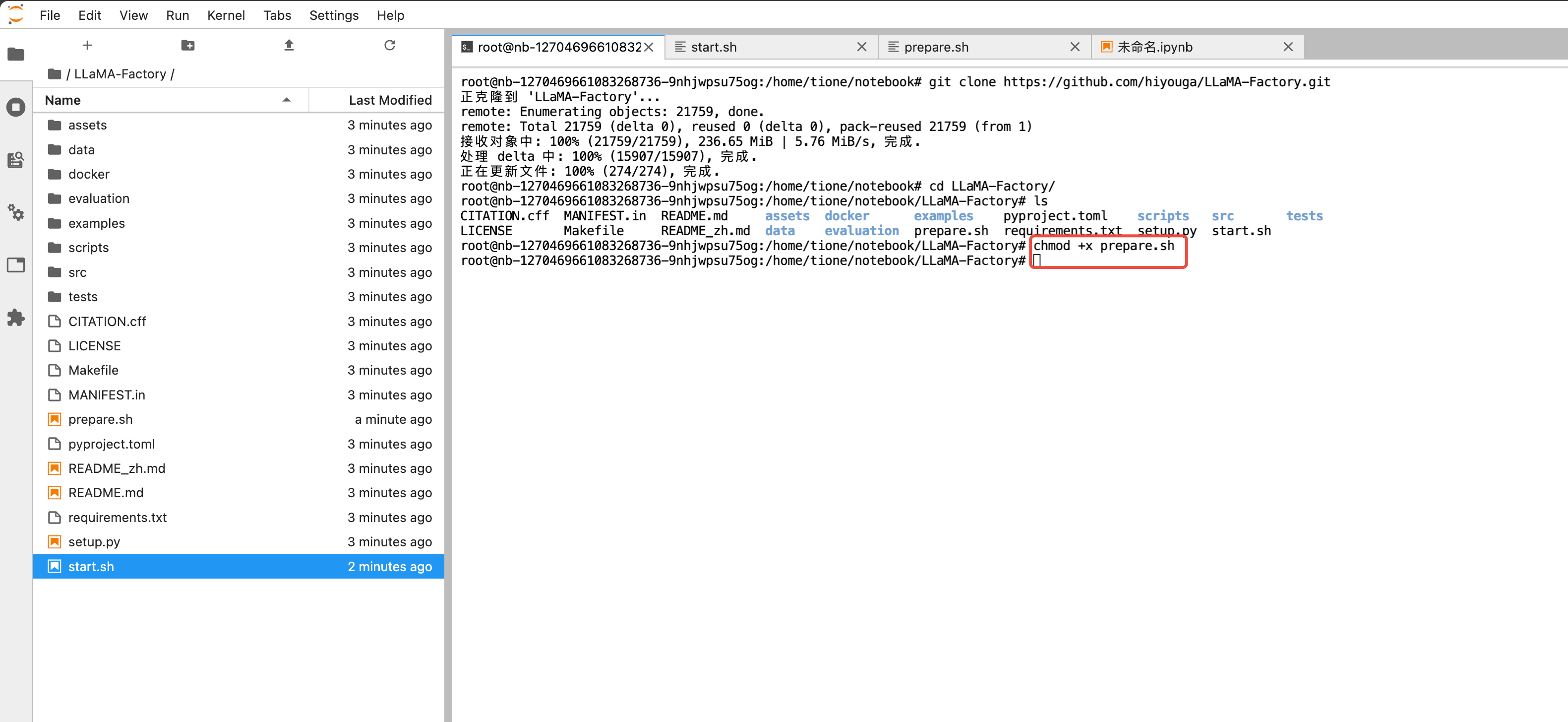

Creating the Dependency Installation Script

DeepSpeed requires a specific transformers version, so we use pip to install the latest DeepSpeed together with the matching transformers.

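In a multi-node setup, the dependencies must be installed or updated on every machine, which can be done with mpirun. mpirun is the OpenMPI command-line tool, and it provides a simple way to launch applications in parallel.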

Create a prepare.sh file in the LLaMA-Factory directory as the dependency installation script, with the following content:

#!/bin/bash
pip3 install -U accelerate==1.0.1 transformers deepspeed -i https://mirrors.tencent.com/pypi/simple

Then grant it execute permission with chmod in the terminal.
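
Because prepare.sh pins only accelerate and upgrades the other packages to whatever is latest, it can help to record which versions actually ended up installed on a node. The following is a minimal sketch using only the Python standard library; the helper name `installed_versions` is hypothetical and not part of LLaMA-Factory or DeepSpeed.

```python
from __future__ import annotations

from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages: list[str]) -> dict[str, str | None]:
    """Map each package name to its installed version, or None if missing."""
    result: dict[str, str | None] = {}
    for name in packages:
        try:
            result[name] = version(name)
        except PackageNotFoundError:
            result[name] = None
    return result


if __name__ == "__main__":
    # The three packages installed by prepare.sh.
    for name, ver in installed_versions(["accelerate", "transformers", "deepspeed"]).items():
        print(f"{name}: {ver if ver is not None else 'not installed'}")
```

Running this after the installation step and keeping the output in the task log makes it easier to reproduce a working combination later.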

Creating the Launch Script

Create a start.sh file in the LLaMA-Factory directory as the training launch script, with the following content:

#!/bin/bash
# Install dependencies
# pip3 install -U accelerate==1.0.1 transformers deepspeed -i https://mirrors.tencent.com/pypi/simple
# Launch DeepSpeed distributed training in MPI mode
# Reference: https://llamafactory.readthedocs.io/zh-cn/latest/advanced/distributed.html#id18
deepspeed --num_gpus $GPU_NUM_PER_NODE --num_nodes 2 --hostfile /etc/mpi/hostfile src/train.py \
--deepspeed examples/deepspeed/ds_z0_config.json \
--stage sft \
--model_name_or_path /opt/ml/pretrain_model \
--do_train \
--dataset identity \
--template llama3 \
--finetuning_type full \
--output_dir /llm-private/output \
--overwrite_cache \
--per_device_train_batch_size 1 \
--gradient_accumulation_steps 8 \
--lr_scheduler_type cosine \
--logging_steps 10 \
--save_steps 500 \
--learning_rate 1e-4 \
--num_train_epochs 10 \
--plot_loss \
--bf16

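Here num_nodes can be adjusted to the actual number of nodes, and $GPU_NUM_PER_NODE is an environment variable injected by Task-based Modeling that gives the number of GPUs per node.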

/etc/mpi/hostfile is created by Task-based Modeling.

model_name_or_path is the model directory; it must be mounted accordingly when the training job is started.

template should be changed to match the model.
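
To make the batch-related flags in start.sh concrete, the sketch below computes the effective global batch size per optimizer step. It assumes every GPU acts as one data-parallel rank, which is the usual case for ZeRO-style data parallelism but is an assumption of this illustration rather than something this guide states. The function name is hypothetical.

```python
def effective_batch_size(
    per_device_train_batch_size: int,
    gradient_accumulation_steps: int,
    gpus_per_node: int,
    num_nodes: int,
) -> int:
    """Samples consumed per optimizer step across all data-parallel ranks."""
    world_size = gpus_per_node * num_nodes
    return per_device_train_batch_size * gradient_accumulation_steps * world_size


if __name__ == "__main__":
    # Values from start.sh, with 1 GPU per node on 2 nodes as in this guide.
    print(effective_batch_size(
        per_device_train_batch_size=1,
        gradient_accumulation_steps=8,
        gpus_per_node=1,
        num_nodes=2,
    ))
```

With the values used in this guide the product is 1 × 8 × 1 × 2 = 16, so changing num_nodes or the GPUs per node also changes the global batch size unless gradient_accumulation_steps is adjusted to compensate.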

Launching the Distributed Fine-tuning Job with Task-based Modeling

Creating the Training Job

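Click Training Workshop > Task-based Modeling > Create Task. Select the built-in training image listed below, set the training mode to "MPI", and choose the resource configuration as needed. This guide runs distributed training on 2 nodes.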

Training image: select Built-in Image/PyTorch/tilearn-llm0.9-torch2.3-py3.10-cuda12.4-gpu. This image comes preconfigured with the runtime environment for LLM training.

Machine source: see Development Machine > Computing Resources.

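Compute specification: choose training resources appropriately. Here we select 1 GPU per node (0.5 of a card is allocated, but it appears as one GPU) and use 2 nodes.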

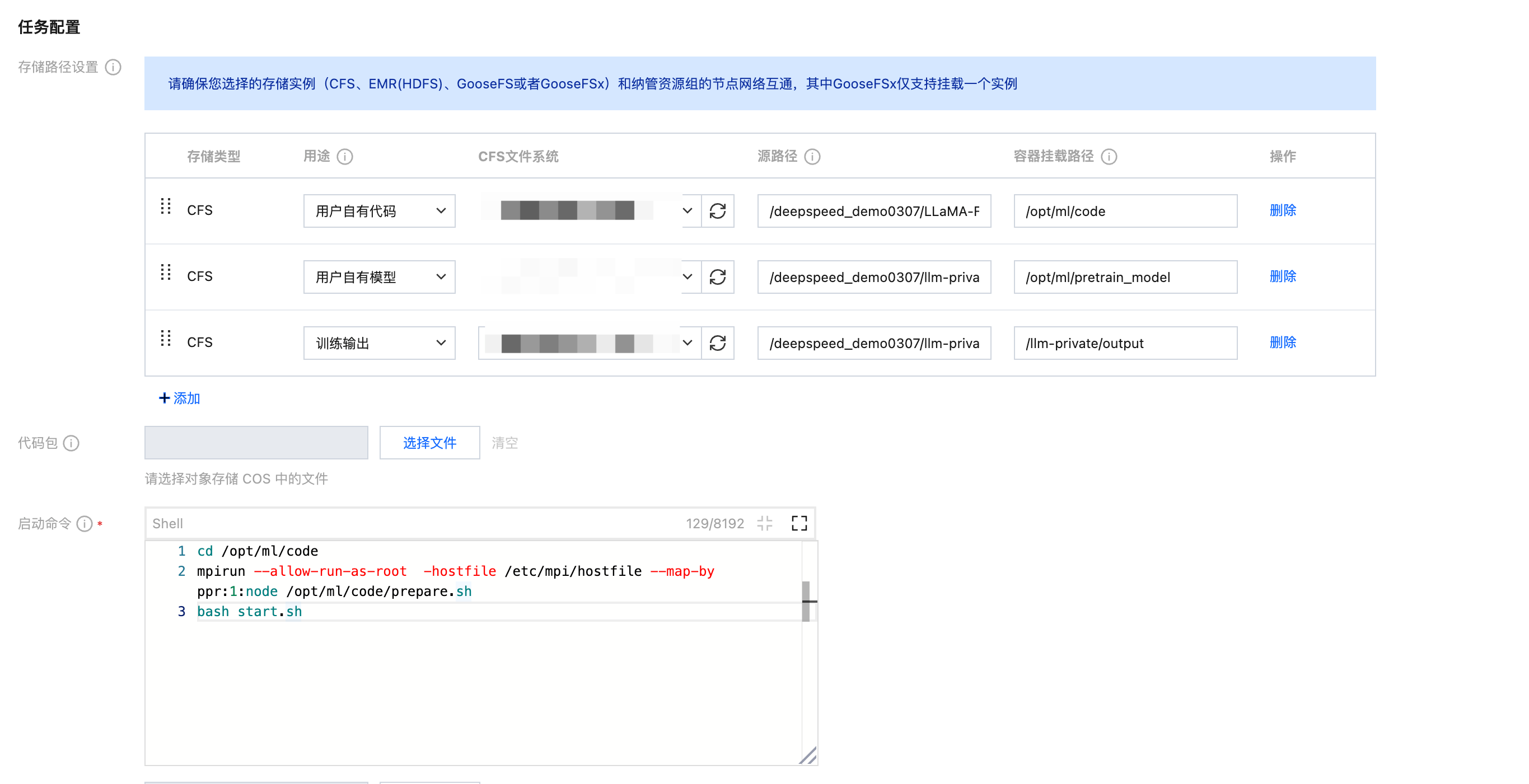

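Storage path configuration: the training code, the pre-trained model, and the training output path need to be mounted here, and the container mount paths correspond to the matching parameters in the training configuration above. (Alternatively, you can put the training code, pre-trained model, and training output under one parent directory, mount its root into the container, and change the start command to cd into LLaMA-Factory.)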

Start command (we first run the dependency installation script through mpirun, then start training):

cd /opt/ml/code
mpirun --allow-run-as-root -hostfile /etc/mpi/hostfile --map-by ppr:1:node /opt/ml/code/prepare.sh
bash start.sh

Checking Training Status

After the job starts, click Logs to view the training logs and other information.

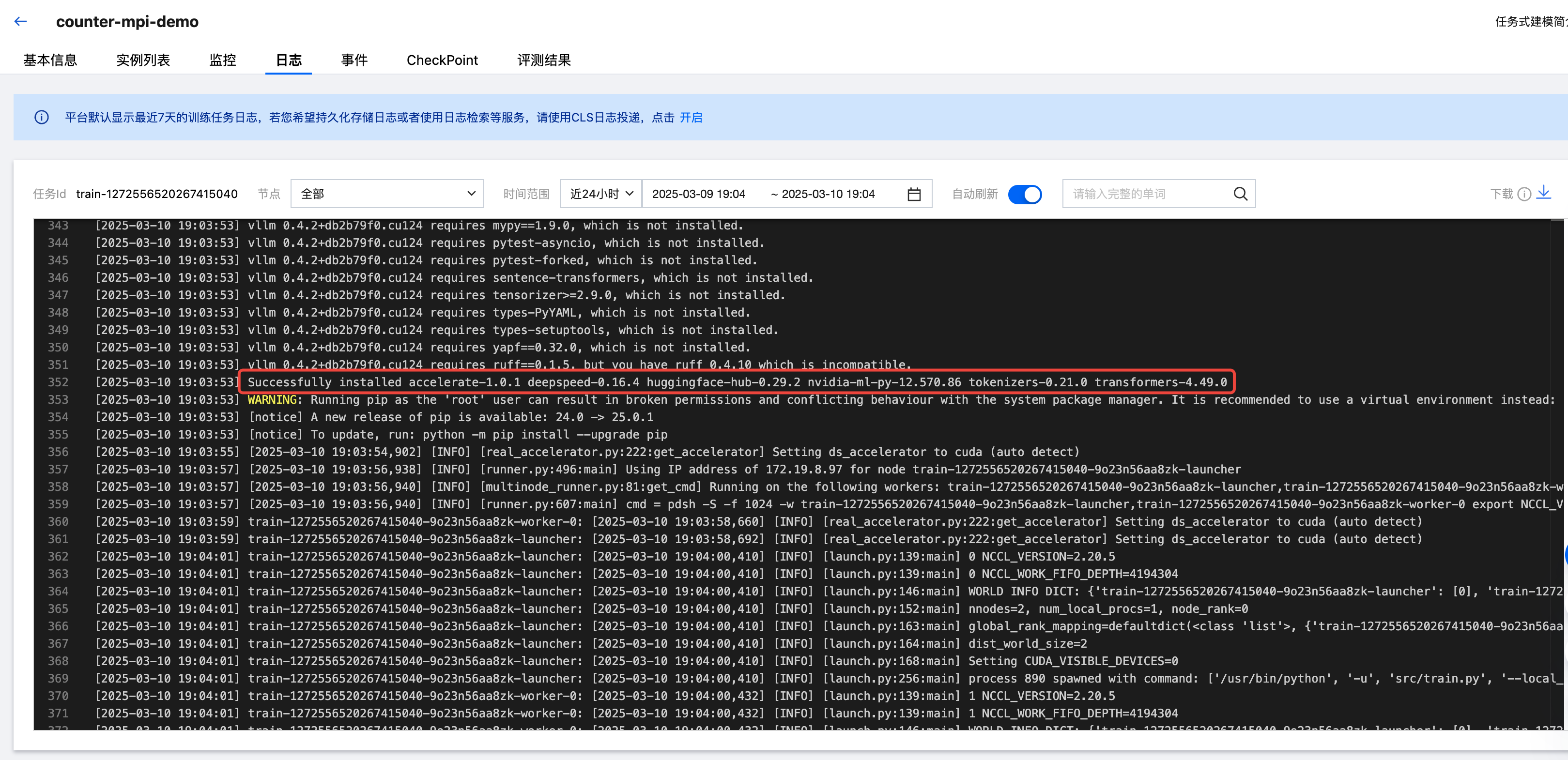

Installing dependencies:

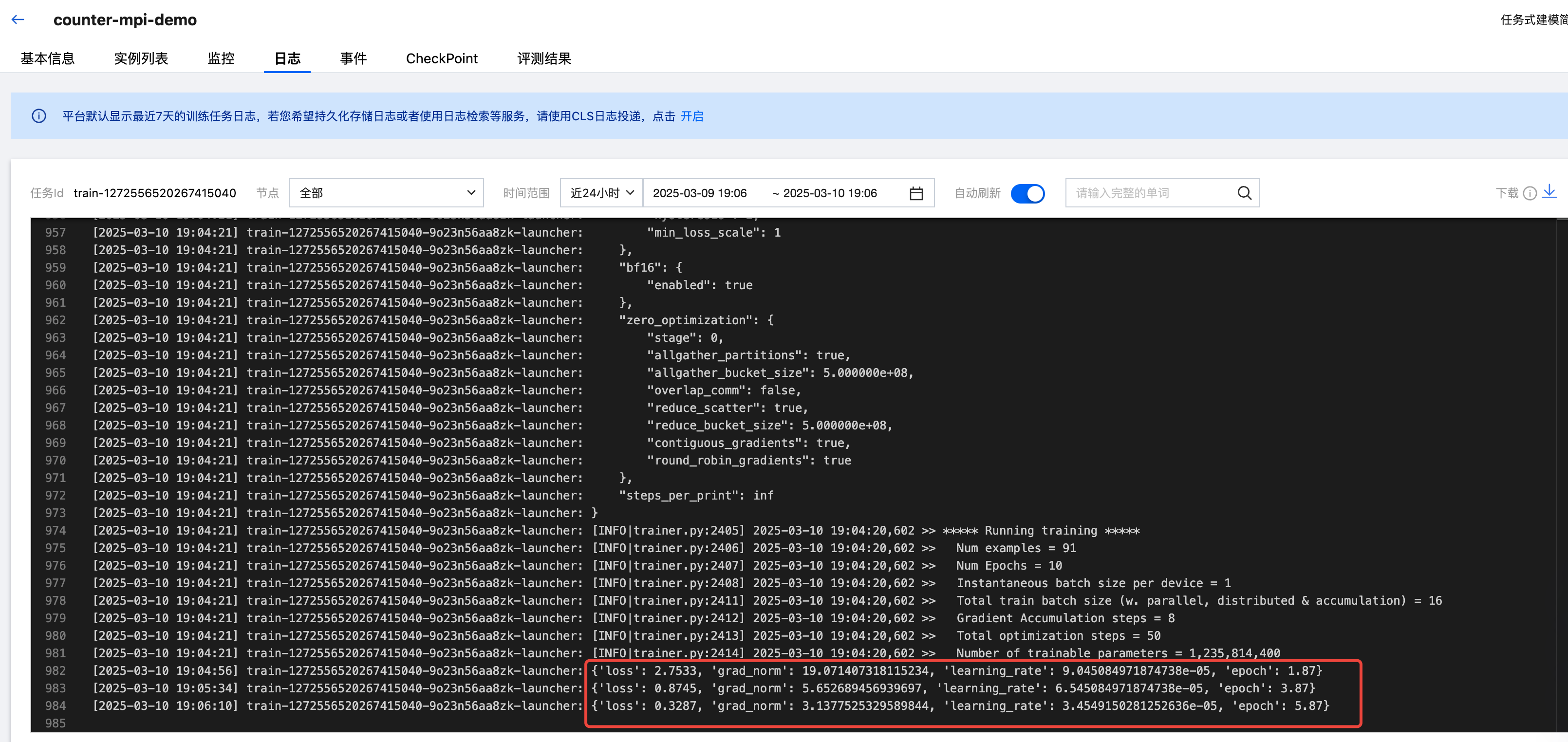

Training in progress:

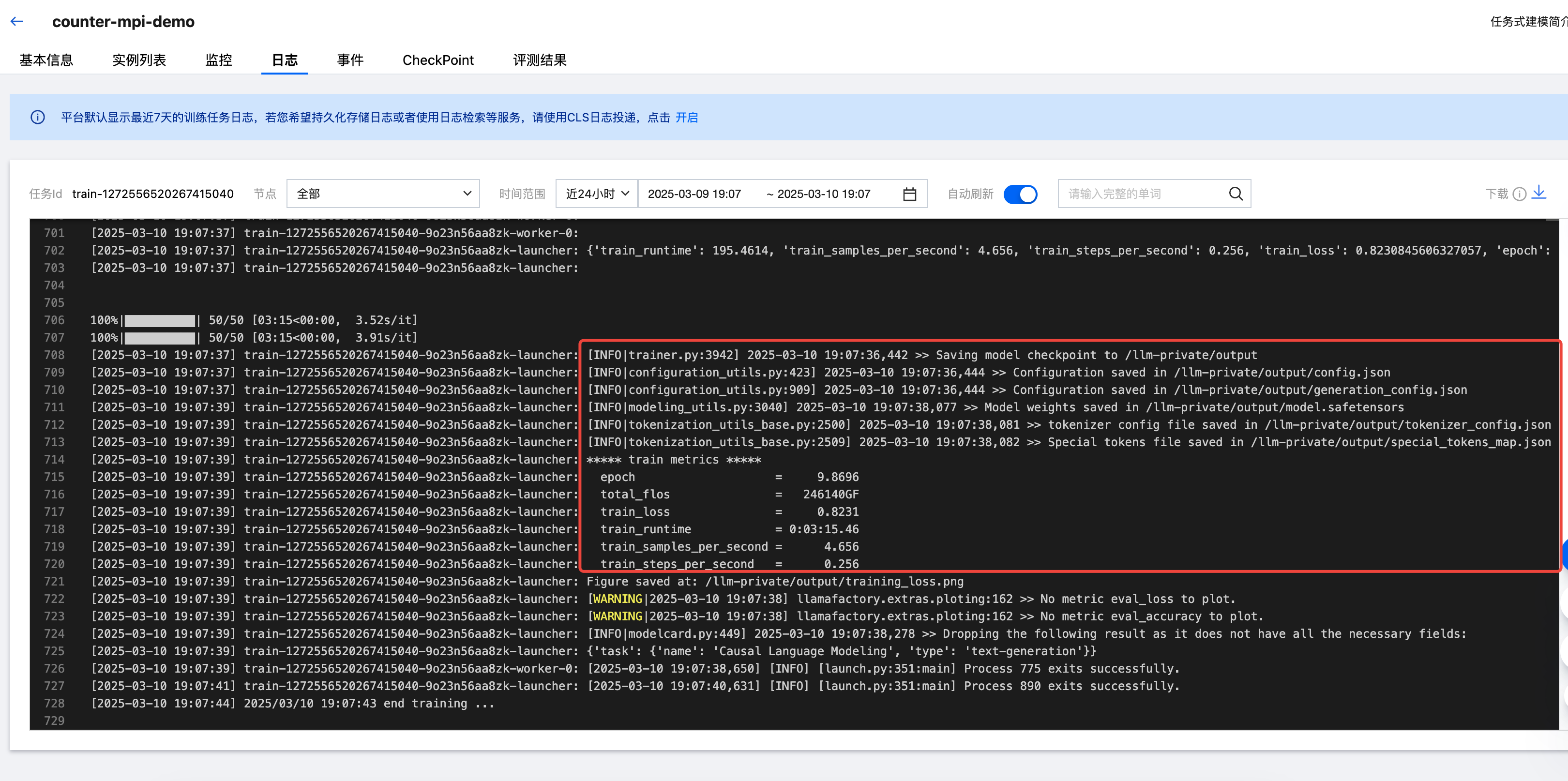

When training finishes, the final checkpoint is saved to the mounted output directory output, which is used later to deploy the fine-tuned model.

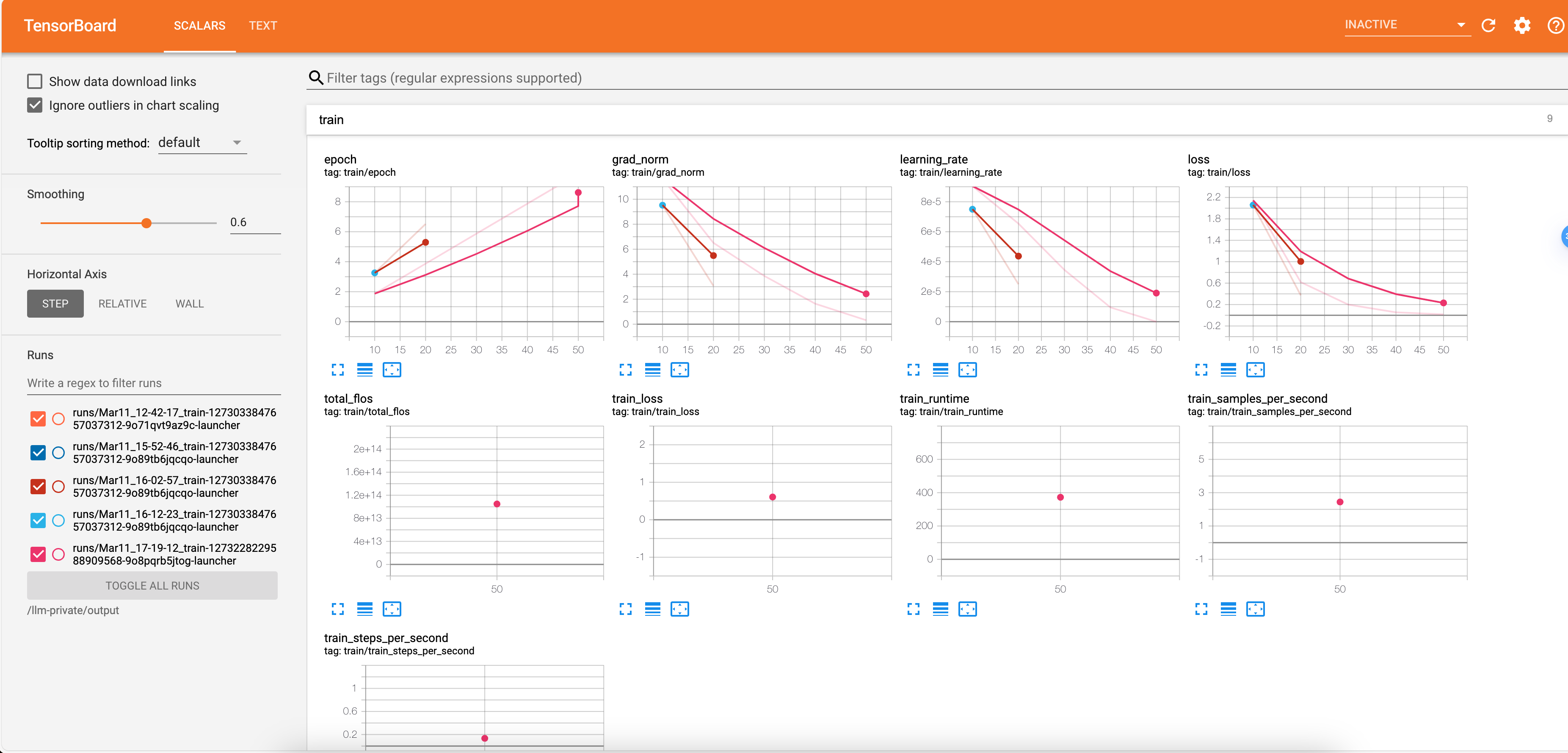
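
If the run is long enough for save_steps to trigger, the output directory may also contain intermediate checkpoints. The sketch below picks the one with the highest step number, assuming the Hugging Face Trainer convention of subdirectories named checkpoint-<step>; that naming is an assumption about the output layout, not something shown in this guide, and `latest_checkpoint` is a hypothetical helper.

```python
from __future__ import annotations

import re
from pathlib import Path

# Assumed naming convention for intermediate checkpoints: checkpoint-<step>.
_CHECKPOINT_PATTERN = re.compile(r"^checkpoint-(\d+)$")


def latest_checkpoint(output_dir: str | Path) -> Path | None:
    """Return the checkpoint-<step> subdirectory with the largest step, if any."""
    best_path: Path | None = None
    best_step = -1
    for child in Path(output_dir).iterdir():
        match = _CHECKPOINT_PATTERN.match(child.name)
        if match is None or not child.is_dir():
            continue
        step = int(match.group(1))
        if step > best_step:
            best_step, best_path = step, child
    return best_path


if __name__ == "__main__":
    # /llm-private/output is the --output_dir used in start.sh.
    output_dir = Path("/llm-private/output")
    if output_dir.is_dir():
        print(latest_checkpoint(output_dir))
```

Comparing step numbers as integers avoids the lexical-ordering trap where checkpoint-500 would sort after checkpoint-1000.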

TensorBoard

You can also click the Tensorboard button in the Actions column of the task list to configure a TensorBoard task. The path is the model training output path in CFS, /project/output.

After you confirm, you can view the loss, gradients, and other metrics of the training process.